If you've searched for an independent breakdown of the Webris technical SEO audit methodology, you've likely hit a wall. The top results are either Webris's own services page (built to convert, not to educate), a broken 404 from bizfylr.com, or a locked Scribd document preview that shows almost nothing. There's no authoritative, third-party resource that explains what the Webris audit framework includes, how it compares to other audit methods, or whether it's the right fit for your business. This article fills that gap.

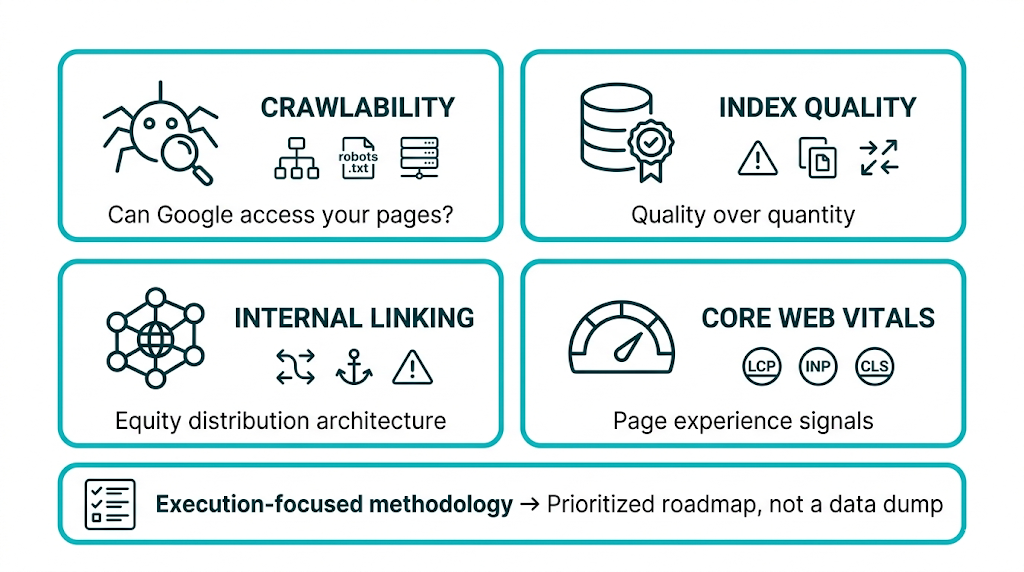

The bottom line: The Webris technical SEO audit is a structured, execution-focused methodology built around crawlability, index quality, internal linking architecture, and Core Web Vitals. It's built as the diagnostic first step in a managed SEO growth system, not a standalone deliverable. The approach prioritises consolidating index quality over expanding index size, and it turns findings into a prioritised roadmap instead of a raw data dump. For SEO managers and marketing directors evaluating whether to hire a full-service partner, run audits in-house, or use automated tools, it helps to know what this framework covers - and where it stops - before committing budget.

This guide is written for sophisticated practitioners. We'll cover the full Webris framework, flag where it excels, and compare it against DIY tools, automated platforms, and boutique agency audits. We'll also walk through how to run your own audit using the same core principles, so you leave with usable next steps regardless of which direction you choose.

What Is a Webris Technical SEO Audit and Who Is It For?

Webris is a Philadelphia-based SEO agency that has built its reputation primarily around law firm SEO and competitive B2B verticals. Their technical SEO audit isn't a templated checklist product you purchase off a shelf. It's the diagnostic entry point into their broader managed SEO service. That distinction matters when you're evaluating what you're buying.

The audit framework Webris uses is built around a core premise: most websites don't have a content problem first, they have a crawlability and index quality problem. That's the starting point. Before any content strategy, link building campaign, or keyword targeting can deliver, search engines have to find, crawl, and correctly evaluate your pages. Webris's audit methodology starts there and builds outward.

Who is it for? It's designed for businesses that are ready to hand off SEO execution entirely. Webris's service page positions the agency around a "Done-For-You Execution" model. They aren't selling an audit report you take back to your in-house team. They sell a diagnostic that feeds straight into their own delivery process.

That makes the Webris approach a strong fit for:

- Law firms and professional services businesses that lack in-house SEO capability and need a partner who owns delivery

- Mid-market companies with existing organic traffic (typically 5,000+ monthly sessions) that are underperforming relative to their domain authority

- Marketing directors who need to show measurable ROI and want documented traffic lifts rather than vanity metrics

- Businesses recovering from algorithm updates or manual penalties where a thorough technical review sets the recovery plan

It fits less cleanly for in-house SEO teams that want to run audits themselves using the same framework. And it doesn't match startups with minimal existing content that need content strategy before technical optimization. The Webris model assumes there's something worth auditing and fixing.

The documented results on Webris's services page reference traffic lifts of +794%, +364%, and +440% across client case studies. Those are big numbers. They also reflect the execution-first model rather than a report-and-walk-away audit. The audit only matters if the fixes ship, and that philosophy sits at the center of the Webris methodology.

The Core Components of the Webris Technical SEO Audit Framework

The Webris technical SEO audit covers four primary domains: crawlability and indexation, internal linking architecture, on-page technical signals, and performance metrics including Core Web Vitals. Each domain feeds the next. A crawlability problem can hide an internal linking problem. A bloated index can pull down performance signals on your strongest pages. What separates a real audit from a tool dump is tracking how these pieces interact, then fixing them in the right order.

Crawlability and Indexation: The Non-Negotiable Foundation

According to Google Search Central's crawling and indexing documentation, Googlebot discovers URLs through sitemaps, internal links, and external backlinks, then decides independently whether to crawl and index those URLs based on crawl budget, server response, and content quality signals. Two distinct failure modes show up here, and most audits lump them together. That mistake changes the fix, the timeline, and the outcome.

The first failure mode: not crawled at all. Common causes are straightforward: robots.txt blocks, missing sitemap entries, orphaned URLs with no internal links, or pages stuck behind JavaScript rendering Googlebot won't process. The fix is structural. We open crawl paths, correct sitemap inclusions, and add internal links to the affected URLs.
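The robots.txt cause is the easiest of these to check programmatically. As a minimal sketch, Python's standard-library `urllib.robotparser` can test a list of URLs against a robots.txt file. The rules and URLs below are hypothetical, and the standard-library parser relies on simple prefix matching, so it may not mirror Googlebot's wildcard handling exactly.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in a real audit, load the live file instead.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
"""

def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given user agent is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]

urls = [
    "https://example.com/services/",
    "https://example.com/admin/login",
    "https://example.com/search?q=audit",
]
print(blocked_urls(ROBOTS_TXT, urls))
```

Any priority page that shows up in this list belongs at the top of the fix queue, because nothing else in the audit applies until the block is lifted.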

The second failure mode: crawled but not indexed. This is where Webris's framework earns its depth. Google's Search Central documentation explicitly lists reasons a crawled page won't be indexed: thin content, duplicate content (including near-duplicate), soft 404 signals, noindex tags applied incorrectly, and canonicalization conflicts. A page in the "Crawled - currently not indexed" report in Google Search Console is a different class of problem than a page that never gets crawled. Treating those the same ranks as one of the most expensive technical SEO errors we see.

Those two populations drive two different remediation tracks. For uncrawled pages, we prioritize access and discovery. For crawled-but-not-indexed pages, we prioritize content quality signals and canonicalization hygiene. On sites past a few hundred pages, this separation does more than tidy up reporting - it stops teams from spending weeks fixing the wrong thing.
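One way to make that split concrete is to route each URL to a track based on its GSC indexing status. The sketch below is illustrative only: the status strings are ones GSC reports, but the track names and the mapping itself are assumptions for this example, not a published Webris specification.

```python
from collections import defaultdict

# Mapping from GSC status to remediation track (an illustrative assumption).
TRACK_BY_STATUS = {
    "Discovered - currently not indexed": "access_and_discovery",
    "Blocked by robots.txt": "access_and_discovery",
    "Crawled - currently not indexed": "quality_and_canonicalization",
    "Duplicate without user-selected canonical": "quality_and_canonicalization",
    "Soft 404": "quality_and_canonicalization",
}

def split_by_track(rows):
    """Group (url, status) pairs by track; unknown statuses go to manual review."""
    tracks = defaultdict(list)
    for url, status in rows:
        tracks[TRACK_BY_STATUS.get(status, "manual_review")].append(url)
    return dict(tracks)

rows = [
    ("/practice-areas/injury/", "Crawled - currently not indexed"),
    ("/blog/new-post/", "Discovered - currently not indexed"),
    ("/tag/misc/", "Soft 404"),
    ("/feed/", "Page with redirect"),
]
for track, track_urls in split_by_track(rows).items():
    print(track, track_urls)
```

Keeping a catch-all bucket for unrecognised statuses avoids silently forcing a URL into the wrong track.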

That separation also ties directly to data sources. Standard technical SEO audit guides recommend segmenting GSC coverage data before running any crawl tool, because crawl tool data and GSC data answer different questions. Webris follows that order: start with GSC, then layer in Screaming Frog or similar crawl outputs to validate what Google reports and to find the underlying causes at scale.

Internal Linking Architecture: The Most Underrated Audit Component

Internal linking is the most undervalued part of technical SEO audits. Full stop. It's also where automated tools produce the least useful output because they can count links, but they can't judge intent.

A tool can flag a page with zero internal links pointing to it. It won't tell us whether that URL should be a cornerstone page with 50 internal links, or whether it should be merged into another page and retired. That call requires context: keyword targets, conversion value, cannibalization, and how the site actually sells.

A standard technical audit checklist highlights a pattern we see all the time: the homepage and a handful of product or service pages accumulate PageRank through external backlinks, but that equity never reaches pages that target commercial keywords. The link profile isn't the issue. The internal linking architecture is.

Webris's audit framework maps internal link equity distribution across the crawled URL set and flags three problem buckets:

- Orphan pages - URLs with no internal links, invisible to crawlers without a direct sitemap reference

- Link equity dead ends - pages that receive internal links but don't pass equity forward, often due to noindex tags or canonicalization pointing elsewhere

- Diluted anchor text - internal links using generic anchor text ("click here", "read more") that provide no topical signal to Google about the destination page's relevance

Each bucket needs its own fix. Orphan pages get linked from relevant content, or we consolidate and redirect them. Link equity dead ends get a canonicalization and noindex review so equity flows where it should. Diluted anchor text requires a systematic rewrite of internal link copy across the site. It's tedious work. It also moves rankings.
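Two of those buckets, orphan pages and diluted anchor text, can be flagged from a plain list of internal links. This is a rough sketch under stated assumptions: `all_urls` stands in for the known URL set (for example, sitemap URLs), `links` is a hypothetical list of `(source, target, anchor)` tuples from a crawl export, and the generic anchor list contains only the two phrases named above.

```python
# Generic anchors taken from the examples in the text; extend as needed.
GENERIC_ANCHORS = {"click here", "read more"}

def link_problems(all_urls, links):
    """Return (orphans, generic_links) for a set of URLs and internal links."""
    # A URL counts as linked only if some *other* page links to it.
    linked_targets = {target for source, target, _ in links if source != target}
    orphans = sorted(set(all_urls) - linked_targets)
    generic_links = [
        (source, target, anchor)
        for source, target, anchor in links
        if anchor.strip().lower() in GENERIC_ANCHORS
    ]
    return orphans, generic_links

all_urls = ["/", "/services/", "/blog/post-a/", "/old-landing/"]
links = [
    ("/", "/services/", "Our services"),
    ("/services/", "/", "Home"),
    ("/", "/blog/post-a/", "Read more"),
]
orphans, generic_links = link_problems(all_urls, links)
print("Orphans:", orphans)
print("Generic anchors:", generic_links)
```

The script only surfaces candidates. Whether an orphan should be linked, merged, or retired is still the judgment call described earlier.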

On a site with 500+ pages, this internal linking analysis often produces ranking lifts without publishing new content or building new backlinks. That's the point. Webris treats internal linking architecture as a primary audit component, not a throw-in at the end. Moz's research on internal links and SEO confirms that strategic internal linking is one of the most effective ways to distribute page authority across a site and signal topical relevance to search engines.

How the Webris Audit Differs from Generic Crawl-Tool Reports

The core problem with automated audits is simple: they spot issues fast, then dump the prioritization on you. A Screaming Frog crawl of a 10,000-page e-commerce site will spit out thousands of flags. Missing H1 tags. Duplicate meta descriptions. Redirect chains. Mixed content warnings. Large image files. Slow server response times. All real problems. But if you work through them in the order the tool lists them, you burn three months cleaning up low-impact noise while the real ranking blockers sit there untouched.

Semrush's Site Audit tool hits the same wall, even with the nicer UI. It gives you a 0 to 100 score and sorts items into errors, warnings, and notices. The scoring model stays generic, though. It doesn't know your 4,000 redirect chains all resolve cleanly, or that those "missing meta descriptions" live on paginated archive pages that should be noindexed anyway. The tool treats everything like a fire drill. You still have to decide what matters.

That decision-making is where the Webris approach separates from DIY, tool-only audits. The framework uses human judgment to triage. A trained auditor reviews crawl output, the GSC coverage report, server log data where available, and the backlink profile in one pass, then ranks fixes based on what's actually blocking organic growth.

Here's what that looks like on a real-world pattern. An agency runs a Semrush audit on a law firm's site and finds 847 pages with duplicate meta descriptions. Semrush marks it as a high-priority error. A Webris-style audit starts somewhere else: how many of those 847 pages are indexed? How many pull any organic traffic? How many should be indexed at all? On a lot of law firm sites, a big chunk of those duplicate-meta URLs are auto-generated practice area location pages. The right fix isn't rewriting meta descriptions one by one. It's consolidation or a noindex decision.

Standard technical SEO audit guides call this out cleanly: experienced auditors use automated tools to collect data, not to set priorities. Tools surface the raw inputs. Auditors decide what moves rankings.

Execution is the other differentiator. An independent consultant can hand over a 60-page audit and disappear. Then the client has to interpret the recommendations, fit them into their own dev queue, and guess what "fix the canonical tags" means inside their CMS. Webris keeps the auditor and the implementer under the same roof. That closes the gap between diagnosis and deployment, which is where most technical SEO lifts die.

For agencies and SEO managers weighing options, this is the distinction that matters. A technically perfect audit that never ships is worth nothing. A good-enough audit that gets implemented fast is worth a lot.

Index Quality vs Index Size: Why Webris Prioritizes Consolidation Over Expansion

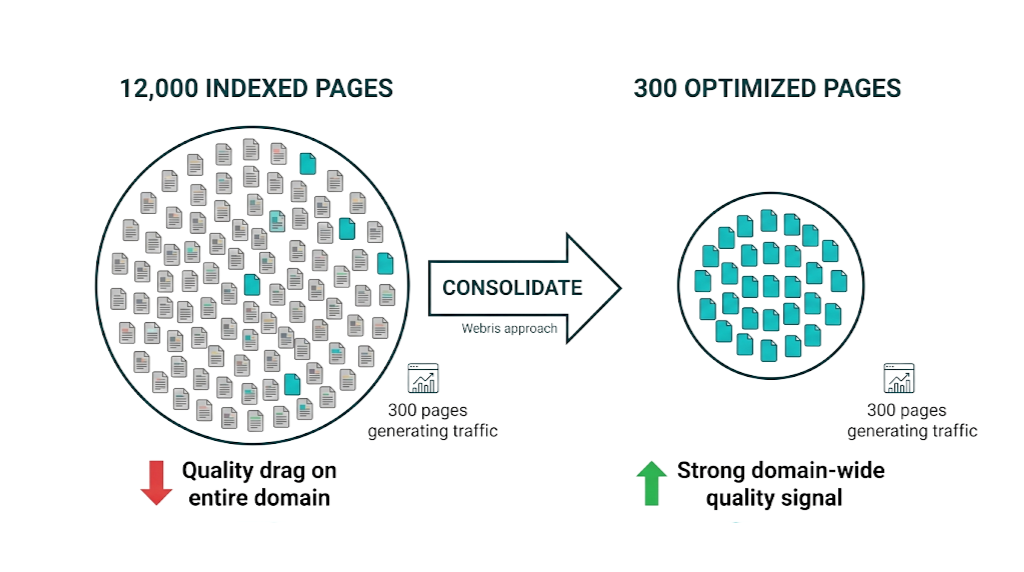

This catches non-technical stakeholders off guard: a site with 12,000 indexed pages where only 300 generate meaningful organic traffic has a real problem. Not because the other 11,700 pages are automatically toxic on their own, but because they drag down the quality signals Google associates with the domain as a whole.

Google evaluates quality at the page level and at the site level. When a large share of indexed URLs are thin, duplicated, or off-topic, you get what SEO practitioners call a site-wide quality drag. Strong pages end up carrying weak ones. The domain-level quality signal drops, and rankings flatten even for pages that deserve to perform.

A standard technical audit checklist treats this as one of the highest-impact findings on large sites. It asks a blunt question: out of your total indexed URL count, what percentage generates at least one click per month in GSC? If it's below 10%, you've got an index quality problem. Links and new content won't solve it until you clean up what's already indexed.
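That percentage is simple arithmetic once you have monthly clicks per indexed URL. A minimal sketch, assuming a hypothetical `monthly_clicks` mapping built from a GSC export, and using the 12,000-page example from above:

```python
def index_quality_ratio(monthly_clicks: dict[str, int]) -> float:
    """Share of indexed URLs that earned at least one click in the month."""
    if not monthly_clicks:
        raise ValueError("no indexed URLs supplied")
    earning = sum(1 for clicks in monthly_clicks.values() if clicks >= 1)
    return earning / len(monthly_clicks)

# Hypothetical site: 12,000 indexed URLs, only the first 300 earn clicks.
monthly_clicks = {f"/page-{i}/": (5 if i < 300 else 0) for i in range(12_000)}

ratio = index_quality_ratio(monthly_clicks)
print(f"{ratio:.1%} of indexed URLs earn clicks")
if ratio < 0.10:  # the 10% rule of thumb quoted in the text
    print("Index quality problem: consolidate before publishing more")
```

For this example the ratio works out to 300 / 12,000 = 2.5%, well under the 10% line.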

Webris's audit framework puts this analysis front and centre. The output includes a segmented review of indexed URLs by traffic status, and the recommendations fall into five categories:

| URL Category | Traffic Status | Recommended Action |
|---|---|---|
| Core pages | Generating consistent clicks | Protect, optimize, build links |
| Orphaned content | Indexed but zero traffic, 6+ months | Consolidate or redirect |
| Auto-generated pages | Indexed, thin, no unique value | Noindex or canonical to parent |
| Duplicate variants | Indexed, near-identical content | Canonical to preferred version |
| Paginated archives | Indexed, no direct search intent | Noindex or rel-next/prev handling |

Most SEO content takes the opposite stance and defaults to "publish more" as the main growth lever. But the data supports consolidation. Removing or folding low-quality indexed content has been shown to lift rankings for the pages you keep, because the domain's overall quality signal improves.

For a marketing director running a large content site, the implication is straightforward: before commissioning 50 new blog posts, run an index quality analysis. If 60% of your indexed URLs drive zero traffic, consolidation comes first. New content on a domain with a quality drag problem won't hit its ceiling. Understanding common indexing issues and how to resolve them is an essential part of this process.

This is one of the clearest Webris outputs, and it's where the audit earns its keep versus a generic crawl report.

Page Speed, Core Web Vitals, and the Performance Checks Webris Runs

Performance auditing got harder once Google rolled Core Web Vitals into rankings via the Page Experience update. Google's Page Experience documentation lists three primary CWV metrics: Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay in March 2024), and Cumulative Layout Shift (CLS). Each one tracks a different part of user experience. And each one fails for different reasons, so the fixes look different too.
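Each metric is rated against published thresholds at the 75th percentile of field data: LCP is good at 2.5 seconds or less and poor above 4 seconds, INP is good at 200 ms or less and poor above 500 ms, and CLS is good at 0.1 or less and poor above 0.25. The sketch below encodes those thresholds. The metric keys and the sample values are hypothetical.

```python
# (good_max, needs_improvement_max) per metric, evaluated at the 75th percentile.
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}

def rate(metric: str, p75: float) -> str:
    """Rate a single p75 value as good, needs improvement, or poor."""
    good_max, ni_max = THRESHOLDS[metric]
    if p75 <= good_max:
        return "good"
    if p75 <= ni_max:
        return "needs improvement"
    return "poor"

def passes_cwv(p75_values: dict[str, float]) -> bool:
    """A page group passes only if every metric is rated good."""
    return all(rate(metric, value) == "good" for metric, value in p75_values.items())

sample = {"lcp_ms": 3100, "inp_ms": 180, "cls": 0.05}  # hypothetical field data
for metric, value in sample.items():
    print(metric, rate(metric, value))
print("Passes:", passes_cwv(sample))
```

In this sample a slow LCP alone is enough to fail the assessment, even though INP and CLS are both in the good range.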

That CWV pass-fail line catches a lot of sites. The HTTP Archive's Core Web Vitals report shows that in recent data, approximately 50% of mobile experiences pass the Core Web Vitals assessment. Roughly half of all websites fail at least one metric, and mobile keeps dragging behind even though mobile-first indexing has been the default for years.

Mobile speed has a direct cost. Google's own mobile page speed research found that as load time goes from 1 second to 7 seconds, the probability of a mobile visitor bouncing increases by 113%. That's not a minor UX nit. It's a conversion and engagement problem, and it snowballs as Google's quality signals start reflecting that behavior.

The Webris performance audit covers:

- LCP diagnosis - identifying whether the delay is caused by server response time, render-blocking resources, resource load time, or element render time, because each has a different fix

- INP analysis - reviewing JavaScript execution patterns, third-party script impact, and interaction handling to identify what's causing delayed responses to user inputs

- CLS investigation - finding layout shift sources including images without dimensions, dynamically injected content, web fonts causing FOIT/FOUT, and iframes with unknown dimensions

- Server response time (TTFB) - baseline infrastructure assessment including hosting quality, CDN configuration, and caching headers

- Resource optimization - image format audit (WebP/AVIF adoption), JavaScript bundle analysis, CSS delivery review

The prioritization layer separates the Webris performance check from a basic PageSpeed Insights run, in the same way their crawl analysis differs from a Screaming Frog export. PageSpeed Insights will tell you to "eliminate render-blocking resources" and "reduce unused JavaScript." It won't tell you which fix is worth 48 hours of developer time and which is worth three. A Webris-style audit groups findings by impact and implementation cost, so dev teams get a work order they can actually run.
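A simple way to approximate that grouping is to rank findings by estimated impact per developer hour. This is a sketch, not Webris's scoring model: the `Finding` structure, the 1-5 impact scale, and every number in the example are assumptions an auditor would replace with their own estimates.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    name: str
    impact: int       # auditor's estimate, 1 (low) to 5 (high)
    dev_hours: float  # estimated implementation time, must be > 0

def work_order(findings: list[Finding]) -> list[Finding]:
    """Sort findings so the highest impact per developer hour comes first."""
    return sorted(findings, key=lambda f: f.impact / f.dev_hours, reverse=True)

findings = [
    Finding("Eliminate render-blocking resources", impact=4, dev_hours=48),
    Finding("Reduce unused JavaScript", impact=3, dev_hours=3),
    Finding("Upgrade hosting plan", impact=5, dev_hours=4),
]
for finding in work_order(findings):
    print(f"{finding.impact / finding.dev_hours:.2f}  {finding.name}")
```

A ratio is a blunt instrument, so treat the ordering as a starting point for discussion with the dev team rather than a final queue.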

Priority changes by environment. For a mid-market SaaS team spending $3k/month on SEO and running on a shared hosting plan with 800ms TTFB, the highest-ROI move is often a hosting upgrade before any code-level optimization. For an enterprise e-commerce site already on a CDN with sub-200ms TTFB, the priority shifts to JavaScript execution patterns and third-party script management. Context drives the queue. That's the value of human-led performance auditing. Semrush's technical SEO audit guide reinforces this point, noting that grouping issues by impact and implementation complexity is what separates a useful audit from a data dump that never gets actioned.

How Webris Translates Audit Findings Into an SEO Growth Roadmap

The audit-to-execution gap kills technical SEO. An agency or consultant delivers a full report. The client's development team reviews it, bumps technical items behind product roadmap work, implements 30% of the recommendations over six months, and then wonders why rankings haven't moved. This isn't hypothetical. It's how audit-only engagements usually end.

That gap is what the Webris framework targets. The audit output isn't just a prioritized issue list. It's an SEO Growth Roadmap that sequences recommendations by impact tier, assigns ownership, and ties each fix to an expected outcome. That's the difference between diagnosis and a treatment plan.

The roadmap structure Webris uses follows a three-tier priority system:

Tier 1 - Crawl and index blockers: Issues that are actively preventing Google from accessing, crawling, or indexing key pages. These get fixed first, regardless of implementation complexity, because nothing else works until Google can see the site correctly.

Tier 2 - Equity and signal optimization: Internal linking fixes, canonicalization corrections, content consolidation, and structured data improvements. These build on the Tier 1 fixes by making sure equity and signals flow to the right pages once crawl access is in place.

Tier 3 - Performance and experience improvements: Core Web Vitals fixes, image optimization, server configuration, and schema markup expansion. These stack on top of Tier 1 and Tier 2 and improve the user experience signals that feed into Google's quality assessment.

That sequencing is the whole point. Fixing Core Web Vitals on pages that aren't being indexed wastes effort. Improving internal linking to pages with canonicalization conflicts pushes equity into a dead end. The Webris roadmap forces the order of operations.

The documented traffic lifts on Webris's services page (+794%, +364%, +440%) reflect this execution model. These aren't audit-only results. They're the output of a complete diagnostic-to-implementation cycle where the agency controls both diagnosis and execution. That's a different value proposition than report-and-handoff, and you should treat it that way when you pick an audit approach.

That said, not every team needs it. For businesses that already have strong in-house development and SEO capability, the Webris model may be more than they need. But for organisations where the audit-to-execution gap has repeatedly stalled SEO progress, the integrated approach earns its premium. Teams looking to outsource SEO effectively should weigh this execution model carefully before committing to any partner.

Webris Technical SEO Audit vs Competing Audit Methodologies: A Direct Comparison

No current content ranking for this keyword does a direct named comparison of audit methodologies. That's a gap we're filling here, because the decision between audit approaches is one of the most practically important choices an SEO manager or marketing director makes.

| Methodology | Who It's For | Prioritization Quality | Execution Support | Typical Cost Range |
|---|---|---|---|---|
| Webris full-service audit | Law firms, B2B, mid-market | High (human-led) | Full managed execution | $2,500-$5,000+ per month on a retainer |
| Screaming Frog DIY | In-house teams with SEO expertise | Low (manual triage required) | None | $259/year licence |
| Semrush Site Audit | Teams wanting automated scoring | Medium (generic scoring) | None | $130-$500/month platform |
| Boutique agency audit | SMBs wanting expert analysis | High (human-led) | Report + recommendations | $1,500-$4,000 one-time |
| Rhino Rank technical + link building | Agencies and in-house teams | High (integrated approach) | Link building execution | Varies by scope |

Screaming Frog DIY is the right choice if you have a trained SEO on staff who can triage the data, write remediation specs, and run developer implementation. The tool itself is excellent. But it doesn't come with judgment. If that judgment already sits in-house, DIY is the cleanest cost play.

Semrush Site Audit works as a monitoring layer and for fast health checks. It doesn't replace a real audit. The scoring system rewards easy wins like meta description length and image alt text, and it doesn't weight issues by their ranking impact. An 85/100 Semrush score can still sit on top of crawl budget waste or index quality problems that the tool won't surface cleanly.

Boutique agency audits sit in the middle. A specialist boutique (or a similar independent agency) can produce sharp analysis, and the industry context is often stronger than what you get from a generalist shop. That value comes with a constraint: handoff. You get the report. Your team owns the backlog, dev tickets, QA, and follow-through.

The Webris model makes sense when the same team needs to own diagnosis and execution, and you're competing in a vertical where the audit kicks off a 12-24 month growth programme rather than closing out a one-time project.

Where Rhino Rank fits into this picture needs plain language. Our strength is the off-page layer that follows a solid technical foundation. Once a Webris audit (or any thorough technical audit) fixes crawl, index, and site architecture issues, the next growth lever is authority through links. A clean site - strong internal linking, healthy indexation, no major crawl friction - will respond to a structured link building campaign in a way a technically broken site won't. Technical SEO and link building don't compete. They run in sequence.

What a Webris Technical SEO Audit Actually Costs and What You Get

Webris doesn't publish a standard pricing page for standalone audits, which matches their managed service model. Based on publicly available information and industry benchmarks, their engagements run on monthly retainers rather than one-time audit fees. The audit functions as onboarding for an ongoing relationship, not a standalone deliverable you buy and file away.

That retainer model matters for budgeting. For context on Webris pricing, managed SEO retainers in competitive verticals like law firm SEO usually start around $2,500-$5,000 per month and scale with competitiveness and scope. That's in line with what other execution-first agencies charge for similar work. For a broader view of what SEO services cost across different models, our link building cost guide covers the full pricing landscape.

What you get for that spend, based on the Webris services page and the documented methodology:

- Full technical audit coverage across crawlability, indexation, internal linking, on-page signals, and Core Web Vitals

- A prioritized SEO Growth Roadmap with sequenced recommendations and expected outcomes

- Implementation managed by the Webris team, rather than being tossed to your developers as a PDF

- Content strategy tied back to what the audit finds

- Digital PR and link building as part of the broader growth programme

- Reporting tied to traffic and conversion metrics, not vanity metrics

This model fits teams that want one partner to run the whole engine. If you already have the in-house SEO lead and dev capacity, paying for managed execution is usually the wrong spend. In that case, a one-time technical audit and roadmap from a boutique agency or specialist consultant in the $1,500-$4,000 range is the better fit.

The Webris model is built for clients who want to hire their last SEO agency, not their next audit vendor.

How to Run Your Own Technical SEO Audit Using the Webris Framework

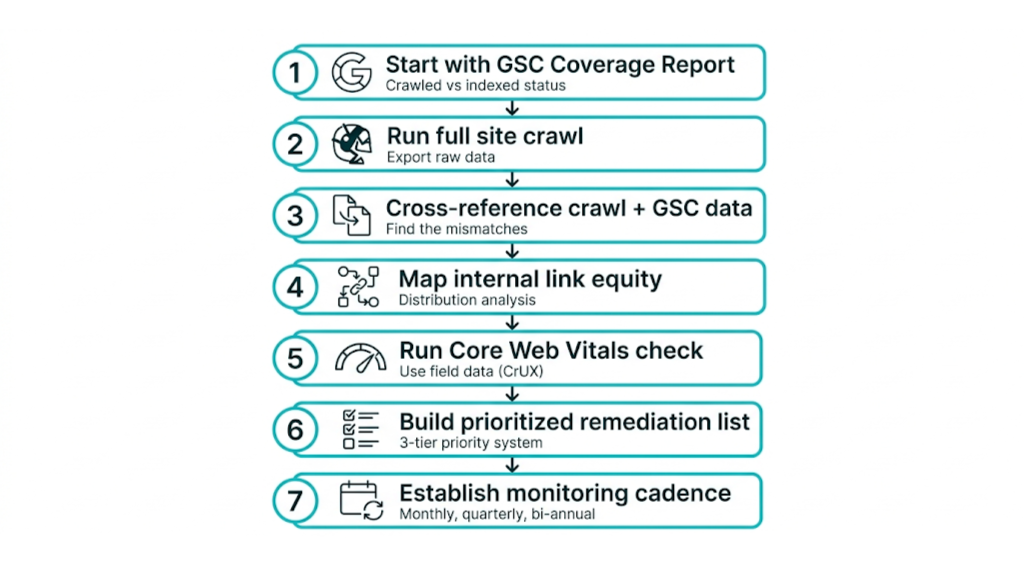

If you want to apply the core principles of the Webris technical SEO audit methodology to your own site, the framework is reproducible as long as you use the right tools in the right order. This isn't a shortcut to a professional audit. It is a repeatable process that surfaces the highest-impact issues on most sites.

Step 1: Start with Google Search Console data, not a crawl tool.

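Pull the Coverage report (labelled Page indexing in the current GSC interface) and segment URLs by status. Older versions of the report use four groups: Valid, Valid with warnings, Excluded, and Error. The current report collapses these into Indexed and Not indexed, with a reason attached to each excluded URL. Start with "Crawled - currently not indexed" and "Discovered - currently not indexed". Those two buckets show which URLs Google knows about but hasn't indexed, and whether it got as far as crawling them. Nail down the failure mode before you run a single crawl. You'll save hours.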

Step 2: Run a full Screaming Frog crawl and export the raw data.

Configure Screaming Frog to render JavaScript if your site uses client-side rendering, follow all redirects, and include response codes. Export the full URL list with status codes, indexability status, canonical URLs, and internal link counts. This export becomes your working dataset. Keep it raw.

Step 3: Cross-reference crawl data with GSC data.

That working dataset is only useful once it sits next to Google Search Console. If you're new to the platform, our guide on using Google Search Console covers the key reports you'll need. Merge the Screaming Frog export with your GSC URL data and flag the mismatches that matter:

- Pages that are crawlable and indexed but have generated zero clicks over 12+ months.

- Indexed pages that aren't in your sitemap. Usually a sitemap hygiene issue, sometimes a sign of thin or legacy URLs hanging around.

- Sitemap URLs returning non-200 status codes.

- Canonical tags pointing to different URLs than GSC is indexing.
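The four mismatch checks above reduce to a few set and dictionary comparisons once both exports are loaded. The sketch below assumes hypothetical in-memory structures: `crawl` from the Screaming Frog export, `gsc_indexed` mapping each indexed URL to the canonical Google selected, `sitemap` as a set of URLs, and `clicks_12m` as clicks per URL. None of these names come from either tool.

```python
def cross_reference(crawl, gsc_indexed, sitemap, clicks_12m):
    """Flag mismatches between crawl data, GSC data, and the sitemap."""
    return {
        # Indexed by Google, but no clicks recorded in the period.
        "indexed_zero_clicks": sorted(
            url for url in gsc_indexed if clicks_12m.get(url, 0) == 0
        ),
        "indexed_not_in_sitemap": sorted(set(gsc_indexed) - sitemap),
        # Sitemap URLs that returned a non-200 status or were never reached.
        "sitemap_non_200": sorted(
            url for url in sitemap if crawl.get(url, {}).get("status") != 200
        ),
        # Declared canonical differs from the one Google selected.
        "canonical_mismatch": sorted(
            url for url, google_canonical in gsc_indexed.items()
            if url in crawl and crawl[url]["canonical"] != google_canonical
        ),
    }

crawl = {
    "/services/": {"status": 200, "canonical": "/services/"},
    "/old-offer/": {"status": 301, "canonical": "/old-offer/"},
    "/blog/a/": {"status": 200, "canonical": "/blog/a/"},
    "/blog/a/?ref=nav": {"status": 200, "canonical": "/blog/a/?ref=nav"},
}
gsc_indexed = {
    "/services/": "/services/",
    "/blog/a/": "/blog/a/",
    "/blog/a/?ref=nav": "/blog/a/",
}
sitemap = {"/services/", "/old-offer/", "/blog/a/"}
clicks_12m = {"/services/": 420, "/blog/a/": 0}

report = cross_reference(crawl, gsc_indexed, sitemap, clicks_12m)
for check, flagged in report.items():
    print(check, flagged)
```

On a real site the same logic is usually easier to run in a spreadsheet or a dataframe, but the comparisons are the same.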

Step 4: Map internal link equity distribution.

Those mismatches often trace back to internal linking. Using Screaming Frog's inlinks report, pull your top 20 pages by inbound internal link count. Compare that list against your priority commercial and informational targets.

If your most internally linked page is your privacy policy, you've got a structural problem. Fix the structure, not the symptoms.

Build a simple spreadsheet that maps each priority page to its current internal link count and its target link count. Make the target count a real number you can execute against, not a vague "needs more links."
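If you'd rather script that spreadsheet, the calculation is a count of unique linking pages per target and a subtraction. A minimal sketch, where `links` is a hypothetical list of `(source, target)` pairs from an inlinks export and the target counts are placeholders you would set yourself:

```python
from collections import Counter

def inlink_counts(links):
    """Count unique linking pages per target, ignoring self-links."""
    return Counter(target for source, target in set(links) if source != target)

def link_gaps(counts, targets):
    """Return how many more internal links each priority page needs."""
    return {url: max(goal - counts.get(url, 0), 0) for url, goal in targets.items()}

links = [
    ("/", "/privacy/"),
    ("/blog/a/", "/privacy/"),
    ("/blog/b/", "/privacy/"),
    ("/", "/services/injury/"),
]
targets = {"/services/injury/": 10}  # hypothetical target inlink count

counts = inlink_counts(links)
print("Most linked:", counts.most_common(1))
print("Gaps:", link_gaps(counts, targets))
```

Here the privacy policy is the most linked page, which is exactly the structural warning sign described above.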

Step 5: Run Core Web Vitals assessment using field data.

Field data is the part Google uses. The Lighthouse section of PageSpeed Insights gives you lab data, which helps with debugging, but it isn't the source of your Core Web Vitals pass/fail.

Use the Core Web Vitals report in Google Search Console, which draws on the Chrome User Experience Report (CrUX), to review real-world field data for your URLs. Identify pages failing LCP, INP, or CLS thresholds. Once you have the failing URLs, go back to PageSpeed Insights to isolate the contributing factors on those templates. Template-level fixes beat one-off page tweaks every time.
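To find which templates to fix first, group the failing URLs by template and count them. The sketch below uses the first path segment as a stand-in for the template, which is an assumption that holds for many CMS setups but not all. The URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def template_of(url: str) -> str:
    """Approximate the page template by the first path segment."""
    segments = [part for part in urlparse(url).path.split("/") if part]
    return f"/{segments[0]}/" if segments else "/"

def failing_templates(failing_urls):
    """Return (template, count) pairs, most affected template first."""
    return Counter(template_of(url) for url in failing_urls).most_common()

failing_urls = [
    "https://example.com/blog/a/",
    "https://example.com/blog/b/",
    "https://example.com/practice-areas/injury/",
    "https://example.com/",
]
for template, count in failing_templates(failing_urls):
    print(template, count)
```

If one template accounts for most failures, a single fix to that template's code is likely to clear many URLs at once.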

Step 6: Build your prioritized remediation list.

Your remediation list should read like an engineering work order. Apply the three-tier priority system:

- Tier 1: crawl access and indexation blockers.

- Tier 2: internal linking and canonicalization fixes.

- Tier 3: performance improvements.

Assign each item an estimated implementation time and an expected impact rating - high, medium, or low. This is your website audit checklist turned into a build plan that a dev team can actually run. Our technical SEO checklist covers the full range of items to include at each stage.
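As a rough illustration, the tiered list can be kept as structured data and sorted by tier first, then impact, then quickest fix. The field names and sample tasks below are hypothetical, and the tie-breaking order is one reasonable choice rather than a fixed rule.

```python
IMPACT_ORDER = {"high": 0, "medium": 1, "low": 2}

def remediation_list(items):
    """Order work items by tier, then impact rating, then shortest estimate."""
    return sorted(
        items,
        key=lambda item: (item["tier"], IMPACT_ORDER[item["impact"]], item["hours"]),
    )

items = [
    {"task": "Compress hero images", "tier": 3, "impact": "medium", "hours": 4},
    {"task": "Remove robots.txt block on /services/", "tier": 1, "impact": "high", "hours": 1},
    {"task": "Fix canonical conflicts on location pages", "tier": 2, "impact": "high", "hours": 6},
    {"task": "Add missing sitemap entries", "tier": 1, "impact": "medium", "hours": 2},
]
for item in remediation_list(items):
    print(f"Tier {item['tier']} | {item['impact']:<6} | {item['hours']}h | {item['task']}")
```

Sorting on tier before impact enforces the order of operations: a high-impact Tier 3 item still waits behind every Tier 1 blocker.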

Step 7: Establish a monitoring cadence.

A build plan only holds if you keep checking it. Technical SEO isn't a one-time event. Sites change. New content ships, plugins update, redirects break, and canonicalization errors creep back in.

Set a monthly GSC coverage review, a quarterly Screaming Frog crawl, and a bi-annual deep audit. Use an SEO audit template to standardise what you check each cycle so nothing falls through the gaps.
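Part of each cycle can be automated by comparing the latest crawl against the previous one. The sketch below assumes each crawl has been reduced to a hypothetical mapping of URL to `(status_code, canonical)`. It reports URLs that changed or disappeared, which is where broken redirects and canonical drift tend to show up.

```python
def crawl_regressions(previous, current):
    """Compare two crawl snapshots and describe what changed per URL."""
    changes = {}
    for url, before in previous.items():
        after = current.get(url)
        if after is None:
            changes[url] = "missing from latest crawl"
        elif after != before:
            changes[url] = f"{before} -> {after}"
    return changes

previous = {
    "/a/": (200, "/a/"),
    "/b/": (200, "/b/"),
    "/c/": (200, "/c/"),
}
current = {
    "/a/": (200, "/a/"),
    "/b/": (404, "/b/"),
}
for url, change in crawl_regressions(previous, current).items():
    print(url, change)
```

New URLs that appear only in the latest crawl are ignored here. They would need their own check if you also want to catch unplanned index growth.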

That same cadence gets harder to hold when you're managing multiple clients. For those teams, a local SEO audit template that adds Google Business Profile (formerly GMB) signal checks, NAP consistency audits, and local citation coverage to the core technical framework belongs in a separate workflow. The technical foundations are the same. Local signals demand extra inputs.

Once the foundation is solid - crawl access clean, index quality high, internal linking structured, Core Web Vitals passing - the next growth lever is authoritative link acquisition. This is where Rhino Rank's curated link building integrates cleanly with the technical work you've done. A site that passes the technical audit framework above is ready to turn link equity into rankings. A site that hasn't done this work will see diminishing returns, even with a strong link profile.

Frequently Asked Questions About Webris Technical SEO Audits

What is a Webris technical SEO audit and what does it include?

A Webris technical SEO audit is a diagnostic pass across crawlability, indexation quality, internal linking architecture, on-page technical signals, and Core Web Vitals. It isn't sold as a one-off deliverable. It's the first step in Webris's managed SEO service.

The output is a prioritized SEO Growth Roadmap. That roadmap sequences fixes by impact tier and moves straight into the agency's implementation workflow, so the audit doesn't sit on a shelf.

This setup fits businesses in competitive verticals, especially law firms and professional services, that want a full-service partner rather than a report-and-handoff engagement.

How does the Webris technical SEO audit framework differ from automated tool reports like Semrush or Ahrefs?

The difference is prioritization. Tools like Semrush Site Audit spit out long issue lists scored by a generic algorithm, and those scores don't reflect your site context, your competitors, or what the business needs first.

Webris applies human judgment to triage. That means separating crawled-but-not-indexed from not-crawled-at-all, grouping indexed URLs by real traffic contribution, and ordering fixes by ranking impact instead of tool severity.

Those tool reports still matter. They collect data. The Webris audit uses that data to diagnose and plan.

What are the most important components of a technical SEO audit?

The highest-impact components, in typical priority order, are: crawlability and indexation diagnosis (including the key distinction between pages that aren't crawled and pages that are crawled but not indexed), internal linking architecture (equity distribution and anchor text quality), index quality analysis (identifying and consolidating low-value indexed URLs), canonicalization hygiene, and Core Web Vitals performance.

Internal linking and index quality drive rankings more than most audits admit. A lot of audits still treat them like secondary checks, then over-focus on long error lists that don't move the needle.

How much does a Webris technical SEO audit cost?

Webris doesn't offer standalone audit pricing. The audit sits inside a monthly retainer, and it's used to onboard and kick off an ongoing managed SEO engagement.

For comparable full-service agencies working in competitive verticals, retainers usually start around $2,500-$5,000 per month based on industry benchmarks.

If you only need the audit and roadmap without ongoing execution, a boutique agency audit priced around $1,500-$4,000 as a one-time project will fit better.

How often should a technical SEO audit be performed?

Run a full technical audit at least once per year. Between those annual audits, stick to quarterly crawl health checks and monthly Google Search Console coverage reviews.

High-change sites need a tighter cadence. If you're publishing frequently, managing a large e-commerce catalogue, or coming off a CMS migration or site restructure, quarterly full audits make sense.

Core Web Vitals is its own moving target. Monitor field data continuously in GSC's Core Web Vitals report, because regressions can show up after any site update or third-party script change.
