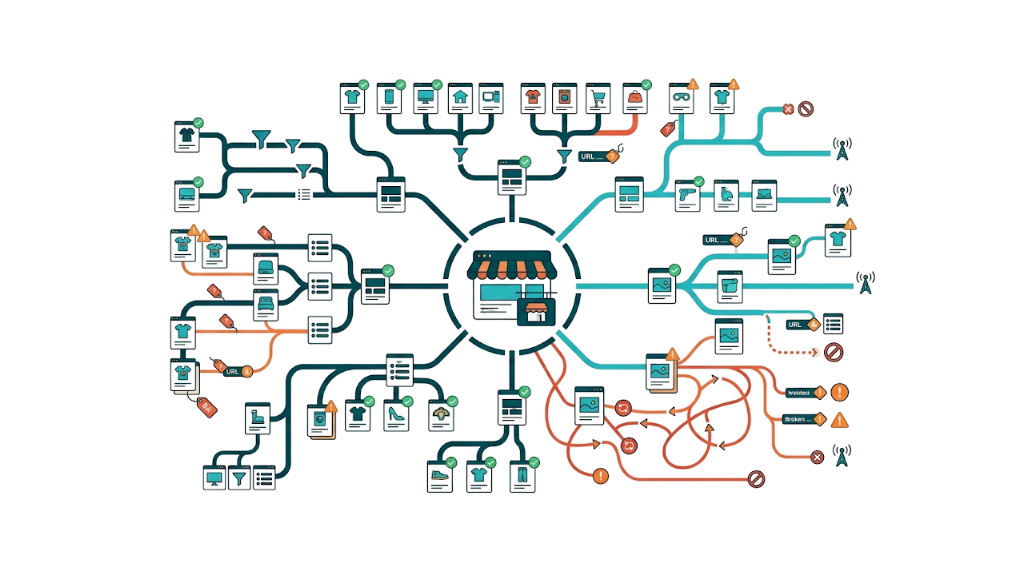

Ecommerce is not a forgiving environment for technical debt. A mid-sized online store with 10,000 SKUs, multiple product variants, and layered filter navigation creates more crawl and indexation complexity than most content sites ten times its size. Google's crawler has a finite appetite for any given domain, and once that appetite gets burned on duplicate filter URLs, paginated category pages, and parameter-bloated product variants, your revenue pages stop getting indexed.

This isn't theoretical. It's the day-to-day reality for ecommerce SEO managers working on stores built on Shopify, WooCommerce, or Magento.

The bottom line: Ecommerce technical SEO needs a different playbook than standard site optimization. The core challenges - crawl budget management, faceted navigation control, product page lifecycle decisions, structured data at scale, and platform-specific limitations - decide which pages rank, how fast they load, and how much organic revenue the store brings in. This guide covers every major technical lever, ties each one to a business outcome, and gives you a practical framework for auditing and fixing the issues that move the needle. If you're an in-house SEO manager, a marketing director managing an agency, or an ecommerce SEO consultant advising multiple clients, this is the reference we keep coming back to.

US ecommerce revenue is projected to reach $2.1 trillion, according to industry analysis of the sector. The brands that take outsized organic share won't do it on content and backlinks alone. They'll win because their technical foundations let Google crawl, index, and rank product pages without wasting time on junk URLs. That's the advantage this guide helps you build.

Why Ecommerce Technical SEO Is a Different Beast to Standard Site Optimization

Standard technical SEO guidance - the kind found in Google's SEO Starter Guide - treats websites like fairly static collections of pages with clean hierarchies and controlled URL counts. That framing collapses the moment you apply it to a real ecommerce catalog.

An online store with 5,000 products, each available in four colours and three sizes, doesn't have 5,000 pages to manage. It has a potential URL surface area in the hundreds of thousands once you factor in filter combinations, sorting parameters, pagination, and session-based URL variations. Every one of those URLs forces a call: should Google crawl it, should it index it, and does the setup consolidate equity to the right canonical? Most ecommerce platforms ship with weak defaults on all three.

Commercial stakes raise the cost of every mistake. A blog with duplicate content gives up some ranking potential. An ecommerce store with duplicate content across product variants loses rankings on pages that print money. When a category page for "women's running shoes" can't rank because crawl budget got burned on 400 filter URLs nobody searches for, the impact shows up in transactions, not just traffic.

The technical SEO challenges unique to ecommerce break into several categories that standard guides don't address:

- Faceted navigation: Filter systems blow up URL volume and can eat an entire crawl budget.

- Product page lifecycle: SKUs go out of stock, get discontinued, or get replaced. Each case needs its own technical response.

- Variant duplication: Colour, size, and material variants often produce near-identical pages that compete against each other.

- Structured data at scale: Product schema across thousands of pages needs a process. Manual tagging doesn't hold up.

- Platform constraints: Shopify's forced URL structure causes trade-offs. WooCommerce tag pages can get indexed. Magento layered navigation brings its own technical debt that generic guides skip.

Amazon's internal research, widely cited across the industry, found that every 100ms of additional page latency cost 1% in sales. For a store doing £500,000 in monthly organic revenue, that rate puts a 200ms slowdown at roughly £10,000 a month. That isn't a Core Web Vitals warning, it's a revenue problem, and it's the lens for every technical decision in this guide.
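A quick way to sanity-check figures like this is to treat the 100ms-to-1% rule as linear. That's a simplifying assumption: the original finding doesn't promise the relationship holds at larger delays. Under it, £10,000 a month on £500,000 of revenue corresponds to about 200ms of added latency, and a full two seconds would imply a 20% loss. The function name and parameters below are illustrative.

```python
def revenue_at_risk(monthly_revenue: float, added_latency_ms: float,
                    loss_per_100ms: float = 0.01) -> float:
    """Estimate monthly revenue lost to extra page latency.

    Assumes the "100ms costs 1% of sales" rule scales linearly, which is
    a rough planning heuristic rather than a measured law for your store.
    """
    loss_fraction = (added_latency_ms / 100) * loss_per_100ms
    # A linear rule can't lose more than all of the revenue.
    loss_fraction = min(loss_fraction, 1.0)
    return monthly_revenue * loss_fraction


print(revenue_at_risk(500_000, 200))    # 10000.0
print(revenue_at_risk(500_000, 2_000))  # 20% of revenue under the same assumption
```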

Link equity makes the gap wider. Ecommerce stores pick up backlinks to product pages, category pages, and brand pages over years. Poor technical choices - 302 redirects instead of 301s, canonical tags pointing at the wrong variant, discontinued pages returning 404s instead of redirecting - drain that equity in silence. Getting it back takes technical fixes and, in many cases, deliberate link building to push authority back into the commercial pages that matter.

Site Architecture: How to Structure an Ecommerce Store for Crawlers and Customers

Good ecommerce site architecture has to work for two groups at once: shoppers moving from the homepage to a product page, and search engine crawlers mapping topical relevance and how internal link equity flows. Miss either one and the other usually suffers too.

Start with a shallow hierarchy. Every product page needs to be reachable within three clicks from the homepage. That's not just a user experience preference - it's crawl efficiency. Pages buried five or six levels deep get less internal PageRank, see less frequent crawling, and struggle to compete in the SERPs. On bigger catalogs, that three-click target only happens with intentional category design, not the default taxonomy most ecommerce platforms spit out.

A well-structured ecommerce hierarchy looks like this:

Homepage

├── Category (e.g., /running-shoes/)

│ ├── Subcategory (e.g., /running-shoes/womens/)

│ │ └── Product (e.g., /running-shoes/womens/asics-gel-kayano-30/)

├── Brand (e.g., /brands/asics/)

└── Blog (e.g., /blog/how-to-choose-running-shoes/)

Internal linking needs to back up that hierarchy. Category pages should link down to subcategories and featured products. Product pages should link up to the parent category and across to related products where it makes sense. Breadcrumb navigation, implemented with BreadcrumbList schema, pulls double duty: navigation for humans and structured data for search engines. The same internal link building principles that reinforce site architecture apply directly to ecommerce category hierarchies.

Silo structure matters for topical authority. If a store sells sports equipment, keep running gear, cycling gear, and gym equipment in distinct silos. Let internal links flow mainly within each silo instead of constantly crossing between them. Google reads that structure as a cleaner relevance signal, and you keep link equity concentrated on the pages that drive revenue.

Very large catalogs change the math. For a fashion retailer with 50,000+ active SKUs, a perfectly flat architecture won't hold up without crawl waste creeping in. The practical approach is tiered: keep the top-performing categories and subcategories shallow, then push depth where you have to, with the highest-revenue product pages sitting at the shallowest possible level within their category tree.
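Checking the three-click target across a large catalog is a breadth-first search over the internal link graph. The sketch below assumes you've exported internal links (for example from a crawler) into a dict mapping each URL path to the paths it links to; the function names are hypothetical. Pages that never appear in the result aren't reachable from the homepage at all.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Minimum clicks from `start` to every reachable page (breadth-first search)."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            # BFS visits pages in order of distance, so the first time we see
            # a page is via its shortest click path.
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def deeper_than(depths: dict[str, int], max_clicks: int = 3) -> list[str]:
    """Pages that miss the three-click target."""
    return sorted(url for url, d in depths.items() if d > max_clicks)


links = {
    "/": ["/running-shoes/", "/brands/asics/"],
    "/running-shoes/": ["/running-shoes/womens/"],
    "/running-shoes/womens/": ["/running-shoes/womens/asics-gel-kayano-30/"],
}
print(deeper_than(click_depths(links)))
```

Running this against the full link export, then sorting the output by revenue, gives a direct worklist for the tiered approach: the deepest high-earning products are the ones to pull up.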

Crawl Budget Management for Large Product Catalogs

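Google allocates crawl resources to every site based on how much load its server can handle and how much Google wants to crawl its content. Google's own crawl budget documentation is aimed at large sites: roughly a million or more unique pages, or 10,000+ pages whose content changes daily. Ecommerce stores reach that scale faster than their product count suggests, because filter, sort, and variant URLs multiply every product and category page.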

Crawl budget has two components: crawl rate limit (how fast Googlebot crawls without stressing the server) and crawl demand (how much Google wants to crawl based on popularity and freshness). Most ecommerce teams obsess over rate limit and ignore demand. That gap shows up as Googlebot spending time where it shouldn't.

The practical priorities for managing crawl budget on a large ecommerce catalog:

- Block low-value URLs in robots.txt: Internal search result pages, cart and checkout pages, account pages, and wishlist URLs should all be disallowed. They don't rank, and they burn crawl budget that belongs on product and category pages.

- Handle paginated category pages deliberately: Google stopped using rel="next" and rel="prev" as an indexing signal in 2019, though other search engines may still read them. Give each paginated page a self-referencing canonical and plain crawlable links to the next page so Googlebot can reach products beyond page one.

- Audit your XML sitemap: Only include indexable, canonical URLs. If the sitemap is full of noindex pages, redirect chains, or duplicates, it signals sloppy site hygiene and wastes crawl allocation.

- Monitor crawl stats in Google Search Console: The Crawl Stats report shows where Googlebot spends time. If 40% of crawl time goes to filter URLs, then 40% of your budget produces zero ranking value.
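The disallow rules above can be sanity-checked with Python's standard-library urllib.robotparser. The paths are hypothetical placeholders for your platform's real search, cart, checkout, account, and wishlist URLs. One caveat: robotparser matches rules by simple prefix in file order and ignores the * and $ wildcards Googlebot supports, so treat it as a quick check, not a Googlebot emulator. Prefix rules also catch more than you might expect: Disallow: /cart would block /cartoon-socks/ too.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical paths: replace with your platform's real low-value URLs.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
Disallow: /checkout
Disallow: /account
Disallow: /wishlist
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in (
    "https://www.example.com/search?q=trainers",
    "https://www.example.com/cart",
    "https://www.example.com/running-shoes/womens/",
):
    # False means Googlebot is disallowed from fetching the URL.
    print(url, parser.can_fetch("Googlebot", url))
```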

A practical benchmark: on a site with 20,000 indexable product and category pages, Googlebot should spend at least 80% of crawl time on those pages - not on parameter variants, internal search results, or duplicate content. Hitting that ratio takes ongoing robots.txt work and tight canonical discipline, not a one-and-done sitemap submission.
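Measuring that ratio means going to server logs. A minimal sketch, assuming you've already filtered the logs to verified Googlebot requests and have the set of indexable product and category paths; both inputs and the function name are hypothetical here.

```python
from urllib.parse import urlsplit


def priority_crawl_share(googlebot_urls: list[str], priority_paths: set[str]) -> float:
    """Share of Googlebot requests that landed on indexable priority pages.

    A URL with any query string counts as a separate (non-priority) URL,
    so "/running-shoes/?sort=price_asc" does not count as "/running-shoes/".
    """
    if not googlebot_urls:
        return 0.0
    hits = 0
    for url in googlebot_urls:
        parts = urlsplit(url)
        if not parts.query and parts.path in priority_paths:
            hits += 1
    return hits / len(googlebot_urls)


requests = [
    "/running-shoes/",
    "/running-shoes/?sort=price_asc",
    "/running-shoes/womens/asics-gel-kayano-30/",
    "/search?q=socks",
]
priority = {"/running-shoes/", "/running-shoes/womens/asics-gel-kayano-30/"}
print(f"{priority_crawl_share(requests, priority):.0%} of crawl on priority pages")  # 50% of crawl on priority pages
```

Tracking this number month over month shows whether robots.txt and canonical changes are actually moving crawl toward the 80% target.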

URL Structure and Parameter Handling: Stopping Faceted Navigation From Cannibalizing Your Crawl

Faceted navigation - the filter systems that let shoppers narrow results by size, colour, brand, price, and rating - is one of the strongest UX features in ecommerce. Left unmanaged, it turns into a technical SEO problem fast. Ahrefs' breakdown of ecommerce product page SEO highlights parameter handling as one of the most impactful levers available to ecommerce teams working at scale.

A category page for "men's trainers" with 12 filter dimensions (brand, size, colour, width, surface, feature, price range, rating, availability, material, technology, and collection) can generate millions of URL combinations. Even a modest filter system with five dimensions and five options each produces 3,125 URLs from a single category page, counting only the states where all five filters are set; let shoppers leave filters empty and the count rises further. Google will try to crawl a lot of them, and it won't pick the ones you want.
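The 3,125 figure is a product of option counts under one specific assumption: all five filters set, one value each. A small helper makes that assumption explicit and shows how fast the count grows once filters can be left unset (the function name is illustrative):

```python
from math import prod


def filter_url_count(options_per_dimension: list[int], allow_unset: bool = True) -> int:
    """Count filtered URL states for a single category page.

    allow_unset=False: every dimension has exactly one value selected.
    allow_unset=True: each dimension may also be left empty; the unfiltered
    base category page itself is not counted.
    Single-select filters only; multi-select filters and parameter-order
    variants (?colour=red&size=10 vs ?size=10&colour=red) push counts higher.
    """
    if allow_unset:
        return prod(n + 1 for n in options_per_dimension) - 1
    return prod(options_per_dimension)


print(filter_url_count([5] * 5, allow_unset=False))  # 3125
print(filter_url_count([5] * 5))                     # 7775
```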

The ecommerce URL structure decision comes down to four approaches:

| Approach | Mechanism | Best For | Risk |
|---|---|---|---|
| Parameter blocking | robots.txt disallow rules for parameter patterns | Quick wins on parameter-heavy sites | Broad patterns can block legitimate paginated URLs too |
| Canonical tags | Filtered URLs canonicalise to the base category | Preserving link equity from filtered pages | Googlebot may ignore canonicals if pages differ too much |
| JavaScript rendering | Filters applied client-side, no URL change | Cleanest crawl profile | Adds JS dependency, can break crawl entirely |
| Dedicated filtered pages | Specific high-value filter combos get indexable URLs | Capturing long-tail filter searches | Requires careful selection to avoid proliferation |

Those approaches aren't mutually exclusive. The usual parameter handling framework is a hybrid: block parameters that create pure sorting or display variants (e.g., ?sort=price_asc, ?view=grid), canonicalise filter combinations that generate duplicate or near-duplicate content, and only allow indexation for filter combinations with real search demand.

Search demand is the line in the sand.

That third bucket - indexable filtered pages - is where long-tail revenue sits. A page like /running-shoes/womens/waterproof/ can rank for "waterproof women's running shoes" if it's indexed, has unique content, and earns links. Understanding what makes a good backlink profile helps you decide which filtered pages are worth building authority toward.

The implementation process for a Shopify or WooCommerce store:

- Audit current parameter URLs with a Screaming Frog crawl that includes parameterised URLs, and identify which parameter types generate the most URL variants

- Check Google Search Console for which parameter URLs are being indexed and whether they're generating impressions

- Classify parameters into three buckets: block (no value, high volume), canonicalise (some value, moderate volume), and allow (genuine search demand, low volume)

- Skip Google Search Console's URL Parameters tool, which Google retired in 2022, and treat canonical tags and robots.txt as the primary controls

- Monitor crawl stats monthly for the first three months post-implementation to verify crawl budget is redistributing to priority pages
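The three-bucket classification can be written down as a small rule so it's applied the same way to every parameter type. The field names and both thresholds below are hypothetical placeholders to tune per store, not recommended values; url_count would come from the crawl and impressions from Search Console.

```python
from dataclasses import dataclass


@dataclass
class ParamStats:
    name: str           # e.g. "sort", "colour"
    url_count: int      # distinct crawled URLs carrying this parameter
    impressions: int    # Search Console impressions for those URLs
    display_only: bool  # True if it only re-sorts or re-styles the listing


def classify(p: ParamStats, demand: int = 500, high_volume: int = 10_000) -> str:
    """Return "block", "canonicalise", or "allow" (placeholder thresholds)."""
    if p.display_only:
        return "block"          # sorting/view variants never deserve indexation
    if p.impressions >= demand and p.url_count < high_volume:
        return "allow"          # genuine search demand, manageable volume
    if p.impressions < demand and p.url_count >= high_volume:
        return "block"          # no value, high volume
    return "canonicalise"       # everything in between
```

Before acting on a "block" result, the backlink check described below still applies: a blocked URL can't pass its canonical signal.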

Crawl budget only improves if Google stops wasting time on junk URLs and starts spending it on money pages.

One risk teams miss: if your filtered pages have picked up backlinks - which happens when users share or bookmark filter URLs - blocking or canonicalising them without proper redirect handling drops that link equity. Check inbound links to filter URLs before you roll out blanket blocks. Then decide, URL by URL, whether that equity should flow via a redirect, a canonical, or an indexable page.

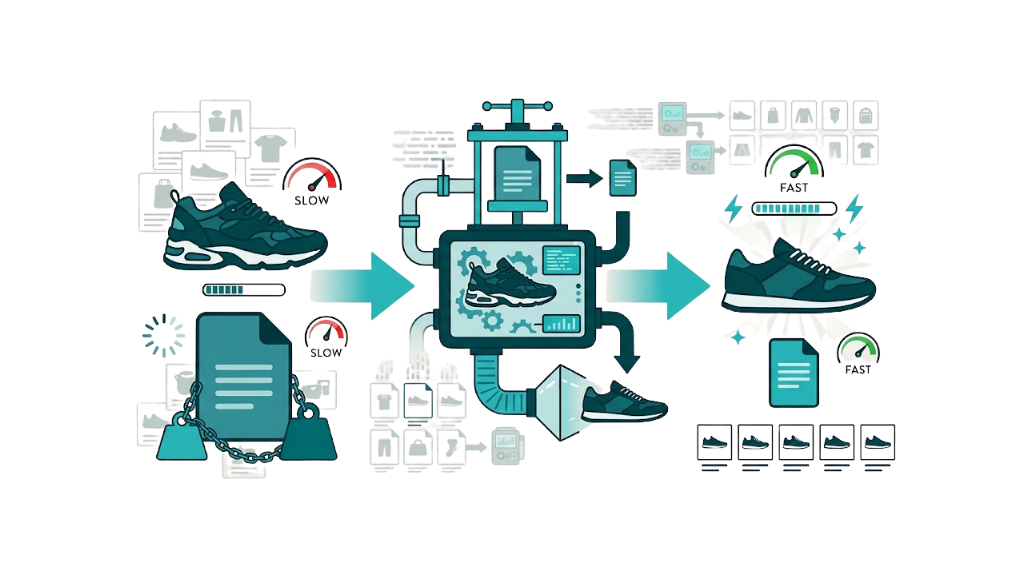

Page Speed and Core Web Vitals: The Direct Line Between Load Time and Lost Revenue

Amazon's research that 100ms of latency costs 1% in sales gets quoted so often it's basically industry folklore, but the principle holds up across independent studies. Google's own data shows that as page load time rises from one second to five seconds, the probability of a mobile user bouncing rises by 90%. For an ecommerce store where the product page is the conversion destination, that bounce jump turns into lost revenue.

Lost revenue is the part that focuses teams.

Core Web Vitals became a confirmed Google ranking signal in 2021, and the metric set changed in March 2024 when Interaction to Next Paint (INP) replaced First Input Delay (FID) as the interactivity metric. FID measured only the delay before the first interaction was handled; INP observes every click, tap, and key press during a visit and reports a value close to the slowest one. That raises the bar for ecommerce product pages, where add-to-cart, image galleries, size selectors, and reviews all need to respond cleanly.

The three Core Web Vitals metrics and their thresholds for ecommerce pages:

| Metric | Good | Needs Improvement | Poor | Ecommerce Impact |
|---|---|---|---|---|
| Largest Contentful Paint (LCP) | Under 2.5s | 2.5s - 4s | Over 4s | Product image loading speed |
| Cumulative Layout Shift (CLS) | Under 0.1 | 0.1 - 0.25 | Over 0.25 | Page jumping as images/ads load |
| Interaction to Next Paint (INP) | Under 200ms | 200ms - 500ms | Over 500ms | Add-to-cart, filter, gallery responsiveness |
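Google assesses each metric at the 75th percentile of real page loads, so a quick triage script needs both the percentile and the thresholds from the table. A sketch using only the standard library; the inclusive quantile method is one reasonable choice, and real tooling (CrUX, your RUM provider) may compute percentiles slightly differently. Names are illustrative.

```python
from statistics import quantiles

# (upper bound of "good", upper bound of "needs improvement") from the table.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
    "INP": (200, 500),   # milliseconds
}


def p75(samples: list[float]) -> float:
    """75th percentile of field samples (needs at least two samples)."""
    return quantiles(samples, n=4, method="inclusive")[2]


def rate(metric: str, value: float) -> str:
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


lcp_samples = [1.8, 2.1, 2.4, 3.2, 5.0]  # seconds, from field data
print(rate("LCP", p75(lcp_samples)))     # needs improvement
```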

For most ecommerce stores, LCP is the main fight. The largest contentful element on a product page is almost always the hero image, and that image is often unoptimised, served from a slow origin, and not preloaded. Fix LCP at the template level and you usually see the biggest single lift in Core Web Vitals across the whole catalog.

That template-level work also exposes CLS issues.

CLS hits ecommerce stores that load promotional banners, cookie consent bars, or dynamic pricing after the initial render. A product page that jumps when a CSS-styled "10% off" banner loads above the fold creates a bad user experience and a bad CLS score at the same time. The fix is unglamorous: set explicit dimension attributes on images and reserve space for anything that appears after load.

INP demands attention for interactive ecommerce features. A product page with a JavaScript-heavy size selector that blocks the main thread for 400ms when a user taps "Size 10" will fail INP even if it passes LCP and CLS. INP needs field data from real users, such as the Chrome UX Report data shown in PageSpeed Insights and Search Console or your own real-user monitoring (RUM), because lab audits like Lighthouse don't reproduce real interaction patterns.

The priority order is straightforward: fix LCP first (product image delivery and server response time), then CLS (dimension attributes and reserved space for dynamic content), then INP (JavaScript execution and main thread blocking). Do it in that order and each round of fixes makes the next one easier to land.

Image Optimization for Product Pages: WebP, Lazy Loading, and Alt Text at Scale

On most ecommerce sites, product images create the biggest performance drag. They also tend to be the most neglected SEO asset. A 2MB JPEG hero image that could ship as a correctly sized and compressed 180KB WebP file costs page speed and crawl efficiency.

WebP format adoption should sit at the top of your image optimization backlog. WebP cuts file size by 25-35% versus JPEG at the same visual quality, and support is now standard across Chrome, Firefox, Safari, and Edge. Most ecommerce platforms either output WebP by default or get there through an image CDN. Shopify serves WebP automatically through its CDN. WooCommerce needs a plugin or a CDN setup. Magento 2.3+ supports WebP via built-in image optimization settings.

Lazy loading defers off-screen images until the user scrolls. Use the native loading="lazy" attribute - no JavaScript library required. One rule matters here: never lazy load the hero product image. That hero image usually becomes the LCP element, and lazy loading pushes the LCP out, which drags down Core Web Vitals. Keep lazy loading for secondary product images, related product thumbnails, and review images.
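A template-level sketch of those rules for a product page, assuming you render image tags server-side with WebP and JPEG versions of each image available. Explicit width and height reserve layout space (the CLS fix covered earlier), the hero skips lazy loading, and everything else gets loading="lazy". The fetchpriority="high" hint on the hero is an addition not mentioned above: it's a standard HTML attribute that asks the browser to fetch the likely LCP image early. The function name is hypothetical.

```python
from html import escape


def product_picture(src_webp: str, src_jpeg: str, alt: str,
                    width: int, height: int, is_hero: bool) -> str:
    """Render a <picture> element for one product image.

    - WebP source with a JPEG fallback for browsers that lack WebP.
    - width/height always set so the browser reserves space (CLS).
    - Hero image is never lazy loaded, since it is usually the LCP element.
    """
    priority = 'fetchpriority="high"' if is_hero else 'loading="lazy"'
    return (
        "<picture>"
        f'<source srcset="{escape(src_webp)}" type="image/webp">'
        f'<img src="{escape(src_jpeg)}" alt="{escape(alt)}" '
        f'width="{width}" height="{height}" {priority}>'
        "</picture>"
    )


print(product_picture("/img/kayano-30.webp", "/img/kayano-30.jpg",
                      "Asics Gel-Kayano 30", 800, 800, is_hero=True))
```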

Alt text at scale is where image SEO falls apart for ecommerce teams. Writing unique alt text for 50,000 product images won't happen. Templates will. A format like {Product Name} - {Colour} - {Brand} applied across the catalog produces descriptive, keyword-aligned alt text without manual work. Variant images need the variant attribute in the string. "Asics Gel-Kayano 30 - Midnight Blue - Asics - Women's Running Shoe" gives screen readers and Google Image Search far more to work with than an empty alt attribute or a filename. You can also build backlinks with images by making product photography genuinely linkable - a tactic that compounds the SEO value of the image investment.
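The alt text template is a few lines of string assembly. A sketch assuming your catalog data exposes a name, colour, brand, and optional product type per image (hypothetical field names); empty parts are skipped so images without a variant attribute don't end up with dangling separators.

```python
from typing import Optional


def alt_text(name: str, colour: Optional[str], brand: str,
             product_type: Optional[str] = None) -> str:
    """Build '{Product Name} - {Colour} - {Brand}' alt text, skipping blanks."""
    parts = (name, colour, brand, product_type)
    return " - ".join(p.strip() for p in parts if p and p.strip())


print(alt_text("Asics Gel-Kayano 30", "Midnight Blue", "Asics", "Women's Running Shoe"))
# Asics Gel-Kayano 30 - Midnight Blue - Asics - Women's Running Shoe
```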

Indexation Control: Making Sure Google Sees the Right Pages - and Ignores the Rest

Indexation control means deciding which pages Google should index, and which pages stay out. Most ecommerce stores never make that call - they let platform defaults decide. Those defaults fail in most setups.

Pages that belong in the index for a typical ecommerce store include the homepage, category pages, subcategory pages, product pages for canonical variants, brand landing pages, and editorial content like buying guides or blog posts. Everything else belongs out of the index: account pages, cart, checkout, wishlist, internal search results, filter parameter URLs, and paginated duplicates.

The mechanism for exclusion matters. robots.txt, noindex tags, and canonicals do different jobs. Treating them as interchangeable causes index bloat and weakens duplicate handling.

- robots.txt disallow blocks crawling at the URL level. If external links point at the URL, Google can still index it based on those links.

- noindex meta tag keeps a page out of the index while still allowing crawling. Use it for pages you want excluded but still want Googlebot to access, like thin tag archives on WooCommerce stores.

- Canonical tags consolidate signals to one indexable URL. Use canonicals for variants, filter URLs, and parameter-driven duplicates.

Canonicals only work if Google can fetch the page. Blocking a URL in robots.txt while also pointing it to a canonical target is a common misstep. If Googlebot can't crawl the source page, it can't see the canonical tag, and the canonical signal doesn't count. Let robots.txt allow crawling, then let the canonical tag handle the indexation choice.
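The robots.txt-versus-canonical conflict is easy to detect automatically. A sketch, assuming a crawler export that maps each URL to the href in its rel="canonical" tag; it uses Python's urllib.robotparser, which matches rules by plain prefix and doesn't support Googlebot's * and $ wildcards, so results can differ from Google's for wildcard rules. Names and URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_canonical_sources(robots_lines: list[str], canonicals: dict[str, str],
                              agent: str = "Googlebot") -> list[str]:
    """URLs that canonicalise elsewhere but are disallowed in robots.txt.

    Googlebot can't fetch these pages, so it never sees their canonical tag.
    """
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return sorted(
        source for source, target in canonicals.items()
        if source != target and not parser.can_fetch(agent, source)
    )
```

Every URL this returns needs one of the two controls removed: either allow crawling so the canonical counts, or drop the canonical and accept the block.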

For a practical ecommerce indexation audit, run a Screaming Frog crawl and check:

- 200 status pages with noindex tags. Confirm they're excluded on purpose.

- XML sitemap URLs returning noindex. Pull them from the sitemap right away.

- Self-referencing canonicals. Make sure those are the versions you want indexed.

- Canonicals pointing to other URLs. Confirm the target is indexable and returns 200.

- Orphan pages that still show as indexed. With no internal links, crawlers can only rediscover them through sitemaps or external links, and they tend to lose visibility over time.
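Several of those checks can run over a crawl export. A sketch, assuming each row is a dict with hypothetical keys (url, status, noindex, canonical, inlinks) and that the sitemap URLs have been loaded into a set; map the keys to whatever columns your crawler actually exports. Orphans only show up if the crawl also seeded URLs from the sitemap or another list, since a link-following crawl can't find them on its own.

```python
def indexation_audit(rows: list[dict], sitemap_urls: set[str],
                     homepage: str = "/") -> dict[str, list[str]]:
    """Flag sitemap noindex URLs, bad canonical targets, and orphans."""
    by_url = {row["url"]: row for row in rows}
    issues = {"sitemap_noindex": [], "bad_canonical_target": [], "orphans": []}
    for row in rows:
        url = row["url"]
        if url in sitemap_urls and row["noindex"]:
            issues["sitemap_noindex"].append(url)
        canonical = row.get("canonical")
        if canonical and canonical != url:
            target = by_url.get(canonical)
            # The target must be in the crawl, return 200, and be indexable.
            if target is None or target["status"] != 200 or target["noindex"]:
                issues["bad_canonical_target"].append(url)
        if row["inlinks"] == 0 and url != homepage:
            issues["orphans"].append(url)
    return issues
```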

Handling Out-of-Stock and Discontinued Product Pages Without Losing Link Equity

Product page lifecycle management is one of the most searched and least well-answered topics in ecommerce SEO. The current top-10 results for queries like "what to do with out of stock product pages SEO" tend to stay vague. We won't. This is the decision framework we use.

The three scenarios require three different technical responses:

Scenario 1: Temporarily out of stock (expected to return)

Keep the page live and indexed. Period.

Add clear messaging that the product is temporarily unavailable, include an email notification signup, and, where it fits, add related in-stock alternatives. Do not noindex. Do not redirect. The URL keeps its ranking potential and link equity, and when stock returns, it rebounds faster than a page you removed and later put back.

Scenario 2: Permanently discontinued - no backlinks or ranking value

A 404 is fine if the page has no external backlinks, no meaningful organic traffic, and no rankings worth keeping. Google drops it from the index over time.

That "no backlinks" check has to be real, not a guess. Use Ahrefs or Semrush and confirm there are zero inbound links before you serve a 404.

Scenario 3: Permanently discontinued - with backlinks or ranking history

This is the one that hits revenue. A product page with 15 referring domains and a history of ranking for commercial terms carries accumulated link equity. A 404 wipes that out.

The right move is a 301 redirect to the closest match:

- Replacement product, if there is one.

- Parent category, if there isn't.

- A curated "similar products" landing page when the category is too broad and you'd otherwise dump users onto a generic shelf page.

That redirect choice matters because Google treats status codes differently. Google Search Central's redirect documentation treats a 301 as a strong signal that the destination should replace the old URL in search results, which is how the old page's signals, links included, consolidate onto the new one. A 302 is only a weak signal, so Google may keep the old URL indexed instead. A 404 tells Google the page is gone, and the links pointing at it stop supporting anything on your site. If your store has earned product-page links through PR campaigns, influencer partnerships, or organic link acquisition, the redirect plan for discontinued products isn't a minor technical cleanup. It's link equity preservation at scale. Our managed service includes ongoing redirect auditing as part of the technical link equity work we do for ecommerce clients.
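Redirect plans drift over time: a discontinued product redirects to its replacement, the replacement is later discontinued, and the chain grows. Collapsing chains so every old URL points straight at its final destination keeps each path to a single hop. A sketch over a plain source-to-target dict (a hypothetical structure, e.g. exported from your redirect manager); it raises on loops instead of guessing.

```python
def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every redirected URL straight at its final destination.

    A -> B -> C becomes A -> C and B -> C. Raises ValueError on loops,
    which would otherwise strand both users and link equity.
    """
    flat = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        while target in redirects:  # target is itself redirected: follow it
            if target in seen:
                raise ValueError(f"redirect loop involving {source}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat


plan = {
    "/products/kayano-29/": "/products/kayano-30/",
    "/products/kayano-30/": "/running-shoes/womens/",
}
print(flatten_redirects(plan))
```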

Link equity preservation also comes up when there's nowhere reasonable to redirect. If a discontinued product page ranks for a high-volume query and you don't have a clean destination, convert it into a "discontinued product" page with a clear explanation, related alternatives, and a canonical tag pointing to itself. That keeps the ranking signal while setting the right expectation for users.
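The three scenarios, plus the no-clean-destination fallback, can be encoded as a decision function so lifecycle calls are made the same way every time. The fields are hypothetical inputs you'd populate from inventory data, a backlink tool, and Search Console. It simplifies one step: the curated "similar products" landing page from Scenario 3 is left as a judgment call rather than encoded.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProductPage:
    returning: bool             # stock expected back
    referring_domains: int      # from a backlink tool export
    has_rankings: bool          # meaningful organic traffic or rankings
    replacement_url: Optional[str] = None
    category_url: Optional[str] = None


def lifecycle_action(page: ProductPage) -> str:
    # Scenario 1: temporarily out of stock.
    if page.returning:
        return "keep live and indexed"
    # Scenario 2: discontinued, nothing worth preserving.
    if page.referring_domains == 0 and not page.has_rankings:
        return "404"
    # Scenario 3: discontinued with equity; closest match wins.
    if page.replacement_url:
        return f"301 -> {page.replacement_url}"
    if page.category_url:
        return f"301 -> {page.category_url}"
    # No clean destination: keep a self-canonical "discontinued" page.
    return "keep as discontinued page"
```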

Structured Data for Ecommerce: Schema Markup That Earns Rich Snippets and Drives Click-Through

Structured data is how ecommerce sites send product details to Google in a machine-readable format. Done right, it enables rich snippets that lift click-through rate without needing a ranking gain. A listing that shows star rating, price, and availability in the SERP wins more clicks than a plain result sitting in the same position. Understanding SERP feature types helps you see exactly which rich results are available to product pages and what each one requires.

Rich snippets start with Google's requirements. Google's Product structured data documentation lays out the schema types and properties that qualify for rich results in Google Search. The core Product schema properties that ecommerce sites should implement:

| Property | Required for Rich Results | Value | Example |
|---|---|---|---|
| name | Yes | Product name | "Asics Gel-Kayano 30" |
| image | Yes | URL(s) of product image(s) | Array of image URLs |
| description | Recommended | Product description | Plain text or HTML |
| sku | Recommended | Stock keeping unit | "AGK30-BLU-8" |
| brand | Recommended | Brand name | "Asics" |
| offers | Yes (for price display) | Offer object | See below |
| aggregateRating | Recommended | Rating + count | 4.5 out of 5, 234 reviews |
| review | Optional | Individual reviews | Structured review objects |

The offers property is where most builds break. Include price, priceCurrency, and availability inside the Offer object to show pricing in rich results, and use the schema.org vocabulary for availability: https://schema.org/InStock, https://schema.org/OutOfStock, or https://schema.org/PreOrder. Product schema without an Offer can still earn review stars if it carries aggregateRating or review, but it misses the price and availability display that drives the CTR lift.

For stores with product variants, you need to pick an implementation approach and stick to it. Google's documentation supports both a single Product schema with multiple Offer objects (one per variant) and separate schema per variant page. Our practical recommendation stays simple: if variant pages are canonicalised to the parent product page, put a single Product schema on the canonical and include multiple Offer objects. If variant pages are independently indexed (we advise against that for near-identical variants), each page needs its own Product schema.

Review schema needs extra care. Aggregate ratings in SERPs, the gold stars that push CTR, require aggregateRating with ratingValue plus either reviewCount or ratingCount. Google's review snippet guidelines also require the ratings to come from genuine reviews shown on the page, and self-serving reviews, where a business marks up reviews of itself, aren't eligible. Fake or inflated ratings in schema can trigger a structured data manual action from Google.
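A sketch of the single-Product, multiple-Offer approach for a canonical product page, with aggregateRating added only when genuine reviews exist. The input shape (a list of variant dicts with sku, price, currency, and in_stock keys) is hypothetical; the output is what you'd place inside a script tag of type application/ld+json. Validate the result in Google's Rich Results Test rather than trusting the builder.

```python
import json
from typing import Optional


def product_jsonld(name: str, images: list[str], brand: str,
                   variants: list[dict],
                   rating: Optional[tuple[float, int]] = None) -> str:
    """Product JSON-LD with one Offer per variant for the canonical page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "image": images,
        "brand": {"@type": "Brand", "name": brand},
        "offers": [
            {
                "@type": "Offer",
                "sku": v["sku"],
                "price": f'{v["price"]:.2f}',
                "priceCurrency": v["currency"],
                "availability": (
                    "https://schema.org/InStock" if v["in_stock"]
                    else "https://schema.org/OutOfStock"
                ),
            }
            for v in variants
        ],
    }
    # Only emit stars when there are genuine customer reviews behind them.
    if rating is not None and rating[1] > 0:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating[0],
            "reviewCount": rating[1],
        }
    return json.dumps(data, indent=2)
```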

Platform choices change the work, not the requirement. Shopify's default themes include basic Product schema, but it's often incomplete - missing aggregateRating, using the wrong availability values, or leaving out sku. WooCommerce usually needs a plugin such as Yoast SEO, Rank Math, or Schema Pro for complete Product schema. Magento 2's default theme includes basic schema markup, but it's often incomplete or malformed and fails Google's Rich Results Test.

Testing and monitoring structured data isn't optional. Use Google's Rich Results Test to validate a representative sample of product pages, then watch the Rich Results report in Google Search Console for errors and warnings. One common failure: price values that don't match the visible page price. Google's structured data guidelines require markup to match the visible content, so a mismatch can cost you rich results and, if it's widespread, lead to a structured data manual action.
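Catching price mismatches before Google does is a comparison between the Offer prices in your JSON-LD and the prices rendered on the page. A sketch, assuming your QA tooling has already scraped the visible price per SKU into a dict (hypothetical input) and that prices use a dot as the decimal separator; Decimal avoids float rounding when comparing.

```python
import json
import re
from decimal import Decimal


def parse_price(text: str) -> Decimal:
    """Pull a numeric price out of display text such as '£1,299.00'."""
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    if match is None:
        raise ValueError(f"no price found in {text!r}")
    return Decimal(match.group().replace(",", ""))


def price_mismatches(jsonld: str, visible_prices: dict[str, str]) -> list[str]:
    """SKUs whose Offer price in the JSON-LD differs from the visible price."""
    offers = json.loads(jsonld).get("offers", [])
    if isinstance(offers, dict):  # a single Offer object is also valid
        offers = [offers]
    mismatched = []
    for offer in offers:
        shown = visible_prices.get(offer.get("sku"))
        if shown is None:
            continue  # no visible price captured for this SKU
        if Decimal(str(offer["price"])) != parse_price(shown):
            mismatched.append(offer["sku"])
    return mismatched
```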

Beyond Product schema, ecommerce sites should implement:

- BreadcrumbList schema on all category and product pages - generates breadcrumb display in SERPs and reinforces site architecture signals

Two schema types that older checklists still recommend no longer pay off for most stores:

- Sitelinks search box markup (WebSite with a SearchAction) on the homepage - Google retired the sitelinks search box in late 2024, so the markup no longer produces a search box in the SERP

- FAQPage schema on product pages - since August 2023, Google shows FAQ rich results only for well-known, authoritative government and health sites

Mobile-First Indexing for Ecommerce: What It Means When Most of Your Traffic Is on a Phone

Google uses the mobile version of your site as the primary basis for indexing and ranking. This has been the default for all new sites since 2019 and became universal in 2023. For ecommerce stores where mobile traffic routinely accounts for 60-70% of sessions, mobile-first indexing isn't a future consideration - it's the current reality your rankings already reflect.

That reality shows up fast on product pages. If your mobile product pages drop content that exists on desktop, such as truncated descriptions, missing specifications, or omitted reviews, Google's index reflects the mobile version and that missing content won't support rankings. A store that shows full product specifications on desktop but leaves them out of the mobile page entirely removes those specifications from Google's index. Collapsing them into an accordion is a different case: Google indexes content hidden for mobile UX as long as it's present in the page's HTML.

Content parity between mobile and desktop is non-negotiable. Every piece of content that drives relevance signals - product descriptions, specifications, review text, category copy - needs to be accessible and crawlable on the mobile version of the page. Content tucked behind JavaScript interactions on mobile, like collapsed tabs or "read more" truncation, needs proof. Verify crawlability in Google Search Console's URL Inspection tool with mobile rendering selected. Knowing how to use Google Search Console effectively makes this verification step significantly faster across large catalogs.

Mobile performance also decides rankings because mobile network conditions vary more than desktop connections. A product page that loads in 2.1 seconds on a fast desktop connection may load in 4.8 seconds on a mid-range Android device on a 4G connection. Core Web Vitals reports in Google Search Console come from field data pulled from real users, and the mobile report reflects those real mobile conditions, not lab results.

Field data exposes the same set of mobile breakpoints again and again. Common mobile-specific technical issues on ecommerce stores:

- Unplayable video content: Autoplay videos that work on desktop can fail on mobile due to browser restrictions. Swap in poster images, or use HTML5 video with the right mobile attributes.

- Touch target sizing: Buttons and links that are too small for finger taps hurt UX. They also drag down INP on mobile.

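- Intrusive interstitials: Pop-ups that cover the main content on mobile, such as full-screen discount or newsletter overlays, run against Google's guidance on intrusive interstitials and can hurt how the page performs in search. Google has said interstitials shown to meet a legal obligation, such as cookie consent, aren't treated the same way, but a full-screen consent wall still hurts the shopping experience.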

- Viewport configuration: Missing or incorrect viewport meta tags make mobile browsers render a scaled-down desktop layout with tiny text and tap targets, which undermines the mobile page Google indexes and ranks.
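A quick template check for the viewport tag using only the standard library's html.parser. It looks for a meta viewport whose content includes width=device-width; the function and class names are hypothetical, and a real audit would run it over rendered HTML from each template type (product, category, cart).

```python
from html.parser import HTMLParser
from typing import Optional


class _ViewportFinder(HTMLParser):
    """Records the content of the first <meta name="viewport"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.content: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if tag == "meta" and name == "viewport" and self.content is None:
            self.content = attributes.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    finder = _ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return False
    return "width=device-width" in finder.content.replace(" ", "").lower()
```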

Mobile-first indexing means the mobile crawl isn't optional. The mobile-first audit should sit inside every ecommerce technical SEO plan. Run a dedicated mobile crawl in Screaming Frog using a Googlebot Smartphone user agent, then compare the crawl output against the desktop crawl to spot content, internal link, or status-code mismatches between the two versions.
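Comparing the two crawls is a join on URL. A sketch, assuming both crawls have been exported into dicts keyed by URL with hypothetical status, word_count, and outlinks fields; the 80% word-count cut-off is an arbitrary placeholder for "materially less content on mobile", not a Google threshold.

```python
def parity_issues(desktop: dict[str, dict], mobile: dict[str, dict],
                  min_word_ratio: float = 0.8) -> list[str]:
    """Flag URLs whose mobile crawl differs materially from the desktop crawl."""
    issues = []
    for url, d in sorted(desktop.items()):
        m = mobile.get(url)
        if m is None:
            issues.append(f"{url}: missing from mobile crawl")
            continue
        if m["status"] != d["status"]:
            issues.append(f"{url}: status {d['status']} desktop vs {m['status']} mobile")
        if m["word_count"] < min_word_ratio * d["word_count"]:
            issues.append(f"{url}: {m['word_count']} words on mobile vs {d['word_count']} on desktop")
        if m["outlinks"] < d["outlinks"]:
            issues.append(f"{url}: {m['outlinks']} internal links on mobile vs {d['outlinks']} on desktop")
    return issues
```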

Duplicate Content in Ecommerce: The Hidden Rankings Killer Across Product Variants and Categories

Duplicate content doesn't trigger a penalty in the traditional sense, but it forces Google to choose which version of a page to rank - and Google's choice is often the one you wouldn't pick. Across a large ecommerce catalog, unmanaged duplication dilutes ranking signals, splits link equity, and clutters the crawl profile, which lowers indexation efficiency.

The crawl profile gets messy for the same reasons on most stores. The sources of duplicate content in ecommerce are specific and predictable:

Product variants: A t-shirt available in six colours, each with its own URL, creates six pages with near-identical content: same title tag, same description, same specifications, different colour name and image. Without canonical tags, Google has to pick a primary version on its own, may index several of the six, and can choose one you wouldn't.

Category pagination: /category/running-shoes/ and /category/running-shoes/?page=2 share the same category description, navigation, and metadata. Page 2 ends up as a near-duplicate of page 1 for most signals.

Filtered category pages: As covered in the URL structure section, filter combinations generate duplicate or near-duplicate category pages at scale.

Manufacturer descriptions: Stores that use manufacturer-supplied product descriptions publish the same copy as every other retailer selling the same product. Google reads that as duplication and may rank the manufacturer's own site, or a bigger retailer with stronger authority, ahead of you.

WWW vs. non-WWW and HTTP vs. HTTPS: If both http://www.example.com/product/ and https://example.com/product/ return 200 responses, they're duplicate pages. Fix this at the server level with redirects, then back it up with a sitewide canonical approach.

A canonical approach is the control point. Moz's guide to duplicate content in ecommerce explains how canonical tags and redirect strategies work together to consolidate ranking signals across variant and filtered pages. The canonical tag (<link rel="canonical" href="...">) tells Google which version of a page is the primary version and should collect the ranking signals. For ecommerce, the canonical strategy should be:

- Product variant URLs should canonicalise to the primary variant, usually the default colour/size combination.

- Filtered category URLs should canonicalise to the base category URL.

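- Paginated category pages should carry a self-referencing canonical (page 2 points to page 2), not a canonical to page 1. Google's pagination guidance advises against using the first page as the canonical for the whole sequence, because products linked only from deeper pages may then not be crawled or indexed.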

- WWW and non-WWW versions, and HTTP and HTTPS versions, should 301 redirect to the chosen primary domain and protocol.
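The rules above can be expressed as a small URL-normalisation function. This is a minimal sketch, not a platform implementation. It assumes a hypothetical primary origin (https://www.example.com), hypothetical filter parameter names (color, size, brand, sort, view), and a Shopify-style ?variant= parameter whose removal leaves the product URL showing the default variant. Parameters outside those sets, such as a page number, pass through unchanged, so the sketch takes no position on pagination.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical values: replace with your store's primary origin and parameter keys.
PRIMARY_SCHEME = "https"
PRIMARY_HOST = "www.example.com"
FILTER_PARAMS = {"color", "size", "brand", "sort", "view"}
VARIANT_PARAMS = {"variant"}  # assumption: variants selected via ?variant=<id>


def canonical_url(url: str) -> str:
    """Map a product, variant or filtered category URL to its canonical form."""
    parts = urlsplit(url)
    # Drop filter and variant parameters; keep any others (e.g. page) in their original order.
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in FILTER_PARAMS and key not in VARIANT_PARAMS
    ]
    # Force the primary scheme and host, and discard any fragment.
    return urlunsplit((PRIMARY_SCHEME, PRIMARY_HOST, parts.path, urlencode(kept), ""))


def canonical_tag(url: str) -> str:
    """Render the <link rel="canonical"> element for a requested URL."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'


if __name__ == "__main__":
    print(canonical_tag("http://example.com/products/basic-tee?variant=123"))
```

Normalising the host in the canonical tag backs up the server-level 301s. It does not replace them.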

The manufacturer description problem requires a content investment. The only sustainable solution is unique product descriptions written specifically for your store. For a catalog of thousands of products, this needs a systematic content production process, not a one-time project. Start with the highest-revenue products, then work down the catalog by commercial value. Even a 100-word unique introduction above a manufacturer description can add enough differentiation to reduce duplicate content signals. Pairing that content investment with curated links to your top category pages can accelerate the authority gains that make unique content worth writing.
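One way to build that prioritised queue is to compare each live description against the supplier's copy and rank the near-matches by revenue. The sketch below uses Python's difflib.SequenceMatcher as a simple similarity measure. The Product fields and the 0.9 threshold are hypothetical, and both would need tuning against your own catalog export and supplier feed.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class Product:
    # Hypothetical record shape: map these fields from your catalog export.
    sku: str
    description: str           # copy currently published on the product page
    supplier_description: str  # copy from the manufacturer feed
    annual_revenue: float


def rewrite_queue(products: list[Product], threshold: float = 0.9) -> list[str]:
    """Return SKUs whose copy mostly matches the supplier text, highest revenue first."""
    flagged = []
    for product in products:
        similarity = SequenceMatcher(
            None, product.description.lower(), product.supplier_description.lower()
        ).ratio()
        if similarity >= threshold:
            flagged.append(product)
    flagged.sort(key=lambda p: p.annual_revenue, reverse=True)
    return [p.sku for p in flagged]
```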

Platform-Specific Technical SEO: Shopify, WooCommerce, and Magento Compared

The ecommerce platform your store runs on sets the default technical SEO issues you'll inherit and the fixes you can ship without custom work. An ecommerce SEO consultant advising on a migration needs to be clear on these tradeoffs, because they're rarely symmetric. An in-house SEO manager also needs to separate platform constraints from day-to-day implementation choices, otherwise the backlog turns into noise.

| Feature | Shopify | WooCommerce | Magento 2 |
|---|---|---|---|
| URL structure control | Limited - /products/ and /collections/ forced | Full control | Full control |
| XML sitemap | Auto-generated, limited customisation | Plugin-dependent | Native, configurable |
| Canonical tags | Auto-generated (sometimes incorrectly) | Plugin-dependent | Native |
| Schema markup | Basic Product schema in themes | Plugin-dependent | Native but often malformed |
| Page speed | Good (Shopify CDN) | Variable (hosting-dependent) | Variable (server config-dependent) |
| Faceted navigation control | Limited native tools | Plugin-dependent | Native layered navigation controls |
| Robots.txt editing | Editable since 2021 | Full access | Full access |
| JavaScript rendering | Liquid templates (fast) | PHP with JS plugins (variable) | PHP with JS (complex) |

Shopify's known SEO limitations are well-documented, but teams still misread what is and isn't changeable. The forced /products/ and /collections/ URL structure is a hard constraint - those prefixes don't move without custom development, and even then Shopify's redirect handling often complicates a clean migration. Shopify also creates duplicate URLs for products that sit in multiple collections: /collections/running-shoes/products/asics-gel-kayano-30/ and /products/asics-gel-kayano-30/ both resolve for the same product. Shopify papers over that with canonicals pointing to the /products/ URL. The duplicate crawl surface still exists.

That crawl surface matters.
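To size that surface, you can map each crawled URL back to its /products/ equivalent and count how many Googlebot requests landed on collection-scoped duplicates. This is a minimal sketch. It assumes you already have a list of Googlebot request paths from server logs or a crawl export, and that input format is hypothetical.

```python
import re
from collections import Counter
from typing import Optional

# Matches Shopify's collection-scoped product paths, e.g.
# /collections/running-shoes/products/asics-gel-kayano-30
COLLECTION_SCOPED = re.compile(r"^/collections/[^/]+/products/([^/?#]+)")


def product_path(path: str) -> Optional[str]:
    """Return the /products/ URL a collection-scoped path duplicates, or None."""
    match = COLLECTION_SCOPED.match(path)
    return f"/products/{match.group(1)}" if match else None


def duplicate_crawl_share(paths: list[str]) -> tuple[float, Counter]:
    """Share of requests spent on collection-scoped duplicates, plus hits per product."""
    duplicates: Counter = Counter()
    for path in paths:
        target = product_path(path)
        if target is not None:
            duplicates[target] += 1
    share = sum(duplicates.values()) / len(paths) if paths else 0.0
    return share, duplicates


# Hypothetical sample of Googlebot request paths.
sample = [
    "/products/asics-gel-kayano-30",
    "/collections/running-shoes/products/asics-gel-kayano-30",
    "/collections/sale/products/asics-gel-kayano-30",
    "/collections/running-shoes",
]
share, dupes = duplicate_crawl_share(sample)
print(f"{share:.0%} of requests hit collection-scoped duplicates")  # prints: 50% of requests hit collection-scoped duplicates
```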

Shopify earns its keep on performance. CDN-delivered assets and automatic WebP conversion remove a chunk of the usual tuning work. For most small to mid-market stores, Shopify's default performance beats a poorly configured WooCommerce build on shared hosting, and it does it without a dev sprint.

WooCommerce offers the most flexibility, and it punishes teams that treat flexibility like a free upgrade. Full URL control lets you build an ecommerce URL structure that matches how users shop and how Google crawls, but it also lets you ship a mess in a single afternoon. Tag pages, attribute pages, and custom taxonomy pages all spit out indexable URLs by default - and for most stores, those pages don't belong in the index. WooCommerce's default robots.txt blocks none of them, and the default sitemap includes them all. Fixing that takes deliberate rules and testing, not a plugin install and a checkbox.
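Before writing those rules, it helps to see how many taxonomy URLs the sitemap is actually exposing. This minimal sketch reads a standard urlset sitemap with Python's xml.etree.ElementTree and flags URLs under path prefixes you have decided to keep out of the index. It assumes WooCommerce's default /product-tag/ base. The prefix tuple is an assumption to extend per site, for example with attribute or custom taxonomy bases. It handles a single urlset file, not a sitemap index.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
# Assumption: path prefixes this store has decided should stay out of the index.
LOW_VALUE_PREFIXES = ("/product-tag/",)


def low_value_sitemap_urls(sitemap_xml: str, prefixes: tuple = LOW_VALUE_PREFIXES) -> list[str]:
    """List <loc> URLs in a urlset sitemap whose path starts with a low-value prefix."""
    root = ET.fromstring(sitemap_xml)
    flagged = []
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        if urlsplit(url).path.startswith(prefixes):
            flagged.append(url)
    return flagged
```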

Those rules also need to hold under scale, which is where platform choices start to bite.

Magento 2 is the most powerful and the most technically demanding. Its layered navigation can generate a long tail of parameter URL variants that burns crawl budget fast, especially on large catalogs. The upside: Magento gives you native controls over which attributes generate indexable URLs, so you can contain the damage if the implementation is disciplined. Performance hinges on caching. Magento's full-page cache isn't optional - without it, product pages slow down and the problem gets worse under load. That depth of control is the appeal, and it also means Magento technical SEO usually needs specialist implementation rather than plugin-style fixes.
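A quick calculation shows how fast the long tail grows. If each filterable attribute can be left unset or set to one value, the number of filtered states for one category is the product of (values + 1) across attributes, minus the unfiltered page. If the storefront allows multi-select, each factor becomes 2 raised to the value count. The attribute counts below (8 colours, 10 sizes, 12 brands, 5 materials) are hypothetical. The sketch also ignores parameter order and sort options, which multiply the total further on setups that don't normalise them.

```python
from math import prod


def filter_url_count(values_per_attribute: list[int], multi_select: bool = False) -> int:
    """Count filtered URL states for one category, excluding the unfiltered page."""
    if multi_select:
        # Each value can be switched on or off independently.
        states = prod(2 ** n for n in values_per_attribute)
    else:
        # Each attribute is either unset or set to exactly one value.
        states = prod(n + 1 for n in values_per_attribute)
    return states - 1


print(filter_url_count([8, 10, 12, 5]))                     # 7721
print(filter_url_count([8, 10, 12, 5], multi_select=True))  # 34359738367
```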

Specialist implementation doesn't mean "no quick wins." Each platform has easy improvements that don't need developer time: schema upgrades at the Shopify theme level, Yoast SEO's settings for noindexing tag pages on WooCommerce, and Magento's built-in URL rewrite management. Start there. Custom development belongs after the basics stop moving the needle. Running a technical SEO audit before committing to platform-specific fixes helps you separate genuine platform constraints from implementation choices that can be corrected without a migration.

Technical SEO Audit Checklist: A Prioritised Action Plan for Ecommerce Sites

A technical SEO audit for an ecommerce store isn't a one-time event - it's recurring hygiene. Large catalogs need a quarterly cadence. Stores with high product turnover need monthly runs, because indexation and crawl waste creep back in as inventory changes. The checklist below is prioritised by impact, not alphabetically.

Priority 1: Crawl and Indexation (Highest Impact)

- [ ] Verify all revenue-generating pages (categories, subcategories, products) are indexed in Google Search Console

- [ ] Confirm XML sitemap contains only indexable, canonical, 200-status URLs

- [ ] Audit robots.txt to block cart, checkout, account, wishlist, and internal search pages

- [ ] Identify and resolve crawl budget waste from parameter URLs and filter combinations

- [ ] Check for orphan pages (indexed but not internally linked)

- [ ] Verify canonical tags on all product variant and filtered category pages

Priority 2: Duplicate Content

- [ ] Audit product variant pages for canonical tag implementation

- [ ] Identify products using manufacturer descriptions and schedule unique content production

- [ ] Verify WWW/non-WWW and HTTP/HTTPS redirects are correctly implemented sitewide

- [ ] Check category pagination for correct canonical handling

Priority 3: Page Speed and Core Web Vitals

- [ ] Run Core Web Vitals report in Google Search Console - identify failing page groups

- [ ] Audit LCP element on product page template - verify hero image is preloaded and served in WebP

- [ ] Check CLS for dynamic content elements (banners, consent bars, pricing)

- [ ] Audit INP for interactive product page elements (size selectors, add-to-cart, galleries)

- [ ] Verify lazy loading is applied to secondary images only, not hero images

Priority 4: Structured Data

- [ ] Validate Product schema on a sample of 10 product pages using Google's Rich Results Test

- [ ] Verify Offer object includes price, priceCurrency, and availability

- [ ] Check Rich Results report in Google Search Console for schema errors and warnings

- [ ] Implement BreadcrumbList schema on category and product pages if not present

- [ ] Audit aggregateRating implementation for review count accuracy

Priority 5: Mobile and UX

- [ ] Run mobile crawl with Googlebot Smartphone user agent - compare against desktop crawl

- [ ] Verify content parity between mobile and desktop product pages

- [ ] Check for intrusive interstitials on mobile

- [ ] Audit touch target sizing on product page interactive elements

Priority 6: Product Page Lifecycle

- [ ] Identify out-of-stock pages - verify they remain indexed with availability messaging

- [ ] Identify discontinued pages with backlinks - implement 301 redirects to relevant alternatives

- [ ] Audit redirect chains - ensure no chain exceeds two hops
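The redirect-chain item lends itself to automation. This sketch takes a map of source URL to redirect target, follows each chain, counts the hops, and flags loops and any chain longer than the two-hop limit in the checklist. The map could come from a crawler or your platform's redirect manager. The plain dict input is an assumption about how you export it.

```python
def redirect_path(start: str, redirects: dict[str, str]) -> tuple[list[str], bool]:
    """Follow redirects from start; return every URL visited and whether it loops."""
    path = [start]
    while path[-1] in redirects:
        target = redirects[path[-1]]
        if target in path:
            return path + [target], True
        path.append(target)
    return path, False


def chain_problems(redirects: dict[str, str], max_hops: int = 2) -> dict[str, str]:
    """Flag redirect sources that loop or take more than max_hops to resolve."""
    problems = {}
    for source in redirects:
        path, looped = redirect_path(source, redirects)
        hops = len(path) - 1
        if looped:
            problems[source] = "loop: " + " -> ".join(path)
        elif hops > max_hops:
            problems[source] = f"{hops} hops: " + " -> ".join(path)
    return problems
```

Fixing a flagged chain usually means pointing every source straight at the final destination.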

This checklist is the backbone of a working ecommerce SEO plan. Run it on the cadence above (quarterly for large catalogs, monthly for stores with high product turnover), track findings in a shared audit doc, and assign a named owner per priority area. The gap between ecommerce stores that grow organic revenue and those that plateau rarely comes down to content quality or link volume - it comes down to whether the team maintains technical basics across thousands of URLs.

Technical debt changes the order of operations.

For stores that have carried technical debt for years, start with the first two categories and stay there until the site is under control. Crawl efficiency and indexation control drive the fastest measurable gains in organic visibility, and they set the base layer that every other optimization relies on. Once the technical foundation is solid, ecommerce link building becomes significantly more effective because the authority you earn actually flows to the pages that matter.

Frequently Asked Questions About Ecommerce Technical SEO

What is ecommerce technical SEO and how is it different from standard SEO?

Ecommerce technical SEO focuses on the technical setup of an online store so search engines can crawl, index, and rank the pages that drive sales. Scale changes everything. A content site might have a few hundred URLs; ecommerce stores can have tens of thousands once variants, internal search, and filters spin out new paths.

That scale creates problems standard SEO rarely deals with. Product variants generate duplicates, faceted navigation burns crawl budget, and product pages change state all the time - out of stock, back in stock, then discontinued. And the stakes are higher. Technical mistakes hit revenue pages first, not just blog posts.

How do I manage crawl budget for a large ecommerce catalog?

Start with robots.txt and cut off the junk. Block low-value URLs like internal search results, cart, checkout, account, and wishlist pages so Googlebot doesn't waste time crawling pages that won't rank.
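A cheap way to confirm those rules do what you intended is to test them against sample URLs before deploying. This sketch uses Python's urllib.robotparser, and the rules and paths are hypothetical because they vary by platform. The standard-library parser only does simple prefix matching. It doesn't implement Google's * and $ wildcards or its most-specific-rule precedence, so treat this as a smoke test rather than a replica of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules; real paths depend on the platform.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart
Disallow: /checkout
Disallow: /account
Disallow: /wishlist
Disallow: /search
"""

# Hypothetical sample paths to check against the rules.
MUST_BLOCK = ["/cart", "/checkout/step-1", "/account/orders", "/wishlist", "/search?q=trainers"]
MUST_ALLOW = ["/collections/running-shoes", "/products/asics-gel-kayano-30"]


def robots_violations(robots_txt: str, base: str = "https://www.example.com") -> list[str]:
    """Report sample URLs whose crawlability doesn't match the intended rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    errors = []
    for path in MUST_BLOCK:
        if parser.can_fetch("Googlebot", base + path):
            errors.append(f"crawlable but should be blocked: {path}")
    for path in MUST_ALLOW:
        if not parser.can_fetch("Googlebot", base + path):
            errors.append(f"blocked but should be crawlable: {path}")
    return errors
```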

Keep that same discipline in your XML sitemap. It should list only indexable, canonical URLs - nothing blocked, nothing noindexed, nothing parameterized. Then pull Google Search Console's Crawl Stats report and look for patterns in what's getting hit: parameter-driven duplicates, filter combinations, and other URL types that soak up crawl time.
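The sitemap rule can be checked mechanically against crawl data. This minimal sketch assumes a crawl export where each URL already carries its status code, canonical target, noindex flag and robots.txt result. The CrawledUrl fields are hypothetical, so map them to whatever your crawler exports. A missing canonical is treated as self-canonical.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass
class CrawledUrl:
    # Hypothetical crawl-export row.
    url: str
    status: int
    canonical: str        # href of the page's rel="canonical", or "" if missing
    noindex: bool
    robots_blocked: bool


def sitemap_rejections(rows: list[CrawledUrl]) -> dict[str, str]:
    """Map each URL that should not be in the XML sitemap to the first reason found."""
    rejected = {}
    for row in rows:
        if row.status != 200:
            rejected[row.url] = f"status {row.status}"
        elif row.robots_blocked:
            rejected[row.url] = "blocked by robots.txt"
        elif row.noindex:
            rejected[row.url] = "noindex"
        elif row.canonical and row.canonical != row.url:
            rejected[row.url] = "canonicalised elsewhere"
        elif urlsplit(row.url).query:
            rejected[row.url] = "parameterised URL"
    return rejected
```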

Crawl time is the metric that matters here. Push it toward product and category pages by using canonical tags where you need consolidation, and robots.txt disallows where parameters create endless duplicates. Filter variations and system pages shouldn't be the main crawl destination.

Should I noindex out-of-stock product pages?

No - not for temporarily out-of-stock products. Keep the page indexed, show availability clearly, and add related product suggestions so the page still helps users and still earns revenue when stock returns.

Ranking history matters. Noindex wipes that out, and when inventory comes back you're rebuilding from scratch. For permanently discontinued products that have backlinks, use a 301 redirect to the closest relevant alternative instead of serving a 404 or slapping on a noindex.

Backlinks set the rule. Only use noindex or a 404 for discontinued pages with no backlinks, no ranking history, and no organic traffic.
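These rules are simple enough to encode, which helps when you're processing a stock export of thousands of SKUs. This is a sketch with hypothetical field names, including a 12-month click window. One assumption goes beyond the answer above. A discontinued page with traffic or ranking history but no backlinks also gets a 301 here, because the rules only exclude such pages from noindex or 404.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    KEEP_INDEXED = "keep indexed, show availability and related products"
    REDIRECT_301 = "301 to the closest relevant alternative"
    NOINDEX_OR_404 = "noindex or 404"


@dataclass
class ProductPage:
    # Hypothetical fields from a stock export joined with backlink and analytics data.
    discontinued: bool          # False covers in-stock and temporarily out-of-stock
    referring_domains: int
    organic_clicks_12m: int
    has_ranking_history: bool


def lifecycle_action(page: ProductPage) -> Action:
    """Apply the out-of-stock and discontinued rules to one product page."""
    if not page.discontinued:
        return Action.KEEP_INDEXED
    if (page.referring_domains == 0 and page.organic_clicks_12m == 0
            and not page.has_ranking_history):
        return Action.NOINDEX_OR_404
    # Assumption: pages with traffic or rankings but no backlinks are redirected too.
    return Action.REDIRECT_301
```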

How do I fix duplicate content caused by faceted navigation filters?

Use a mix of canonical tags and selective robots.txt blocking. That combo holds up in real ecommerce builds because it lets you consolidate what doesn't deserve its own indexable URL while still keeping control of crawl paths.

Canonical tags do the heavy lifting for consolidation. Canonicalise filter-generated URLs back to the base category page for combinations that don't map to real search demand. Robots.txt handles the pure noise - block sorting and display parameters like ?sort=price and ?view=list so they don't turn into crawl traps.

Some filters deserve to rank. For combinations that match real queries - like /running-shoes/womens/waterproof/ - make them indexable and add unique content so they stand on their own. But check inbound links before you block anything, because blocking linked pages cuts off the link equity tied to those URLs.
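That split between indexable, canonicalised and blocked filter URLs can be written as a per-URL rule. This minimal sketch assumes hypothetical parameter names and a hypothetical allow-list of filter combinations you've already validated against keyword data. A has_backlinks flag enforces the check-links-before-blocking step. In practice, an indexable combination would also get a clean static path like the /running-shoes/womens/waterproof/ example above.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical: parameters that never deserve crawling (sorting, display).
BLOCKED_PARAMS = {"sort", "view"}
# Hypothetical: filter combinations validated against real search demand.
INDEXABLE_COMBINATIONS = {
    frozenset({("gender", "womens")}),
    frozenset({("gender", "womens"), ("feature", "waterproof")}),
}


def filter_treatment(url: str, has_backlinks: bool = False) -> str:
    """Decide how a filtered category URL should be handled."""
    parts = urlsplit(url)
    params = parse_qsl(parts.query)
    if not params:
        return "index (base category)"
    if any(key in BLOCKED_PARAMS for key, _ in params):
        # Blocking a linked URL cuts off its link equity, so flag it for review first.
        if has_backlinks:
            return "review before blocking: URL has inbound links"
        return "block in robots.txt"
    # frozenset makes the match independent of parameter order.
    if frozenset(params) in INDEXABLE_COMBINATIONS:
        return "index with unique content"
    return "canonicalise to " + parts.path
```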

What structured data schema should I implement on product pages?

Product schema comes first, and the Offer object needs to be complete. Include price, priceCurrency, and availability using schema.org vocabulary so the page is eligible for pricing rich results.

Reviews are the next layer. Add aggregateRating only when the data reflects real customer reviews, since that's what enables star ratings in SERPs. Then add BreadcrumbList schema across product and category pages to reinforce site structure.
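This Python helper sketches a complete Offer. It emits Product and BreadcrumbList JSON-LD using schema.org property names, and adds aggregateRating only when a review count is supplied. The function names and inputs are hypothetical. The output would go inside a script element of type application/ld+json and should still be checked with Google's Rich Results Test.

```python
import json
from typing import Optional


def product_jsonld(name: str, sku: str, url: str, price: str, currency: str,
                   in_stock: bool, rating: Optional[float] = None,
                   review_count: int = 0) -> str:
    """Build Product JSON-LD with a complete Offer (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": price,
            "priceCurrency": currency,
            # Values from the schema.org ItemAvailability enumeration.
            "availability": ("https://schema.org/InStock" if in_stock
                             else "https://schema.org/OutOfStock"),
        },
    }
    # Only publish ratings backed by real customer reviews.
    if rating is not None and review_count > 0:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        }
    return json.dumps(data, indent=2)


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> str:
    """Build BreadcrumbList JSON-LD from [(name, url), ...], top level first."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)
        ],
    }, indent=2)
```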

Validation is part of the work, not an afterthought. Run Google's Rich Results Test and track the Rich Results report in Google Search Console so errors don't sit in production. Shopify themes usually ship with basic Product schema, but it tends to be incomplete, so audit it against Google's Product structured data documentation.
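A local pre-check can catch incomplete Offers before they reach production, alongside the Rich Results Test. This sketch uses Python's html.parser to pull out application/ld+json blocks and report Product entities missing price, priceCurrency or availability. It assumes top-level Product objects, or a top-level list of them, and does not walk @graph wrappers.

```python
import json
from html.parser import HTMLParser

REQUIRED_OFFER_FIELDS = ("price", "priceCurrency", "availability")


class JsonLdExtractor(HTMLParser):
    """Collect the contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # script text may arrive in several chunks


def missing_offer_fields(html: str) -> list[str]:
    """Report Product entities whose Offer lacks a required field."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    problems = []
    for block in extractor.blocks:
        parsed = json.loads(block)
        items = parsed if isinstance(parsed, list) else [parsed]
        for item in items:
            if item.get("@type") != "Product":
                continue
            offers = item.get("offers")
            if isinstance(offers, dict):
                offers = [offers]
            if not offers:
                problems.append("Product has no offers")
                continue
            for offer in offers:
                for field in REQUIRED_OFFER_FIELDS:
                    if field not in offer:
                        problems.append(f"Offer missing {field}")
    return problems
```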
