Most SEO managers have run a Broadband Speed Test, seen a decent number, and moved on. That's the problem. A 200 Mbps download reading from Ookla tells you nothing about why your client's LCP is sitting at 4.2 seconds. A green score in PageSpeed Insights doesn't guarantee your real users are having a fast experience. And a single test run from one location, at one moment in time, is almost always misleading. Speed testing is a diagnostic discipline - treating it like a one-click health check is one of the most common technical SEO mistakes we see agencies make.

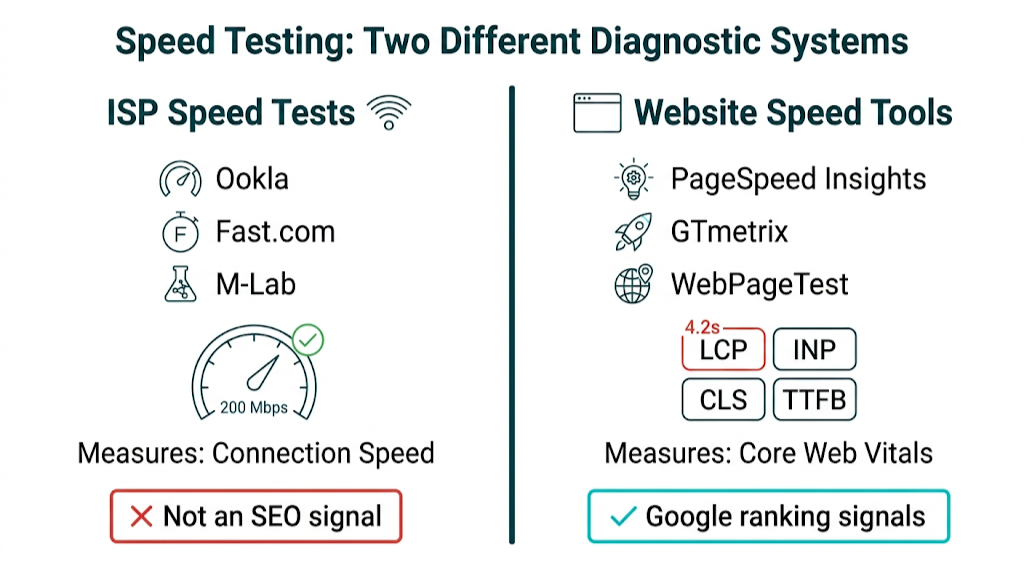

The bottom line: There is no single "best speed test site" for SEO professionals - there are the right tools for the right diagnostic questions. ISP speed tests (Ookla, Fast.com, M-Lab) measure your broadband connection. Website speed tools (PageSpeed Insights, GTmetrix, WebPageTest) measure how your pages perform for users and search engines. Confusing the two leads to bad technical decisions. This guide maps every major tool to the specific SEO problem it solves, explains the critical difference between lab data and real-user field data, and shows you how to turn speed test results into concrete Core Web Vitals fixes.

That gap between "a score" and "real users" is getting more expensive. Google's Page Experience signals are baked into ranking algorithms, Core Web Vitals are now a confirmed ranking factor, and mobile-first indexing means your site is judged by its mobile performance first. Getting your speed testing methodology wrong doesn't just waste time - it means you're optimizing for the wrong signals.

Why Your Speed Test Results Are Probably Wrong (And What to Do About It)

Here's a scenario that plays out constantly in agency workflows. A client reports their site feels slow. You run Ookla from your office, get 350 Mbps down, and conclude the connection isn't the issue. You run PageSpeed Insights, see a performance score of 72, and note a few image compression warnings. You send a report. The client's bounce rate doesn't improve. Rankings stay flat. The diagnosis failed.

What went wrong? Almost everything, diagnostically speaking.

The core misconception is this: a fast broadband connection and a good website performance score are measuring completely different things. Your 350 Mbps office connection has no bearing on what a user on a mid-range Android device with a 4G connection in Birmingham experiences when they load your client's page. And that PageSpeed Insights score of 72? That's lab data - a synthetic measurement run in a controlled environment, not a reflection of what real users see in the wild. As industry guides on website speed testing tools note, single scores mislead without contextual interpretation. That warning belongs above every SEO manager's monitor.

Those single scores mislead in three repeatable ways if you don't control the methodology.

First, test location bias. Most speed tests default to the nearest server. If your client's target audience is in Manchester but you're running tests from London, you're measuring a different network path. A site hosted in the US will look much faster from a US test server than it will to a real user in Edinburgh or Dublin.

Second, point-in-time variance. Network conditions fluctuate. A test run at 2pm on a Tuesday won't match one run at 8pm on a Friday. A single reading is a data point, not a trend. Run the same test five times, then take the median. Trust that, not the first number you see.

Third, the loaded vs. unloaded latency problem. Most ISP speed tests measure latency on an idle connection. Real users hit latency while the connection is already under load - streaming, downloading, or handling multiple simultaneous requests. This is where bufferbloat shows up, and it's a factor almost no SEO guide covers. We cover this in detail in the Waveform section below.

The diagnostic sequence is the fix. Different tools answer different questions. The rest of this guide tells you exactly which tool to reach for, and when.

What Google Actually Cares About: Speed Metrics That Affect SEO Rankings

Before you pick a speed test tool, get clear on what you're measuring for SEO. Google doesn't rank pages based on your ISP download speed. It ranks pages partly based on Core Web Vitals - user experience metrics that reflect how fast, stable, and responsive a page feels for real users.

The current Core Web Vitals set for 2026 has three primary metrics:

- LCP (Largest Contentful Paint): Time for the largest visible element to render (often a hero image or headline). Google's "Good" threshold is under 2.5 seconds.

- INP (Interaction to Next Paint): Time to respond to user input like clicks or taps. This replaced FID (First Input Delay) in March 2024. A "Good" INP score is under 200 milliseconds.

- CLS (Cumulative Layout Shift): Amount of unexpected layout movement during load. A "Good" CLS score is under 0.1.

There's also a fourth metric that sits outside the Core Web Vitals set but feeds straight into LCP: TTFB (Time to First Byte). TTFB measures the gap between the browser making an HTTP request and receiving the first byte from your server. Google's own TTFB optimisation guide calls "Good" TTFB under 800ms. Miss that, and LCP usually follows, because the browser can't render what it hasn't received.
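As a minimal sketch, the thresholds above can be turned into a small rating helper. The "Good" limits are the ones quoted in this section; the "Poor" boundaries (4,000 ms, 500 ms, 0.25, 1,800 ms) come from Google's published rating bands rather than from this article, and the names `THRESHOLDS` and `rate_metric` are purely illustrative.

```python
# Thresholds as (good_upper_bound, poor_lower_bound).
# LCP, INP and TTFB are in milliseconds; CLS is unitless.
# The "Poor" boundaries are not stated in the article; they follow
# Google's published rating bands.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
    "TTFB": (800, 1800),
}


def rate_metric(name: str, value: float) -> str:
    """Return 'Good', 'Needs Improvement' or 'Poor' for a measured value."""
    good_max, poor_min = THRESHOLDS[name]
    if value <= good_max:
        return "Good"
    if value <= poor_min:
        return "Needs Improvement"
    return "Poor"


# The 4.2-second LCP from the introduction, plus a healthy INP reading.
print(rate_metric("LCP", 4200))
print(rate_metric("INP", 180))
```

Feeding it the values you copy out of a report keeps the rating consistent from one audit to the next.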

That brings us to the field data vs lab data split. This is the piece most speed test roundups either gloss over or get wrong, and it matters because it changes what you trust when you're talking rankings. For a broader look at the key SEO metrics to track, this distinction between lab and field data applies across the board.

| Data Type | Source | Reflects Real Users? | Used in Google Rankings? |
|---|---|---|---|
| Lab data | Lighthouse, GTmetrix, WebPageTest | No - synthetic test | Indirectly (diagnosis) |
| Field data (CrUX) | Chrome User Experience Report | Yes - real user sessions | Yes - directly |

Lab data comes from a simulated page load in a controlled setup. It's repeatable. It's detailed. And it's great for diagnosing what's slowing a page down.

Field data comes from the Chrome User Experience Report (CrUX) - real page loads from real Chrome users, aggregated over a 28-day rolling window. When Google's ranking systems evaluate your Core Web Vitals, they use CrUX field data, not a Lighthouse lab score. So yes, a page can hit 95 in PageSpeed Insights' lab test and still post weak field data if real users (on slower devices, weaker connections, or both) see something else.

That "real users" point lines up with Google's crawl behavior, too. Google's mobile-first indexing documentation spells it out: Google crawls and evaluates the mobile version first. If you're benchmarking with desktop emulation, you're not measuring what Google is using for the primary evaluation path.

Here's the operating rule for SEO managers: use lab tools to find and fix issues, then validate progress in field data via PageSpeed Insights. Lab scores help you debug. Field data tells you what Google can actually use as a ranking signal. You need both, and you can't substitute one for the other.
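One way to keep the two data types apart in a report is to pull them from the PageSpeed Insights API and store them separately. The sketch below builds a request URL and splits a response into field and lab parts. The key names (`loadingExperience`, `lighthouseResult`, and the metric identifiers) reflect the v5 response format as we understand it, so check them against the API reference before relying on them. The `sample` dictionary is hand-written for illustration, not real measurement data.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url: str, strategy: str = "mobile") -> str:
    """Build a PageSpeed Insights API request URL (mobile by default)."""
    return f"{PSI_ENDPOINT}?{urlencode({'url': page_url, 'strategy': strategy})}"


def summarize(psi_response: dict) -> dict:
    """Separate the field (CrUX) values from the lab (Lighthouse) score."""
    field_metrics = psi_response.get("loadingExperience", {}).get("metrics", {})
    field = {name: data.get("percentile") for name, data in field_metrics.items()}
    lab_score = (
        psi_response.get("lighthouseResult", {})
        .get("categories", {})
        .get("performance", {})
        .get("score")
    )
    return {
        "has_field_data": bool(field),  # False when CrUX has too little traffic
        "field_p75": field,
        "lab_score": None if lab_score is None else round(lab_score * 100),
    }


# Hand-written sample shaped like an API response; not real measurements.
sample = {
    "loadingExperience": {
        "metrics": {
            "LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 4200},
            "INTERACTION_TO_NEXT_PAINT": {"percentile": 180},
        }
    },
    "lighthouseResult": {"categories": {"performance": {"score": 0.95}}},
}

print(build_psi_url("https://example.com/"))
print(summarize(sample))
```

The sample mirrors the case described earlier: a lab score of 95 sitting next to a field LCP of 4.2 seconds.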

The 8 Best Speed Test Sites Ranked for SEO Professionals in 2026

The tools below are ranked by SEO utility, not consumer convenience. We split them by primary function - some test your connection, some test your website, and one measures something most SEO teams still ignore. Each tool is here because it answers a specific diagnostic question that ties back to rankings or user experience. Use the table for orientation, then map each tool to the problem you're trying to isolate.

| Tool | Type | Primary SEO Use | Field Data? | Free? |
|---|---|---|---|---|
| Google PageSpeed Insights | Website | Core Web Vitals + CrUX field data | Yes | Yes |
| Cloudflare Speed Test | ISP + Network | Latency, jitter, responsiveness | No | Yes |
| GTmetrix | Website | Waterfall analysis, resource diagnosis | No | Freemium |
| Ookla Speedtest | ISP | Baseline connection speed | No | Yes |
| WebPageTest | Website | Advanced audits, multi-location | No | Yes |
| Fast.com | ISP | Quick, clean ISP check | No | Yes |
| M-Lab NDT | ISP | Open, auditable connection data | No | Yes |
| Waveform Bufferbloat Test | Network | Loaded latency, bufferbloat grade | No | Yes |

1. Google PageSpeed Insights - Best for Core Web Vitals & SEO Diagnosis

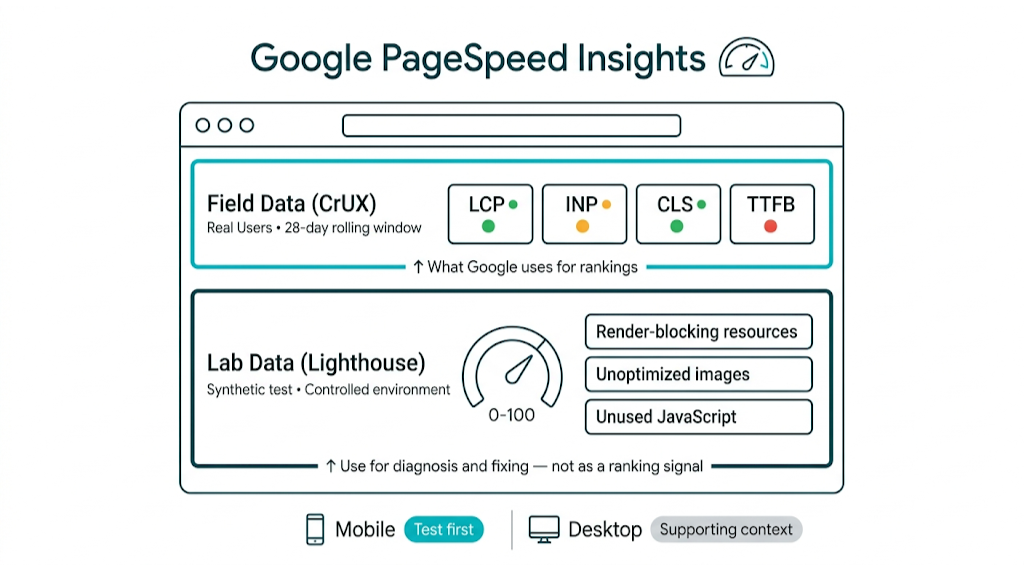

PageSpeed Insights (PSI) is the baseline for any SEO-driven speed audit. It's the only public tool that shows Lighthouse lab data and CrUX field data in the same UI - which helps us separate "fails a synthetic test" from "real users feel it."

The field data block at the top of PSI shows your LCP, INP, CLS, and TTFB based on real Chrome sessions from the last 28 days. If your site doesn't have enough traffic to populate CrUX, PSI calls that out. That gap matters, because it means Google may not have enough field data to apply a Core Web Vitals signal to the page.

CrUX gives you reality. Lighthouse gives you a controlled benchmark.

Below the field data, PSI runs a Lighthouse audit and outputs the lab score from 0-100 that everyone obsesses over. Use it, but keep it in its lane. The Opportunities and Diagnostics sections are the real work: they point to specific issues like render-blocking resources, unoptimized images, unused JavaScript, and slow server response. Each item links to Google's documentation so our devs can move fast without guessing.

PSI also splits testing by mobile and desktop. Run mobile first. Google uses mobile-first indexing, and a mid-market SaaS team spending $3k/month on technical SEO while ignoring the mobile PSI report is tuning for a crawl setup that no longer matters. If you want a structured approach to catching these issues systematically, our technical SEO checklist covers the full audit sequence.

Best used for: Checking Core Web Vitals field data, identifying specific LCP/INP/CLS issues, confirming fixes after implementation.

2. Cloudflare Speed Test - Best for Latency, Jitter & Responsiveness Data

Cloudflare's speed test (speed.cloudflare.com) belongs in an SEO manager's toolkit, even if most teams associate Cloudflare with CDN and DNS work instead of diagnostics. That assumption leads to missed signals.

The signal you get here is latency under load. Cloudflare reports unloaded and loaded latency alongside download and upload numbers. It also reports jitter, the variation in latency between packets. Jitter shows up as inconsistency. Users feel that inconsistency as random slow loads, even when averages look fine.

That jitter and loaded-latency split changes how we troubleshoot. Industry speed test comparisons rate Cloudflare highly for latency and jitter reporting, and call out that it measures responsiveness as a standalone metric. For SEO managers chasing TTFB issues, knowing whether you're dealing with steady high latency or spiky jitter changes the fix - a CDN helps with consistent latency, while jitter usually points to network congestion or ISP routing.
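To make the "averages look fine" point concrete, here is a small sketch that computes jitter as the mean absolute difference between consecutive latency samples. That is a common definition, but it is an assumption here: any given speed test may calculate jitter slightly differently. Both sample sets are invented.

```python
from statistics import mean


def jitter_ms(latencies_ms: list[float]) -> float:
    """Mean absolute difference between consecutive latency samples."""
    diffs = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    return mean(diffs) if diffs else 0.0


# Two made-up sample sets with the same 80 ms average latency.
steady = [80, 81, 79, 80, 80]
spiky = [30, 150, 40, 140, 40]

print(mean(steady), jitter_ms(steady))
print(mean(spiky), jitter_ms(spiky))
```

Both connections average 80 ms, but the second one swings by more than 100 ms between samples, which is the pattern users experience as random slow loads.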

Diagnostics also need to be frictionless. Cloudflare's test is ad-free and doesn't require an account, which helps when we're running checks across multiple client URLs in one session.

Best used for: Diagnosing latency and jitter issues, understanding connection responsiveness, supplementing ISP baseline data with more granular network metrics.

3. GTmetrix - Best for Waterfall Analysis & Identifying Slow Resources

GTmetrix fills one role better than anything else in performance audits: the waterfall chart. PageSpeed Insights tells you what's slow. GTmetrix shows you when each request fires, how long it takes, what blocks it, and what it blocks in return. When LCP drifts because of one file, one script, or one third-party request, the waterfall makes the culprit obvious.

The free tier runs Lighthouse-based scoring and offers a limited set of test locations. Paid tiers open up more locations, page-load video (useful for tracking CLS), and scheduled monitoring. Monitoring matters for agencies, because it turns one-off testing into a baseline we can defend month over month.

Third-party load is where GTmetrix earns its keep. It surfaces third-party impact analysis clearly, so you can see how much time comes from external scripts like analytics, chat widgets, and ad networks. A page stuck at 68 because a third-party tag manager adds 1.2 seconds is a different fix than a page stuck at 68 because the hero image ships uncompressed. GTmetrix makes that difference hard to miss.

One caveat stays true: GTmetrix is lab data only. No CrUX. Use it for diagnosis and validation after changes, not as a stand-in for a Google ranking signal.

Best used for: Waterfall analysis, third-party script impact, CLS video diagnosis, post-fix validation.

4. Ookla Speedtest - Best Widely-Recognized Baseline for ISP Connection Speed

Ookla Speedtest is the most widely recognized speed test tool in the world. That recognition helps and hurts. When a client says, "I ran a speed test and got 500 Mbps," they almost always used Ookla. We need the context behind that number - and the gaps in it - before we tie anything back to SEO.

Ookla measures download speed, upload speed, and ping, meaning latency, against the nearest available server. The test runs fast and the output is easy to repeat in a client deck. The mobile apps hold up, and historical results - available with a free account - let us track connection performance over time.

That baseline is where the debate starts. Technical communities, including a well-discussed thread on the r/homelab subreddit on most accurate internet speed tests, argue that Ookla can overstate results by favouring test traffic compared to normal browsing. The methodology uses parallel connections to saturate bandwidth, which often reads higher than what users see in real sessions. For an ISP baseline, that tradeoff works. For diagnosing why a specific page loads slowly, it doesn't map to the problem.

Page-load diagnosis needs geography too, and geography is where Ookla earns its keep. It has a global server network large enough to test from locations that line up with your audience. If a client's users sit in Australia and the site is hosted in the US, an Ookla run from an Australian server gives us a realistic latency baseline.

Best used for: Client-facing ISP baseline reports, geographic latency testing, confirming ISP-level issues before escalating to hosting/CDN diagnosis.

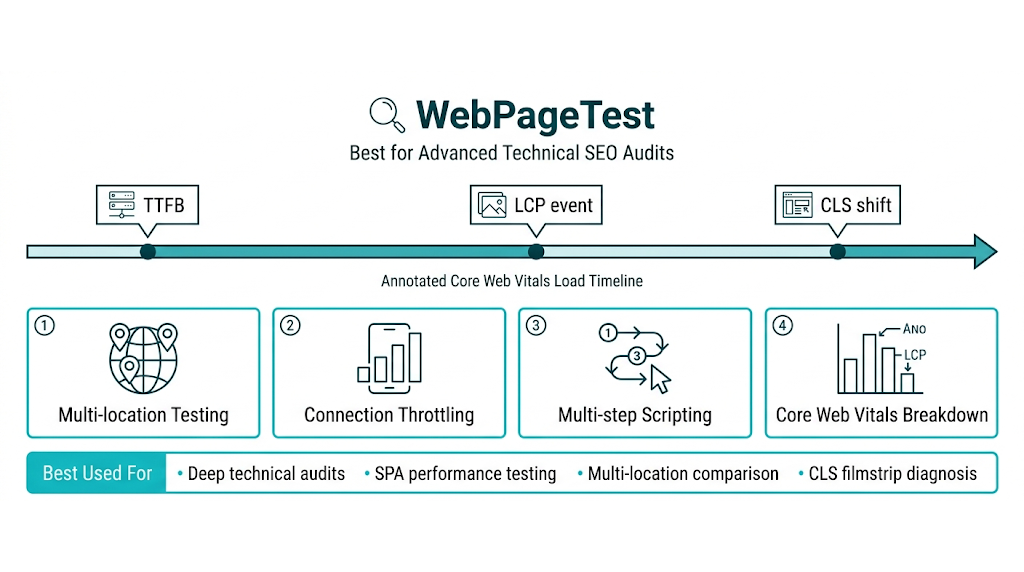

5. WebPageTest - Best for Advanced Technical SEO Audits

WebPageTest is where serious technical SEO audits live. It's free, open-source, and it exposes the kind of diagnostic detail most public tools don't. The UI isn't as polished as GTmetrix. The data is deeper.

That depth shows up in the features we use in audits: multi-step transaction testing for pages behind login flows, filmstrip view for a frame-by-frame timeline of render, connection throttling to simulate specific device and network conditions, and the ability to run tests from dozens of locations worldwide. The M-Lab NDT methodology informs some of the network measurement approaches WebPageTest uses for connection simulation.

The same depth carries into its Core Web Vitals breakdown. WebPageTest annotates the load timeline so we can see exactly when LCP, CLS, and INP events fire. That makes it a strong tool for catching the moment a layout shift happens, or for isolating the element that triggers the LCP event.

Agencies get a lot of mileage out of scripting. We can write test scripts that mimic real user behaviour - clicking a button, submitting a form, navigating to a second page - then measure performance across the whole sequence. SPAs need this. Without it, navigation performance goes untested.

Best used for: Deep technical audits, SPA performance testing, multi-location comparison, filmstrip analysis for CLS diagnosis.

6. Fast.com - Best for Quick, Ad-Free ISP Speed Checks

Fast.com is Netflix's speed test, and it acts like it. It loads fast, runs a download test on its own, and shows one big number. No ads. No upsells. No account.

That one-number readout is the point for SEO managers doing triage. If we need a quick ISP sanity check - confirm the client's connection works before we start blaming the site - Fast.com keeps the process simple. The "More info" button adds upload speed and latency, which covers a basic connection health check.

Recent speed test comparisons call out Fast.com's clean, ad-free experience as a standout. It tests on Netflix's own CDN infrastructure, which lines up well with the download speeds that matter for streaming and content-heavy sites.

Fast.com won't tell us anything about a site's performance. But for ruling out a connection problem in under 30 seconds, it's the best fit for that job.

Best used for: Quick ISP connection checks, ruling out connection issues before website-level diagnosis, client-side troubleshooting.

7. M-Lab NDT - Best for Open, Auditable Speed Data

The M-Lab NDT (Network Diagnostic Tool) is the speed test academic researchers, network engineers, and policy advocates use when they need numbers they can verify. M-Lab is a non-profit research consortium, and its NDT methodology is fully open-source and publicly documented.

Most SEO managers won't run M-Lab every day. That isn't the point. It fills one job: producing speed data that doesn't carry commercial bias. Ookla is owned by Ziff Davis. Fast.com is owned by Netflix. Both have business interests that could, at least in theory, shape how results get presented. M-Lab doesn't have that conflict, and the paper trail is there for anyone to audit.

NDT measures download speed, upload speed, and latency using a single TCP connection - a more conservative approach than Ookla's parallel-connection testing. It also tends to mirror what a lot of real sessions look like, where one connection gets most of the work. The results land in M-Lab's open dataset, so you can query historical data and compare performance across time and geography without guessing what changed.

That audit trail matters. For agencies working with clients in regulated industries, or for performance reporting that needs to hold up under scrutiny, M-Lab's methodology gives you something you can cite and defend. Teams looking for a fully managed approach to technical performance and link building can explore our managed service to see how we handle this at scale.

Best used for: Auditable, bias-free ISP speed data, research-grade network measurement, long-term connection performance tracking.

8. Waveform Bufferbloat Test - Best for Diagnosing Latency Under Load

This tool rarely shows up in competing SEO guides - and that's a blind spot.

Bufferbloat is a network condition where excessive buffering in routers and other network gear drives up latency and jitter during high bandwidth usage. In plain terms: a connection can test at 300 Mbps when it's idle, then feel slow the moment multiple things run at once. The buffer fills, packets queue, and responsiveness drops even though "speed" looks fine.

Waveform's Bufferbloat Test (available at waveform.com/tools/bufferbloat) measures latency while it runs download and upload load at the same time. It grades bufferbloat from A to F. Network performance comparisons recommend Waveform for this, calling it the most accessible option for diagnosing bufferbloat on consumer and small business connections.
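The core measurement is simple to sketch: compare latency on an idle connection with latency while the link is saturated. The snippet below does only that arithmetic on invented ping samples. It does not reproduce Waveform's A-F grading, since the grade boundaries aren't given here.

```python
from statistics import median


def latency_increase_ms(idle_ms: list[float], loaded_ms: list[float]) -> float:
    """How much median latency rises once the link is saturated."""
    return median(loaded_ms) - median(idle_ms)


idle = [18, 20, 19, 21, 20]        # pings on a quiet connection (invented)
loaded = [95, 240, 180, 310, 205]  # pings during a download/upload (invented)

print(latency_increase_ms(idle, loaded))
```

A jump from roughly 20 ms to roughly 200 ms under load is the kind of result that makes a nominally fast connection feel slow.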

That grade has real SEO implications. Sites with real-time features - live chat, video playback, dynamic content loading, interactive tools - take the hit first on connections with poor bufferbloat, even when a standard speed test reports high throughput. Users feel lag. Engagement metrics slide. And the experience signals Google uses in quality assessment move in the wrong direction.

Best used for: Diagnosing poor perceived performance on nominally fast connections, auditing sites with real-time or interactive features, identifying ISP-level issues that standard speed tests miss.

ISP Speed Tests vs Website Speed Tests: Which One Do SEO Managers Actually Need?

The answer is both - but they solve different diagnostic problems. Most SEO guides frame these as alternatives, then leave teams to stitch the workflow together. Competitor articles we've analysed usually cover either ISP speed tests or website speed tools. None ties both to specific SEO scenarios. This section does that.

ISP speed tests (Ookla, Fast.com, M-Lab, Cloudflare) measure connection capacity and network health. They answer practical questions: Is the client's broadband fast enough? Is the office network carrying high latency? Is the connection stable or jittery? Those checks matter when you're trying to rule out connection-level issues before you spend time on servers, CDNs, or front-end work.

Website speed tests (PageSpeed Insights, GTmetrix, WebPageTest) measure how a specific URL performs in a browser. They surface the issues that map to Core Web Vitals and ranking signals: What is this page's LCP? Which resources block rendering? Is a font loading late and causing layout shift? This is page execution, not just raw bandwidth.

Here's the diagnostic decision framework:

| Symptom | Start With | Then Use |
|---|---|---|
| Client reports site "feels slow" | Cloudflare Speed Test (check latency/jitter) | PageSpeed Insights (check field data) |
| Poor LCP score in GSC | PageSpeed Insights (identify LCP element) | WebPageTest (filmstrip to pinpoint timing) |
| High TTFB in PSI report | M-Lab NDT (rule out connection issues) | WebPageTest (server response timing) |
| CLS issues in Core Web Vitals report | GTmetrix (waterfall + video playback) | WebPageTest (filmstrip view) |
| Site feels slow despite good scores | Waveform Bufferbloat Test | Cloudflare Speed Test (loaded latency) |
| Client ISP complaint | Ookla (baseline) + Fast.com (quick check) | M-Lab (auditable data for ISP dispute) |
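If your team logs audits in a script or notebook, the table above can be captured as a simple lookup so every audit starts from the same playbook. This is only one possible encoding; the symptom keys and the `next_tools` helper are hypothetical names.

```python
# Symptom -> (start with, then use), copied from the table above.
DIAGNOSTIC_PLAYBOOK = {
    "site feels slow": ("Cloudflare Speed Test", "PageSpeed Insights"),
    "poor LCP in GSC": ("PageSpeed Insights", "WebPageTest"),
    "high TTFB in PSI": ("M-Lab NDT", "WebPageTest"),
    "CLS issues": ("GTmetrix", "WebPageTest"),
    "slow despite good scores": ("Waveform Bufferbloat Test", "Cloudflare Speed Test"),
    "client ISP complaint": ("Ookla + Fast.com", "M-Lab NDT"),
}


def next_tools(symptom: str) -> str:
    """Return the two-step tool sequence for a known symptom."""
    first, second = DIAGNOSTIC_PLAYBOOK[symptom]
    return f"Start with {first}, then use {second}."


print(next_tools("high TTFB in PSI"))
```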

This framework hinges on one shared metric: TTFB is the bridge metric between ISP-level and server-level performance. A high TTFB (above 800ms) points to one of three buckets: server processing time, weak hosting, or network latency between the user and the server. Google's TTFB optimisation guide breaks each cause down. Before you recommend a server upgrade or a CDN rollout, run an ISP-level test and confirm the network path isn't the bottleneck.

That bottleneck check still isn't the end of the story. ISP speed tests and lab-based website tests both produce synthetic data. The only tool that shows what real users experience - and what Google's ranking system evaluates - is PageSpeed Insights' field data section, which pulls from CrUX. Understanding how Google Search Console works alongside these tools gives you a more complete picture of how performance data feeds into your overall search visibility.

How to Run a Speed Test That Actually Gives You Useful SEO Data

Running a speed test correctly is a repeatable process, not a one-off action. Here's the process we recommend for SEO audits, built around the diagnostic framework above.

Step 1: Define the diagnostic question first.

Before you open any tool, write down what you're trying to find out. "The site is slow" isn't a diagnostic question. "The LCP on the homepage is above 2.5 seconds for mobile users in the UK" is. That question dictates the tool, the test location, and the device profile you simulate.

Step 2: Check field data before running any lab tests.

Open PageSpeed Insights and run the URL. Start with the field data section - ignore the lab score at first. If field data is available, you're looking at what real users see. Capture the LCP, INP, CLS, and TTFB values.

Those values drive your next step. If any land in the "Needs Improvement" or "Poor" range, you've got a confirmed issue. If field data isn't available because traffic is too low, call that out and move to lab data knowing you're working with estimates.

Step 3: Run the lab test from the right location.

Location changes results. For PageSpeed Insights and GTmetrix, the test location matters, and GTmetrix lets you pick the test server. Match it to your audience. A UK-focused site gets tested from a UK server, not a North American one.

WebPageTest gives you more control, so use it. Set both location and device profile, then run the Moto G4 (or a similar mid-range Android profile) to mirror the median mobile user. That lines up with how Google's Lighthouse runs mobile audits.

Step 4: Run multiple tests and use the median result.

Scores move because network conditions move. Run the same test three to five times and use the median result, not the best or worst. One PageSpeed Insights run can swing 5-10 points based on server load at the time.

That swing is exactly why industry guidance on contextual interpretation matters - one score without repeat runs doesn't hold up as a data point.
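A minimal sketch of that repeat-run habit: record each run, then report the median and the spread instead of any single number. The scores below are hypothetical.

```python
from statistics import median


def summarize_runs(scores: list[float]) -> dict:
    """Median plus the min-max spread of repeated runs of the same test."""
    return {
        "runs": len(scores),
        "median": median(scores),
        "spread": max(scores) - min(scores),
    }


# Five hypothetical performance scores for one URL.
print(summarize_runs([72, 68, 75, 66, 71]))
```

Reporting the spread next to the median also tells the client how much noise to expect before a change counts as a real improvement.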

Step 5: Test on the right connection profile.

Connection profiles also move scores. Lighthouse's default mobile test throttles the connection to simulate a slow 4G connection: about 1.6 Mbps down with a 150 ms round-trip time, plus a 4x CPU slowdown. That's by design. It reflects a constrained mobile user, not a fibre broadband line.

Don't "fix" your score by testing unthrottled. That games the test instead of fixing the site.
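Some back-of-the-envelope arithmetic shows how much an unthrottled test flatters a page. The sketch below uses a deliberately naive model (one round trip plus payload divided by bandwidth) and assumed numbers: a hypothetical 2 MB page, a constrained mobile profile of 1.6 Mbps with a 150 ms round trip, and a 350 Mbps office line with a 10 ms round trip. Swap in whatever profile your tool reports.

```python
def transfer_seconds(page_bytes: int, mbps: float, rtt_ms: float) -> float:
    """Naive estimate: one round trip plus payload size divided by bandwidth."""
    return rtt_ms / 1000 + (page_bytes * 8) / (mbps * 1_000_000)


PAGE_BYTES = 2_000_000  # a hypothetical 2 MB page

# Assumed profiles, for illustration only.
throttled = transfer_seconds(PAGE_BYTES, mbps=1.6, rtt_ms=150)  # constrained mobile
office = transfer_seconds(PAGE_BYTES, mbps=350, rtt_ms=10)      # fast office line

print(f"throttled: {throttled:.2f}s, office: {office:.2f}s")
```

Real page loads involve many requests, caching, and CPU time, so treat the output as an order-of-magnitude comparison rather than a prediction.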

Step 6: Document and track over time.

A single audit gives you a snapshot. Performance work needs a baseline and a trendline. GTmetrix's monitoring feature, Google Search Console's Core Web Vitals report, and WebPageTest's comparison feature let you track results across releases and over time. Pairing this with structured SEO reporting ensures your performance data gets communicated clearly to clients and stakeholders.

Baseline measurements come first. Set them before any significant site change - new theme, new plugin, new CDN configuration - so you can measure the impact with clean before-and-after data.

Step 7: Validate fixes with the same tool you used to diagnose.

Validation needs consistency. If PageSpeed Insights flagged an LCP issue and you've shipped a fix, validate in PageSpeed Insights. Don't diagnose in one tool and validate in another - the methods differ enough to produce false positives.

A fast test run without this process is just data collection. This process turns data into SEO work you can ship.

Speed Test Results to Core Web Vitals: How to Turn Data Into SEO Fixes

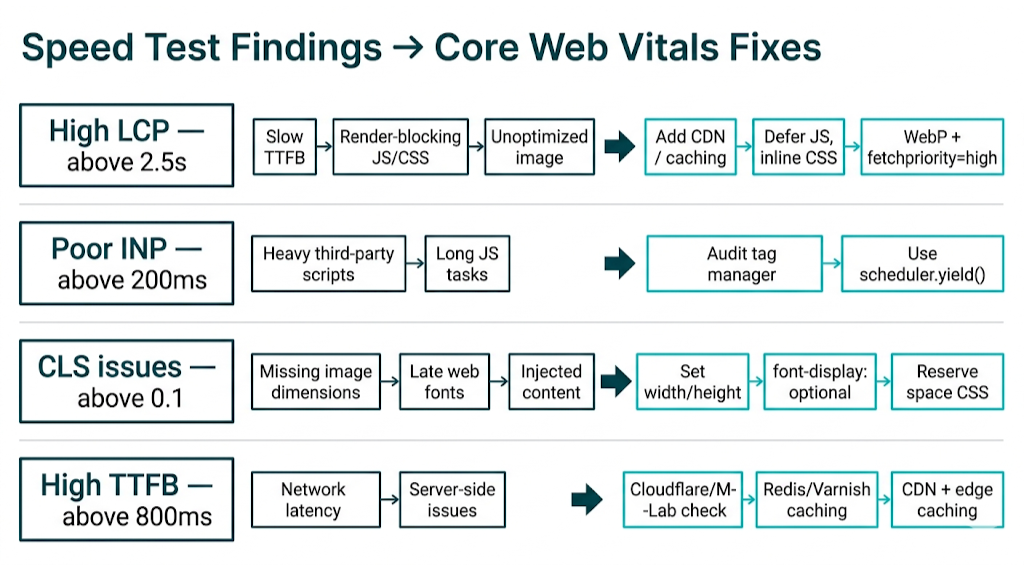

Collecting speed test data is the easy part. Knowing what to do with it is where most SEO managers stall. This section maps the most common speed test findings to specific, implementable Core Web Vitals fixes.

High LCP (above 2.5 seconds)

LCP is the Core Web Vital that fails most often, and the causes stay pretty consistent. When your PSI field data or GTmetrix waterfall shows a high LCP, work through these in order:

- Slow TTFB: If your server response time is above 800ms, LCP can't start until the server responds. Fixes include adding a CDN, upgrading hosting to a faster tier, implementing server-side caching, or moving to a closer server. Google's TTFB guide covers each of these in detail.

- Render-blocking resources: CSS and JavaScript files that load in the `<head>` block rendering. Defer non-critical JS, inline critical CSS, and remove unused stylesheets.

- Unoptimized LCP image: If the LCP element is an image (usually a hero banner), ensure it's served in WebP or AVIF format, sized correctly for the viewport, and includes a `fetchpriority="high"` attribute. Lazy-loading the LCP image is a common mistake that hurts LCP.
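The image checks above are easy to automate across a list of templates. The sketch below parses a page's HTML with the standard library and flags the two most common mistakes. It assumes the first `<img>` in the markup is the LCP element, which is often but not always true, so confirm the actual LCP element in PageSpeed Insights first. The class and function names are illustrative.

```python
from html.parser import HTMLParser


class HeroImageCheck(HTMLParser):
    """Collect warnings about the first <img> in the document.

    Assumption: the first image is the LCP element.
    """

    def __init__(self) -> None:
        super().__init__()
        self.seen_first_img = False
        self.warnings: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img" or self.seen_first_img:
            return
        self.seen_first_img = True
        attributes = dict(attrs)
        if attributes.get("loading") == "lazy":
            self.warnings.append("hero image is lazy-loaded")
        if attributes.get("fetchpriority") != "high":
            self.warnings.append('hero image lacks fetchpriority="high"')


def check_hero_image(html: str) -> list[str]:
    """Return a list of warnings for the first image in the given HTML."""
    parser = HeroImageCheck()
    parser.feed(html)
    return parser.warnings


print(check_hero_image('<img src="hero.webp" loading="lazy" width="1200" height="600">'))
```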

Poor INP (above 200ms)

INP replaced FID in March 2024 and is harder to diagnose because it measures the worst interaction across an entire page session, not just the first input. High INP almost always points to JavaScript execution blocking the main thread.

- Start with third-party scripts. One heavy analytics or advertising script can monopolize the main thread and push INP into the "Needs Improvement" range.

- Use Chrome DevTools' Performance panel to find long tasks (tasks exceeding 50ms) that block interaction handling.

- Break up long JavaScript tasks using `scheduler.yield()` or split code into smaller chunks.
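The arithmetic behind breaking up long tasks can be sketched outside the browser. The snippet below sums the portion of each task that runs past the 50 ms budget, which is the same accounting used for blocking time in lab tools. It works on task durations you might copy out of a DevTools trace; the numbers are invented, and it models the effect of yielding rather than performing it.

```python
LONG_TASK_MS = 50  # tasks longer than this count as "long tasks"


def blocking_time_ms(task_durations_ms: list[float]) -> float:
    """Sum the portion of each task that runs past the 50 ms budget."""
    return sum(d - LONG_TASK_MS for d in task_durations_ms if d > LONG_TASK_MS)


one_long_task = [400]  # a single 400 ms event handler
chunked = [50] * 8     # the same 400 ms of work, yielding every 50 ms

print(blocking_time_ms(one_long_task))
print(blocking_time_ms(chunked))
```

The total work is identical in both cases. What changes is how long the main thread is unavailable when a user taps or clicks.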

CLS issues (above 0.1)

Layout shift usually comes from the same few culprits:

- Images and embeds without explicit width and height attributes - the browser can't reserve space before the element loads, so the layout shifts when it arrives.

- Late-loading web fonts that trigger text reflow when they replace the fallback font. Use `font-display: optional` or preload critical fonts.

- Dynamically injected content (banners, cookie notices, ad slots) that pushes existing content down. Reserve space for these elements with CSS `min-height`.

GTmetrix's video playback feature is the fastest way to see which element shifts and when it happens during the load sequence.
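Once you know when each shift happens, it helps to see how individual shifts add up to the reported score. CLS is not a simple sum over the whole page load: shifts are grouped into session windows (less than 1 second between shifts, each window capped at 5 seconds), and the largest window total is reported. The sketch below implements that grouping on an invented timeline.

```python
def cumulative_layout_shift(shifts: list[tuple[float, float]]) -> float:
    """shifts: (timestamp_ms, shift_score) pairs sorted by time.

    Groups shifts into session windows (gap under 1 s, window capped at 5 s)
    and returns the largest window total.
    """
    best = 0.0
    window_total = 0.0
    window_start = prev_time = None
    for time_ms, score in shifts:
        new_window = (
            window_start is None
            or time_ms - prev_time >= 1000
            or time_ms - window_start >= 5000
        )
        if new_window:
            window_start, window_total = time_ms, 0.0
        window_total += score
        prev_time = time_ms
        best = max(best, window_total)
    return best


# Hypothetical page: a font swap and a cookie banner shift close together,
# then an ad slot shifts the layout more than three seconds later.
shifts = [(800, 0.04), (1200, 0.08), (4500, 0.05)]
print(round(cumulative_layout_shift(shifts), 2))
```

In this example the first two shifts fall into one window and together exceed 0.1, so fixing either of them matters more than fixing the later ad slot.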

High TTFB (above 800ms)

As noted above, TTFB is the gateway metric. A high TTFB cascades into a high LCP. The diagnostic sequence is:

- Rule out network latency using Cloudflare Speed Test or M-Lab NDT.

- If network latency is normal, the issue is server-side: slow database queries, unoptimized application code, or inadequate hosting resources.

- Implement server-side caching (Redis, Varnish, or a full-page cache plugin for CMS platforms).

- If the site is globally distributed, implement a CDN with edge caching to reduce the physical distance between users and server responses.
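The first two steps of that sequence can be approximated with simple arithmetic: subtract the network round trips from the measured TTFB and see what is left for the server. This is a rough sketch, not a measurement method. The default of three round trips assumes one each for TCP, TLS 1.3, and the HTTP request with DNS already cached, and both function names are hypothetical.

```python
def estimate_server_time_ms(ttfb_ms: float, rtt_ms: float, round_trips: int = 3) -> float:
    """Rough server-side share of TTFB after subtracting network round trips.

    round_trips=3 assumes one each for TCP, TLS 1.3 and the HTTP request,
    with DNS already cached. Adjust it for your setup.
    """
    return max(0.0, ttfb_ms - rtt_ms * round_trips)


def likely_bottleneck(ttfb_ms: float, rtt_ms: float) -> str:
    """Label whichever side accounts for the larger share of TTFB."""
    server_ms = estimate_server_time_ms(ttfb_ms, rtt_ms)
    network_ms = ttfb_ms - server_ms
    return "server" if server_ms > network_ms else "network"


print(likely_bottleneck(ttfb_ms=1200, rtt_ms=40))   # 1080 ms left for the server
print(likely_bottleneck(ttfb_ms=1200, rtt_ms=280))  # 840 ms spent on round trips
```

The same 1,200 ms TTFB leads to different recommendations: caching or hosting work in the first case, a CDN or a closer server in the second.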

The key principle across all of these fixes is that speed test data without a fix plan is just reporting. The value of running the right tools in the right sequence is that it points you to the intervention that will move the ranking signal - not just lift a score in a dashboard. Page speed is one of the technical factors Search Engine Journal identifies as directly influencing crawl efficiency and ranking potential. Technical performance improvements work best when paired with a strong backlink building strategy, because both are signals Google weighs when determining where your pages rank.

Frequently Asked Questions About Speed Test Sites and SEO

What is the most accurate internet speed test site in 2026?

For raw ISP connection speed, M-Lab NDT is the most methodologically rigorous pick. It uses an open-source, single-connection test, so the numbers run conservative but stay consistent and free of commercial incentives.

For a quick check that most teams recognize, Ookla is still the industry standard. But its parallel-connection method often reads a bit higher than what users see in real browsing.

For SEO work, Google PageSpeed Insights is the one that matters. It puts CrUX field data - the real user experience data Google uses in its ranking algorithm - next to the lab results for the same URL.

What is the difference between an ISP speed test and a website speed test?

An ISP speed test measures the capacity and condition of your broadband connection - download speed, upload speed, latency, and jitter. It won't tell you how any specific site behaves.

A website speed test measures how a URL loads in a browser. That includes which resources drag, what blocks render, and how the page performs against Core Web Vitals.

For SEO, website speed tests carry the weight. Connection tests come into play when you need to rule out local or network issues before you dig into hosting, server response, or page-level bottlenecks.

Does internet connection speed directly affect Google SEO rankings?

Not directly.

Google doesn't measure your server's internet connection speed. Google measures page performance for real users, pulled from Core Web Vitals field data in the Chrome User Experience Report - across devices and connection types.

A faster server connection still helps because it can reduce TTFB. Lower TTFB supports better LCP, and LCP is a ranking signal. The chain is simple: connection speed feeds page performance, and Core Web Vitals are what Google evaluates.

What is bufferbloat and why does it matter for website performance?

Bufferbloat shows up when network equipment buffers too much data during high-throughput periods, and latency spikes as a result - even on connections that look fast on paper.

Waveform's test makes the issue obvious. A connection that earns an "F" for bufferbloat might clock 200 Mbps download speed and still feel slow in normal browsing because packets sit in the router's queue instead of moving through.

This hits harder on sites with real-time UX - live chat, video, interactive tools. Users on high-bufferbloat connections get poor responsiveness even if your page loads quickly in a lab run. Waveform's Bufferbloat Test is the most accessible tool for diagnosing it.

What is a good website load time for SEO in 2026?

The better framing is Core Web Vitals, because Google doesn't rank on a single "load time" number.

For "Good" ratings, the thresholds are: LCP under 2.5 seconds, INP under 200 milliseconds, CLS under 0.1, and TTFB under 800ms. Google measures these on real user sessions via CrUX, not lab tests.

A fully loaded page time under 3 seconds remains a common mobile target. But for rankings, meeting the Core Web Vitals thresholds matters more than any single aggregate load time metric.

The Ahrefs guide to Core Web Vitals and SEO reinforces this point: chasing a single aggregate load time number misses the granular signals Google actually uses to evaluate page experience.
