SaaS websites break the rules most technical SEO guides are built around. A typical B2B website might have 50 to 200 pages with a clean content hierarchy. A mid-stage SaaS company, though, often wrangles thousands of dynamically generated URLs, JavaScript-rendered product pages, login-gated app sections leaking into crawl paths, and auto-generated integration pages that multiply faster than the marketing team can track.

The technical SEO strategy that works for an ecommerce store or a local services site won't cut it here. Get it wrong and the fallout is ugly: according to an Ahrefs study, 90.63% of all pages get zero organic traffic from Google. If a SaaS team is burning cash on content production and link building, a broken technical foundation means most of that spend returns nothing.

Bottom line: SaaS technical SEO is the discipline of ensuring search engine bots can efficiently crawl, render, and index the pages that drive pipeline, while keeping low-value or duplicate URLs out of Google's index entirely. This guide covers the crawlability, indexation, site architecture, and performance problems that plague SaaS websites, with implementation examples your engineering team can use. We've written it for SEO managers, marketing directors, and agency owners who need more than a checklist. Every section includes real configurations, code snippets, or worked scenarios.

That technical foundation doesn't lift rankings in a vacuum. It decides how much ROI every backlink, every piece of content, and every dollar spent on organic growth can return. A page that can't be crawled returns zero value from link building. A page competing with three canonical duplicates splits authority instead of compounding it.

We see this pattern across the B2B SaaS companies we work with. Technical SEO sits underneath everything else. Fix it first, or you'll keep paying for growth that never compounds.

Why SaaS Technical SEO Is a Different Beast From Standard SEO

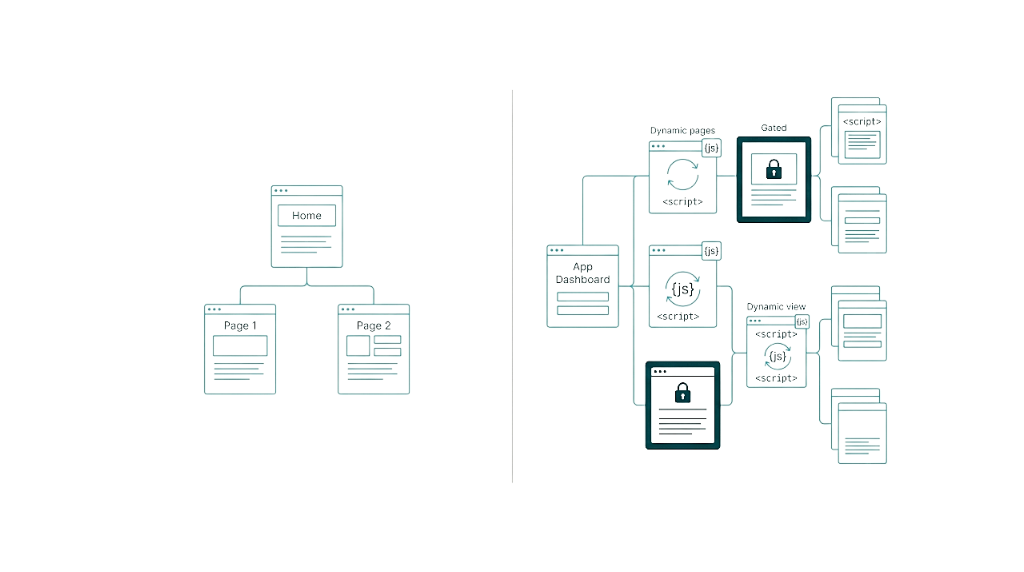

Most technical SEO guides assume a fairly static website. SaaS companies don't operate that way. The typical SaaS site is a living system: a marketing site, documentation hub, blog, help centre, and a web application, often on the same domain or split across subdomains. Each section creates its own SEO problems. Put them together and they stack.

That stack shows up fast in structure. Feature pages multiply as the product grows. Integration pages hit the hundreds if your product connects with other tools. Comparison pages (/vs/ URLs) target competitive keywords but run into thin-content issues when templated carelessly. Pricing pages change dynamically by user segment. And the logged-in product sits behind authentication, yet crawlable URLs still leak into Google's index through sloppy robots.txt rules or missing noindex directives.

The subdomain versus subfolder debate matters more here than in any other vertical. A SaaS company running its blog on blog.example.com, its docs on docs.example.com, and its marketing site on example.com splits domain authority three ways. Google treats subdomains as semi-separate entities. In practice, subfolders win for most teams because they keep link equity moving through one domain: example.com/blog and example.com/docs. We've seen B2B SaaS companies get measurable ranking improvements by migrating a blog from a subdomain to a subfolder, without adding new content or links.

That same consolidation problem shows up in rendering. Most modern SaaS products run on React, Angular, Vue, or similar frameworks. Those frameworks belong in the app, but they often bleed into the marketing site through shared component libraries or design systems. Then marketing pages rely on client-side JavaScript rendering for core content.

Google can render JavaScript, but it processes those pages in two phases: a first pass over the raw HTML, then a queued rendering pass. That second pass creates delays, burns crawl budget, and sometimes fails. Traditional businesses rarely hit this at scale. SaaS teams deal with it constantly.

These B2B SaaS SEO challenges don't stop at the technical layer. They shape how search engines read commercial intent in your URL structure, how they handle duplicate content created by pricing tiers and feature variants, and how they prioritise crawling across a site with ten times more URLs than a comparable non-SaaS business.

The SaaS Crawlability Problems That Kill Organic Growth Before It Starts

Crawlability is the baseline. If search engines can't access, parse, and follow links on your pages, nothing else matters. Not your content strategy. Not your link building. Not your on-page optimisation. SaaS sites also create crawl problems that don't show up on simpler, mostly static websites.

The first and most common issue is crawl budget waste. Google's crawl budget documentation breaks this into two parts: crawl rate limit (how fast Googlebot can crawl without hurting server performance) and crawl demand (how much Google wants to crawl based on perceived value). On a SaaS site with thousands of auto-generated integration pages, parameter-based URLs from filters or sorting, and app URLs leaking into the index, Googlebot burns requests on pages that won't rank. Every request spent on a /app/dashboard?user=12345 URL is a request not spent on your high-value /features/workflow-automation page.

Here's a practical scenario. A project management SaaS company has 150 integration pages, 80 feature pages, 200 blog posts, and 40 comparison pages. That's about 470 pages with real marketing value. But the site also exposes 3,000+ application URLs, 500 parameter variations from help center search, and 200 pagination URLs from the blog archive. Googlebot sees 4,170+ URLs competing for attention, and the pages that drive pipeline represent just 11% of the crawlable surface area.
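The arithmetic behind that scenario is worth making explicit, since the same calculation works for any site once the URL counts are known. A minimal sketch using the counts from the example above (the dictionary keys are just labels chosen for illustration):

```python
# URL counts taken from the scenario above; the key names are illustrative labels.
marketing_pages = {"integration": 150, "feature": 80, "blog": 200, "comparison": 40}
low_value_urls = {"application": 3000, "help_search_params": 500, "blog_pagination": 200}

marketing_total = sum(marketing_pages.values())
crawlable_total = marketing_total + sum(low_value_urls.values())
marketing_share = marketing_total / crawlable_total

# Prints: 470 of 4170 URLs (11.3%) drive pipeline
print(f"{marketing_total} of {crawlable_total} URLs ({marketing_share:.1%}) drive pipeline")
```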

The fix starts with robots.txt. Here's a configuration pattern we recommend for SaaS sites:

User-agent: *
Disallow: /app/
Disallow: /dashboard/
Disallow: /account/
Disallow: /api/
Disallow: /search?
Disallow: /*?sort=
Disallow: /*?filter=
Disallow: /*?ref=

Sitemap: https://www.example.com/sitemap-index.xml

These rules block the application, user account sections, internal API endpoints, and parameter-based URL variations. But robots.txt only stops crawling. It doesn't prevent indexing.
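Before deploying rules like these, it can help to check which URLs they actually match. The sketch below is a simplified matcher, not Googlebot's parser. It assumes Google-style wildcard semantics (an asterisk matches any run of characters, a trailing dollar sign anchors the end of the URL), and it ignores Allow rules and longest-match precedence. The test paths are illustrative.

```python
import re

# The Disallow rules from the configuration above.
DISALLOW_RULES = [
    "/app/", "/dashboard/", "/account/", "/api/",
    "/search?", "/*?sort=", "/*?filter=", "/*?ref=",
]

def rule_to_regex(rule: str) -> re.Pattern:
    """Translate one robots.txt path rule into a regex anchored at the path start."""
    anchored_end = rule.endswith("$")
    body = rule[:-1] if anchored_end else rule
    # Escape literal parts and turn each '*' into 'match anything'.
    pattern = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile("^" + pattern + ("$" if anchored_end else ""))

COMPILED_RULES = [rule_to_regex(rule) for rule in DISALLOW_RULES]

def is_disallowed(path_and_query: str) -> bool:
    """Return True if any Disallow rule matches the path plus query string."""
    return any(rx.match(path_and_query) for rx in COMPILED_RULES)

for candidate in [
    "/app/dashboard?user=12345",
    "/features/workflow-automation",
    "/blog?sort=date",
    "/blog?page=2&sort=date",
]:
    print(candidate, "->", "blocked" if is_disallowed(candidate) else "crawlable")
```

One thing this surfaces: /blog?page=2&sort=date stays crawlable, because /*?sort= only matches when sort is the first parameter. Adding a /*&sort= rule would cover that case.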

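Indexing is the part teams miss. If other sites link to your /app/ URLs (and they do), Google can still index those URLs based on external signals. Use a belt-and-braces setup: robots.txt to reduce crawling, plus noindex meta tags or X-Robots-Tag HTTP headers on any application pages that could be discovered through external links. One caveat: Googlebot only sees a noindex directive on URLs it is allowed to fetch, so for app URLs that are already indexed, let Google crawl them until the noindex has been processed before relying on the Disallow rule.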
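How the header gets attached depends on your stack. As one illustration, here is a minimal sketch of a WSGI middleware that adds X-Robots-Tag: noindex to every response under the application paths. The prefix list mirrors the robots.txt example, and demo_app and response_headers are hypothetical helpers that exist only to exercise the middleware.

```python
from typing import Callable, Iterable

# Path prefixes assumed to belong to the logged-in application.
PRIVATE_PREFIXES = ("/app/", "/dashboard/", "/account/", "/api/")

def noindex_private_paths(app: Callable) -> Callable:
    """WSGI middleware: add 'X-Robots-Tag: noindex' to responses under private prefixes."""
    def wrapped(environ: dict, start_response: Callable) -> Iterable[bytes]:
        path = environ.get("PATH_INFO", "")

        def patched_start(status, headers, exc_info=None):
            if path.startswith(PRIVATE_PREFIXES):
                headers = list(headers) + [("X-Robots-Tag", "noindex")]
            return start_response(status, headers, exc_info)

        return app(environ, patched_start)
    return wrapped

def demo_app(environ, start_response):
    """Stand-in application that returns the same page for every path."""
    start_response("200 OK", [("Content-Type", "text/html")])
    return [b"<h1>ok</h1>"]

def response_headers(path: str) -> dict:
    """Call the wrapped demo app for a path and return the headers it sent."""
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured.update(headers)

    body = noindex_private_paths(demo_app)({"PATH_INFO": path}, start_response)
    list(body)  # exhaust the response iterable
    return captured

print(response_headers("/app/settings"))
print(response_headers("/features/gantt-charts"))
```

Setting the header at the server or CDN layer would achieve the same result. The point is that the rule lives in one place instead of depending on every app template carrying a meta tag.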

The second major crawlability killer is redirect chains. SaaS companies rebrand, restructure, and pivot more than most businesses, and every change adds redirects. Over time, they stack: Page A redirects to Page B, which redirects to Page C, which redirects to Page D. Google follows up to about 10 redirects in a chain, but each hop wastes crawl budget and bleeds link equity. We routinely find SaaS sites with three to five hop redirect chains on their most important commercial pages. The fix is simple, but it takes discipline: audit redirects quarterly and flatten chains so every old URL points straight to the final destination.
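Flattening is mechanical once the redirect map is exported. A minimal sketch, assuming the redirects are available as a simple source-to-target dictionary (the paths below are made up):

```python
def flatten_redirects(redirects: dict[str, str], max_hops: int = 10) -> dict[str, str]:
    """Map every source URL straight to its final destination.

    Raises ValueError when a chain loops or exceeds max_hops.
    """
    flattened = {}
    for source, target in redirects.items():
        seen = {source}
        hops = 1
        # Keep following while the current target is itself redirected.
        while target in redirects:
            if target in seen or hops >= max_hops:
                raise ValueError(f"redirect loop or overlong chain starting at {source}")
            seen.add(target)
            target = redirects[target]
            hops += 1
        flattened[source] = target
    return flattened

# Hypothetical chain left behind by two rebrands and a restructure.
chain = {
    "/old-product": "/product",
    "/product": "/platform",
    "/platform": "/features/workflow-automation",
}
flat = flatten_redirects(chain)
print(flat)
```

Every key in the result points directly at /features/workflow-automation, so each old URL becomes a single-hop redirect.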

How to Audit Your SaaS Crawl Budget in Google Search Console

Google Search Console gives you enough data to spot crawl budget issues, as long as you pull the right reports and read them the right way.

Start with the Crawl Stats report under Settings. It shows three metrics over the past 90 days: total crawl requests, total download size, and average response time. Two patterns matter most for SaaS. One: a high crawl request count combined with a low number of indexed pages, which signals Googlebot is spending time on URLs that never make it into the index. Two: average response time above 500ms, which points to server performance issues that cap your crawl rate limit.

That crawl activity should line up with what you're choosing to index. Next, open the Pages report (formerly Coverage report) under Indexing. Filter by "Crawled - currently not indexed" and "Discovered - currently not indexed." These statuses show URLs Google has found but declined to index. On SaaS sites, this list often fills up with low-value URLs: paginated archive pages, tag pages, parameter variations, and application URLs.

Export the report and bucket each URL by type. If more than 30% of your crawled-but-not-indexed URLs are pages you want indexed, you're dealing with content quality or weak crawl signals. If most of them are junk URLs, you're looking at a crawl budget problem you can fix with tighter robots.txt rules and noindex directives.
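The bucketing step can be scripted against the exported URL list. This is a sketch under assumptions: the patterns and prefixes below are hypothetical and need adapting to your own URL scheme, and the 30% figure is the threshold from the paragraph above.

```python
import re
from collections import Counter

# Hypothetical patterns for junk URL types, checked in order.
JUNK_BUCKETS = [
    ("application", re.compile(r"^/(app|dashboard|account|api)/")),
    ("parameter", re.compile(r"\?")),
    ("pagination", re.compile(r"/page/\d+/?$")),
    ("tag", re.compile(r"^/blog/tag/")),
]
# Hypothetical prefixes for pages we do want indexed.
WANTED_PREFIXES = ("/features/", "/solutions/", "/vs/", "/integrations/", "/blog/")

def bucket(path: str) -> str:
    """Assign one path to the first matching junk bucket, else 'wanted' or 'other'."""
    for name, rx in JUNK_BUCKETS:
        if rx.search(path):
            return name
    return "wanted" if path.startswith(WANTED_PREFIXES) else "other"

def wanted_share(paths: list[str]) -> float:
    """Share of not-indexed URLs that are pages we actually want indexed."""
    counts = Counter(bucket(p) for p in paths)
    return counts["wanted"] / len(paths) if paths else 0.0

not_indexed = [
    "/app/projects/1",
    "/blog/page/3",
    "/help/search?q=gantt",
    "/blog/tag/pm",
    "/features/gantt-charts",
]
share = wanted_share(not_indexed)
verdict = "content quality / crawl signals" if share > 0.30 else "crawl budget"
print(f"{share:.0%} wanted -> likely a {verdict} problem")
```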

Cross-reference your Search Console data with a Screaming Frog crawl. Set Screaming Frog to respect your robots.txt, then compare what it finds with what Google reports crawling. The gaps matter. They show pages bots can access that shouldn't be accessible, or pages that should be accessible but got blocked by mistake. For a deeper look at common indexing issues and how to resolve them, the patterns we see on SaaS sites closely mirror those affecting other large, dynamic websites.
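The comparison itself is a pair of set differences. A minimal sketch, assuming both tools' URL lists have been exported and normalised to the same form (the sample paths are invented):

```python
def crawl_gaps(crawler_urls: list[str], google_urls: list[str]) -> dict[str, list[str]]:
    """Compare a robots-respecting crawl with the URLs Google reports crawling."""
    crawler, google = set(crawler_urls), set(google_urls)
    return {
        # Google reached these, the crawl did not: candidates for leaked or externally linked URLs.
        "google_only": sorted(google - crawler),
        # The crawl reached these, Google did not: candidates for accidental blocks or weak linking.
        "crawler_only": sorted(crawler - google),
    }

gaps = crawl_gaps(
    crawler_urls=["/features/gantt-charts", "/pricing", "/vs/competitor"],
    google_urls=["/features/gantt-charts", "/pricing", "/app/dashboard?user=12345"],
)
print(gaps)
```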

SaaS Indexation Issues: Why Your Best Pages Aren't Ranking

Getting crawled is step one. Getting indexed is step two. For SaaS teams, the gap between those two steps is where SEO stalls.

That gap shows up fast in Search Console as "Crawled - currently not indexed" on pages we actually need to rank. Googlebot hits the URL, processes what it sees, then decides it doesn't merit a spot in the index. On SaaS feature pages, the usual culprits are thin content, near-duplicate page sets, or JavaScript-heavy rendering that Googlebot doesn't execute the way we expect.

Feature pages trigger this all the time. A typical one ships with a headline, three sentences, a screenshot, and a CTA. To Google, that looks close to a doorway page: low text, low context, nothing that proves the page answers a real query. The page doesn't show depth, it doesn't cover the obvious follow-ups, and it gives Google almost nothing to classify. A feature page with 800+ words of real explanation, a use-case scenario, a comparison to alternative approaches, embedded video, and internal links to related documentation usually gets indexed. The thin version usually doesn't.

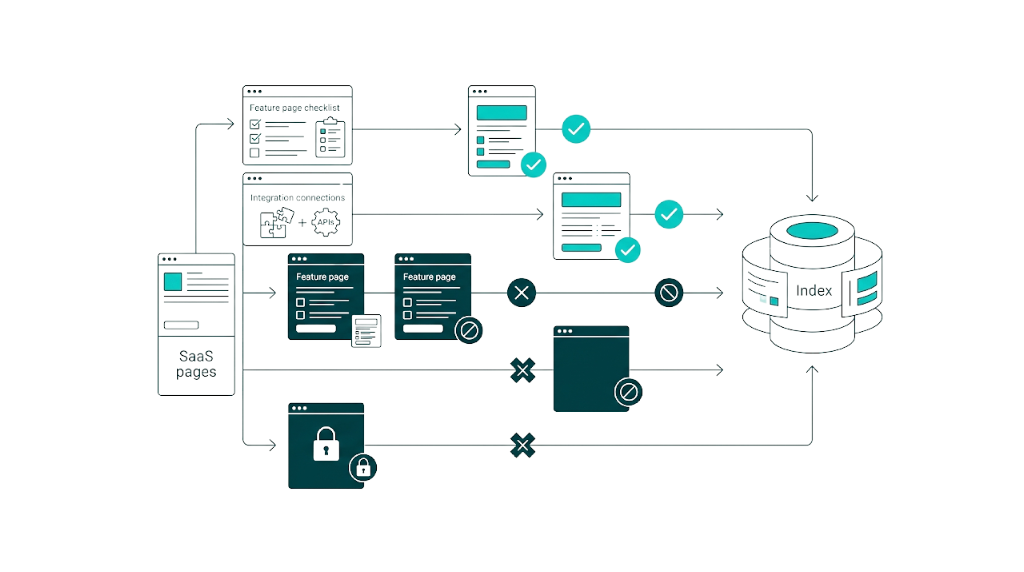

Auto-generated integration pages are the single biggest indexation trap for SaaS companies. If our product integrates with 200 tools and we publish an /integrations/[tool-name] page for each one, Google indexes only a slice. Look at those pages with a cold eye: they're templated, and the only unique elements are the partner name and logo. The body copy stays identical or close to it across all 200 URLs. Google recognizes the pattern and bins most of the set as duplicate or low-value.
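A rough way to see what Google sees is to measure how much body copy two integration pages share. The sketch below uses word-shingle Jaccard similarity, which is one common near-duplicate heuristic and not a description of Google's own method. The template text and the comparison page are invented for illustration.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping word n-grams (shingles)."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(max(len(words) - size + 1, 1))}

def jaccard(a: str, b: str) -> float:
    """Jaccard similarity of two texts' shingle sets (1.0 means identical)."""
    sa, sb = shingles(a), shingles(b)
    return len(sa & sb) / len(sa | sb)

# Hypothetical integration-page template where only the tool name changes.
TEMPLATE = (
    "Connect YourProduct with {tool} to sync tasks automatically. "
    "Set up the {tool} integration in minutes and keep every project "
    "up to date without manual work."
)
slack_page = TEMPLATE.format(tool="Slack")
jira_page = TEMPLATE.format(tool="Jira")
# Hypothetical hand-written page for comparison.
custom_page = (
    "This guide walks through routing Jira issues into sprint boards, "
    "including field mapping and permission setup."
)

print(f"templated vs templated: {jaccard(slack_page, jira_page):.2f}")
print(f"templated vs custom:    {jaccard(slack_page, custom_page):.2f}")
```

Even on this short template, swapping only the tool name leaves most shingles shared. On real pages with longer boilerplate the overlap would typically be higher still.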

That templated set still doesn't mean we should noindex everything. Some integration pages target keywords with real buying intent. The move is to prioritise: pick the 20 to 30 integrations with search volume by checking Ahrefs or Semrush for "[your product] + [integration name]" queries, then build those pages out properly. Spell out how the integration works, the exact use cases, the configuration steps, and real customer quotes. The remaining 170+ pages should either roll up into a single /integrations/ directory page or stay as internal navigation pages instead of organic landing pages.

Internal navigation is fine, but login-gated content creates another indexation problem unique to SaaS. If our help docs, knowledge base, or community forum sits behind authentication, Google can't index it. Sometimes that's the point. More often, teams gate content by default and block pages that could pull in high-intent research traffic. Audit the gated sections and sort content by job: if it helps a potential customer evaluate the product, publish it. If it only serves existing users, keep it behind the login.

Canonical Tags for SaaS: Solving Duplicate Content Across Feature and Integration Pages

Canonical tags tell Google which version of a page is the "master" copy when multiple URLs carry similar or identical content. On SaaS sites, canonical setups break all the time, and the damage stays quiet until rankings flatten.

Duplicate content usually starts with feature pages accessible through multiple URL paths. Picture a project management tool with a feature called "Gantt Charts." That feature might live at /features/gantt-charts, show up inside /solutions/project-managers (with the same Gantt Charts copy), and appear again on /use-cases/construction (also highlighting Gantt Charts). Without clean canonicals, Google sees three pages competing for the same theme and splits signals across all three.

The fix depends on how much of the page is shared. Each page should carry a self-referencing canonical pointing at its own URL, but only when the content on that page stands on its own. If the Gantt Charts section is substantially duplicated across the other URLs, pick the primary target page and canonicalize the others to it:

<!-- On /solutions/project-managers -->
<link rel="canonical" href="https://www.example.com/features/gantt-charts" />

<!-- On /use-cases/construction -->
<link rel="canonical" href="https://www.example.com/features/gantt-charts" />

<!-- On /features/gantt-charts (self-referencing) -->
<link rel="canonical" href="https://www.example.com/features/gantt-charts" />

Shared content also sneaks in through parameters. Parameter-based canonical issues hit SaaS pricing pages that change via query strings like ?plan=enterprise and ?billing=annual; those variants need canonicals pointing back to the base URL. UTM-tagged URLs should resolve to the clean URL too, which happens automatically when every page carries a self-referencing canonical that omits tracking parameters. Yet we still see SaaS sites where Google has indexed dozens of UTM-tagged homepage copies.
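The parameter handling described above can be sketched as a small normalisation function that a template could call when printing the canonical tag. The parameter names in NON_CANONICAL_PARAMS are assumptions based on the examples in this section. Any parameter that changes the page's actual content should stay in the canonical URL.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Parameters assumed not to change the indexable content of a page.
NON_CANONICAL_PARAMS = {"plan", "billing", "ref"}

def canonical_url(url: str) -> str:
    """Strip tracking and variant parameters (and any fragment) from a URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in NON_CANONICAL_PARAMS and not key.startswith("utm_")
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

print(canonical_url("https://www.example.com/pricing?plan=enterprise&billing=annual"))
print(canonical_url("https://www.example.com/?utm_source=newsletter&utm_medium=email"))
print(canonical_url("https://www.example.com/blog?page=2&utm_campaign=launch"))
```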

Canonicals only work when the rest of the site agrees. One critical rule: canonical tags are hints, not directives. Google ignores them when our other signals conflict. If the canonical points to Page A but internal links, the sitemap, and backlinks point to Page B, Google usually picks Page B. Align the signals and canonicals start doing their job.

SaaS Site Architecture: How to Structure Your URLs for Maximum Crawl Efficiency

Site architecture is where technical SEO meets commercial strategy. A SaaS site's URL structure isn't just a filing system for search engine bots. It's a map of the buyer journey, and getting it right decides which pages build authority, which pages rank, and how cleanly Googlebot moves through the site.

The best SaaS URL taxonomy maps directly to buyer journey stages. Here's the framework we recommend:

| URL Tier | Example Path | Buyer Journey Stage | Purpose |
|---|---|---|---|
| Homepage | / | All stages | Brand entry point, authority hub |
| Solution pages | /solutions/[persona-or-use-case]/ | Awareness | Attract top-of-funnel searches by persona |
| Feature pages | /features/[feature-name]/ | Consideration | Target mid-funnel feature-specific queries |
| Comparison pages | /vs/[competitor-name]/ | Decision | Capture high-intent competitive searches |
| Integration pages | /integrations/[tool-name]/ | Consideration/Decision | Target integration-specific queries |
| Pricing | /pricing/ | Decision | Convert bottom-of-funnel visitors |
| Blog | /blog/[post-slug]/ | Awareness/Consideration | Drive informational traffic and build authority |
| Resources | /resources/[type]/[slug]/ | Consideration | Gate content for lead generation |

This setup does a few jobs at once. It creates clean, predictable URL patterns that Googlebot crawls without friction. It groups related content under shared directory paths, which strengthens topical authority signals. And it gives our SEO team a simple rulebook for where new pages go as the site grows. That matters once you're shipping new pages every week.

Flat architecture beats deep nesting for SaaS sites. Keep it shallow. Every page should sit within three clicks of the homepage.

If blog posts live at /blog/category/subcategory/year/month/post-slug, that's too deep. Googlebot deprioritizes deeply nested pages, and link equity from the homepage thins out with every directory level. Flatten it to /blog/post-slug, then use internal linking and on-page categories for navigation instead of burying content in the URL.
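Click depth is easy to measure once you have an internal link graph, for example from a crawler export. A minimal sketch using breadth-first search from the homepage. The graph below is a made-up example, and the three-click limit is the guideline from this section.

```python
from collections import deque

def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks from the homepage to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical link graph: page -> pages it links to.
site_links = {
    "/": ["/features/", "/blog/"],
    "/features/": ["/features/gantt-charts/"],
    "/blog/": ["/blog/archive/"],
    "/blog/archive/": ["/blog/archive/2023/"],
    "/blog/archive/2023/": ["/blog/old-post/"],
}
depths = click_depths(site_links)
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
print(too_deep)
```

Pages that appear in your sitemap but never show up in the result are unreachable through internal links at all, which is a separate problem worth listing alongside the deep ones.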

The /vs/ directory needs special attention because it tends to drive revenue. Comparison pages convert because they match intent from searchers already evaluating alternatives. That same directory also causes thin content issues fast.

A /vs/ page that only shows a feature checklist in a table won't hold indexation. The /vs/ pages that rank and keep ranking run 1,500+ words, cover where each product wins by use case, and add original data or customer perspective. Keep the structure tight under /vs/[competitor]/, then link to those pages from features and solutions where they belong.

The subfolder approach extends to your documentation and blog, because where they live is part of the same architecture decision. If your blog sits on blog.example.com and docs sit on docs.example.com, you're splitting domain authority across properties. Moz's research on subdomains vs. subfolders indicates that subfolders typically consolidate authority more effectively for most sites.

A thorough SaaS link building strategy will flag this right away. Move both to example.com/blog/ and example.com/docs/. The migration takes careful redirect mapping and close monitoring, but the authority consolidation upside is well-documented and material.

Internal Linking Strategy for SaaS: Connecting Features, Solutions, and Blog Content

Internal links are the circulatory system of site architecture. They push link equity, clarify topical relationships, and steer Googlebot toward the pages that matter. For SaaS, internal links also connect informational blog content to commercial feature pages so traffic doesn't dead-end. Understanding the power of internal link building is essential before you start mapping out your hub-and-spoke structure.

The hub-and-spoke model works well for SaaS. Feature pages act as hubs. Blog posts, documentation pages, and use-case pages act as spokes.

Each spoke links back to the right hub using specific anchor text. A blog post about "how to improve team productivity with Gantt charts" should link to /features/gantt-charts with anchor text like "Gantt chart feature" or "our Gantt chart tool," not filler like "learn more" or "click here."

Those hub links need to ship with the content, not get added later. Every new blog post should include at least two to three internal links to relevant feature or solution pages. Every new feature page should pick up internal links from at least five existing blog posts within the first week of publication. No exceptions. Without those links, new pages sit isolated, build no internal authority, and take months longer to rank.
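A rule like this is only useful if someone checks it. The sketch below counts inbound internal links per page from a link graph and lists pages under a chosen minimum. The graph is invented, and the example uses a minimum of three only to keep the data small. The section above suggests five for new feature pages.

```python
from collections import Counter

def inbound_link_counts(links: dict[str, list[str]]) -> Counter:
    """Count distinct linking pages per target, ignoring self-links and repeats."""
    counts = Counter()
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts

def under_linked(pages: list[str], links: dict[str, list[str]], minimum: int = 5) -> list[str]:
    """Return the pages that have fewer than `minimum` inbound internal links."""
    counts = inbound_link_counts(links)
    return [page for page in pages if counts[page] < minimum]

# Hypothetical link graph: page -> pages it links to.
internal_links = {
    "/blog/gantt-guide/": ["/features/gantt-charts/", "/features/gantt-charts/", "/pricing/"],
    "/blog/planning-tips/": ["/features/gantt-charts/"],
    "/solutions/project-managers/": ["/features/gantt-charts/", "/features/time-tracking/"],
}
new_pages = ["/features/gantt-charts/", "/features/time-tracking/"]
needs_links = under_linked(new_pages, internal_links, minimum=3)
print(needs_links)
```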

Here's a practical linking hierarchy for a SaaS site:

- Homepage links to all solution and feature category pages

- Solution pages link to relevant feature pages and blog posts

- Feature pages link to related features, relevant integrations, and comparison pages

- Blog posts link to feature pages, solution pages, and other related blog posts

- Comparison pages link to feature pages and pricing

Breadcrumbs also pull weight here. Use breadcrumb navigation to reinforce URL structure and give Googlebot extra context on where each page sits. Add BreadcrumbList schema so Google can show breadcrumbs in search results and lift click-through rate.
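Because the recommended URL structure is predictable, BreadcrumbList markup can be generated from the path itself. A minimal sketch, assuming trailing-slash URLs and that a title-cased slug is an acceptable display name. In practice the names would more likely come from the CMS.

```python
import json
from urllib.parse import urlsplit

def breadcrumb_jsonld(url: str, home_name: str = "Home") -> dict:
    """Build BreadcrumbList JSON-LD from a URL's path segments."""
    parts = urlsplit(url)
    origin = f"{parts.scheme}://{parts.netloc}"
    segments = [segment for segment in parts.path.split("/") if segment]
    items = [{"@type": "ListItem", "position": 1, "name": home_name, "item": origin + "/"}]
    for position, segment in enumerate(segments, start=2):
        items.append({
            "@type": "ListItem",
            "position": position,
            # Assumption: slug words make a usable label, e.g. 'gantt-charts' -> 'Gantt Charts'.
            "name": segment.replace("-", " ").title(),
            "item": origin + "/" + "/".join(segments[: position - 1]) + "/",
        })
    return {"@context": "https://schema.org", "@type": "BreadcrumbList", "itemListElement": items}

breadcrumbs = breadcrumb_jsonld("https://www.example.com/features/gantt-charts/")
print(json.dumps(breadcrumbs, indent=2))
```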

Core Web Vitals for SaaS Websites: The Performance Benchmarks That Actually Matter

In March 2024, Google officially replaced First Input Delay (FID) with Interaction to Next Paint (INP) as the responsiveness metric in Core Web Vitals. Most SaaS technical SEO guides still reference FID. That advice is stale. INP measures latency across all interactions over a page's lifecycle, not just the first one, and it hits the JavaScript-heavy builds we see on a lot of SaaS marketing sites.

Here are the current Core Web Vitals thresholds, as documented on Google's web.dev:

| Metric | Good | Needs Improvement | Poor |
|---|---|---|---|
| Largest Contentful Paint (LCP) | ≤ 2.5s | ≤ 4.0s | > 4.0s |
| Interaction to Next Paint (INP) | ≤ 200ms | ≤ 500ms | > 500ms |
| Cumulative Layout Shift (CLS) | ≤ 0.1 | ≤ 0.25 | > 0.25 |
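For reporting, the table translates directly into a lookup. A small sketch that rates a measured value against those thresholds (LCP in seconds, INP in milliseconds, CLS unitless). The sample values are illustrative.

```python
# (good_limit, needs_improvement_limit) per metric, from the table above.
THRESHOLDS = {
    "LCP": (2.5, 4.0),    # seconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout shift score
}

def rate(metric: str, value: float) -> str:
    """Classify a Core Web Vitals measurement as Good, Needs Improvement, or Poor."""
    good_limit, needs_improvement_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "Good"
    if value <= needs_improvement_limit:
        return "Needs Improvement"
    return "Poor"

for metric, value in [("LCP", 2.1), ("INP", 350), ("CLS", 0.31)]:
    print(metric, value, "->", rate(metric, value))
```

Google assesses these metrics at the 75th percentile of real-user page loads, so feed this function p75 field values instead of a single lab run.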

INP is where SaaS sites fail most often. The pattern is consistent. SaaS marketing pages ship React, Vue, or Angular components pulled straight from the product design system, and those components come with event listeners, client-side state, and DOM updates that block the main thread at the worst possible moment.

A visitor clicks a pricing toggle. The browser waits 350ms to repaint because the JS bundle is chewing through logic and re-render work. That fails INP, and most dev teams miss it because they test on high-end laptops on clean networks.

INP failures require collaboration between SEO and engineering teams, because the fixes live in the frontend code.

Start with these priorities:

- Break up long JavaScript tasks. Any task that blocks the main thread for more than 50ms needs splitting into smaller chunks using requestIdleCallback or setTimeout so the browser can breathe.

- Lazy-load non-critical interactive components. A demo request modal or pricing calculator that isn't visible on page load shouldn't ship its JavaScript on first paint. Load it on scroll or on interaction with a trigger element.

- Reduce third-party script impact. Analytics, chat widgets, heatmap tools, and A/B testing scripts all fight for main thread time. Audit every third-party script, then defer or remove anything that doesn't serve the page's job.

LCP problems on SaaS sites usually come from hero images and above-the-fold content that depends on JavaScript rendering. If the hero loads a background image via a CSS class injected by JavaScript, the browser can't even start the download until the JS runs. Move critical images into standard <img> tags with fetchpriority="high" and loading="eager". Serve images in WebP or AVIF. Use responsive srcset so each viewport gets the right size.

That same above-the-fold area also drives most layout stability pain. CLS issues show up when pricing tables, testimonial carousels, or embedded videos load without reserved dimensions. Always set width and height on images and iframes, and use CSS aspect-ratio for containers that resize.

JavaScript Rendering Issues: Why Your SaaS Product Pages May Be Invisible to Google

Google processes JavaScript-rendered pages in two phases. First, it crawls the HTML and follows any links in the initial response. Second, it queues the page for rendering, executes the JavaScript, then processes the resulting DOM. According to Google's JavaScript SEO documentation, this second phase can be delayed by seconds, hours, or even days depending on rendering queue capacity. Search Engine Journal's coverage of JavaScript SEO best practices outlines how this two-phase process affects indexation timelines and what teams can do to reduce rendering delays.

That rendering queue is where CSR marketing pages get burned. On a client-side rendered build, Googlebot's first pass often sees an empty <div id="root"></div> with no content. The page content only appears after the rendering phase, and if rendering fails or gets delayed, the page doesn't make it into the index in any meaningful way.
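A quick way to approximate that first pass is to look at the raw HTML response before any JavaScript runs and count how much body text it contains. The sketch below does that with the standard library parser. It is a rough heuristic, not a reproduction of Googlebot's pipeline, and the two HTML samples are invented.

```python
from html.parser import HTMLParser

class InitialTextCounter(HTMLParser):
    """Collect words from raw HTML, skipping elements that carry no page copy."""

    SKIPPED_TAGS = {"script", "style", "noscript", "template", "title"}

    def __init__(self):
        super().__init__()
        self.words = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words.extend(data.split())

def initial_word_count(raw_html: str) -> int:
    """Count words present in the HTML before any JavaScript executes."""
    parser = InitialTextCounter()
    parser.feed(raw_html)
    parser.close()
    return len(parser.words)

csr_shell = (
    '<html><head><title>App</title><script src="/bundle.js"></script></head>'
    '<body><div id="root"></div></body></html>'
)
ssr_page = (
    "<html><body><h1>Gantt charts for project teams</h1>"
    "<p>Plan work on a timeline.</p></body></html>"
)
print(initial_word_count(csr_shell), initial_word_count(ssr_page))
```

A count near zero on a marketing URL is a strong hint that the page depends on client-side rendering for its core content.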

The solution hierarchy for JavaScript rendering issues:

- Server-Side Rendering (SSR): Render the full HTML on the server before sending it to the browser. This is the gold standard. Frameworks like Next.js (React) and Nuxt.js (Vue) make SSR implementation straightforward.

- Static Site Generation (SSG): Pre-render pages at build time. Ideal for SaaS marketing pages that don't change often. Gatsby, Next.js, and Hugo all support this approach.

- Dynamic Rendering: Serve a pre-rendered HTML version to bots while serving the JavaScript version to users. Google has described this as a "workaround" rather than a long-term solution, but it's a pragmatic fix when SSR isn't feasible in the short term.

- Hybrid Rendering: Use SSR or SSG for marketing pages and CSR only for the authenticated application. This is the pattern most mature SaaS companies adopt.

Validate the setup in Google's URL Inspection Tool in Search Console. Click "Test Live URL", then "View Tested Page", and review the rendered HTML Googlebot gets. Put that next to what a browser shows. If key content, headings, or internal links don't appear in the rendered version, the JavaScript rendering setup needs immediate attention.

XML Sitemaps for SaaS: What to Include, What to Exclude, and How to Structure Them

Your XML sitemap is a direct line to Google. It tells bots which URLs matter and when you last updated them. On SaaS sites with messy IA, a clean sitemap setup separates signal from noise fast.

Use a sitemap index file that references multiple specialised sitemaps. Google's sitemap documentation supports this approach, and SaaS teams need it because page types behave differently in crawl and indexation.

<?xml version="1.0" encoding="UTF-8"?>
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap>
    <loc>https://www.example.com/sitemap-pages.xml</loc>
  </sitemap>
  <sitemap>
    <loc>https://www.example.com/sitemap-blog.xml</loc>
  </sitemap>
  <sitemap>
    <loc>https://www.example.com/sitemap-integrations.xml</loc>
  </sitemap>
  <sitemap>
    <loc>https://www.example.com/sitemap-docs.xml</loc>
  </sitemap>
</sitemapindex>

What to include: Every URL you want indexed that returns a 200 status code, uses a self-referencing canonical tag, and isn't blocked by robots.txt or a noindex directive. That covers feature pages, solution pages, comparison pages, blog posts, key integration pages, and documentation pages.

What to exclude: Application URLs, login pages, parameter variations, paginated archives, tag pages with thin content, staging or preview URLs, and any page with a noindex tag. Putting noindexed URLs in your sitemap sends mixed signals and burns Google's processing time.

Update your <lastmod> dates only when the page content changes. Google has stated that it ignores <lastmod> values when they aren't reliable, so if every URL shows today's date, Google learns to ignore your lastmod field. Keep each individual sitemap under 50,000 URLs and 50MB uncompressed, as per Google's documented limits. If you're unsure whether your current setup is working, running through a complete technical SEO checklist will surface any gaps before they compound.
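The include and exclude rules above can be enforced in code when sitemaps are generated. A minimal sketch, assuming page metadata is available from a crawl or CMS export. The Page fields and sample records are hypothetical, and last_modified is expected to hold the date of the last real content change.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

@dataclass
class Page:
    url: str
    status: int
    canonical: str
    noindex: bool
    robots_blocked: bool
    last_modified: str  # ISO date of the last real content change

def is_sitemap_eligible(page: Page) -> bool:
    """200 status, self-referencing canonical, not noindexed, not blocked by robots.txt."""
    return (
        page.status == 200
        and page.canonical == page.url
        and not page.noindex
        and not page.robots_blocked
    )

def build_sitemap(pages: list[Page]) -> str:
    """Serialise the eligible pages as a <urlset> sitemap document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in filter(is_sitemap_eligible, pages):
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = page.url
        ET.SubElement(entry, "lastmod").text = page.last_modified
    return ET.tostring(urlset, encoding="unicode")

base = "https://www.example.com"
pages = [
    Page(f"{base}/features/gantt-charts/", 200, f"{base}/features/gantt-charts/", False, False, "2024-05-02"),
    Page(f"{base}/pricing/?plan=enterprise", 200, f"{base}/pricing/", False, False, "2024-04-11"),
    Page(f"{base}/app/login", 200, f"{base}/app/login", True, True, "2024-01-15"),
    Page(f"{base}/old-feature/", 301, f"{base}/old-feature/", False, False, "2023-09-30"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Only the feature page survives the filter. The parameter variant, the noindexed app page, and the redirected URL are all left out.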

Structured Data for SaaS: The Schema Markup Types That Drive Rich Results

Structured data helps search engines understand what a page is about and how it should appear. For SaaS companies, the right schema types can earn rich results that lift click-through rate in the SERP. Google's structured data documentation spells out what's supported and what Google expects to see.

The four schema types most relevant to SaaS websites:

1. SoftwareApplication. This is the main schema type for your product. Put it on your homepage or main product page.

{
  "@context": "https://schema.org",
  "@type": "SoftwareApplication",
  "name": "YourProduct",
  "operatingSystem": "Web",
  "applicationCategory": "BusinessApplication",
  "offers": {
    "@type": "Offer",
    "price": "49.00",
    "priceCurrency": "USD",
    "priceValidUntil": "2025-12-31"
  },
  "aggregateRating": {
    "@type": "AggregateRating",
    "ratingValue": "4.7",
    "ratingCount": "1250"
  }
}

2. FAQPage. Use this on pages with a real FAQ section. Feature pages, pricing pages, and comparison pages tend to fit well. FAQ rich results take up more space in the SERP when they appear, but since August 2023 Google has shown them mainly for well-known government and health sites, so treat the markup as a way to clarify page content rather than a guaranteed rich result.

3. Article and BlogPosting. Use these on blog content. They help Google parse publication dates, authors, and content type, which can affect how content shows up in Google News and Discover.

4. BreadcrumbList. Use this site-wide to reinforce your URL hierarchy in search results.

{
  "@context": "https://schema.org",
  "@type": "BreadcrumbList",
  "itemListElement": [
    {"@type": "ListItem", "position": 1, "name": "Home", "item": "https://www.example.com/"},
    {"@type": "ListItem", "position": 2, "name": "Features", "item": "https://www.example.com/features/"},
    {"@type": "ListItem", "position": 3, "name": "Gantt Charts", "item": "https://www.example.com/features/gantt-charts/"}
  ]
}

Organization schema also belongs on your homepage. Include your company name, logo, social profiles, and contact information. This feeds Google's Knowledge Panel and strengthens entity recognition for your brand.

Validate all structured data using Google's Rich Results Test before you ship it. Invalid markup is worse than no markup: Google ignores it, and in worse cases misleading markup can contribute to manual actions. And keep schema honest. If a feature page isn't an ecommerce listing, don't mark it as a "Product" and expect rich results.

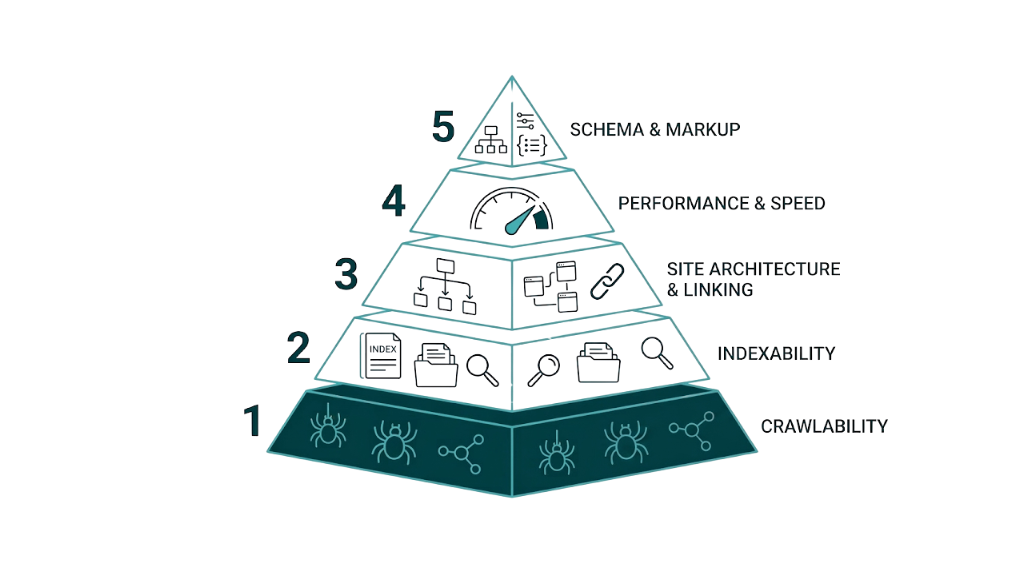

The SaaS Technical SEO Audit Checklist: A Prioritised Framework for Fixing What Matters First

This isn't a summary. It's a prioritised action framework.

Work through it in order, because each tier depends on the tier before it. Structured data won't move the needle if Googlebot can't crawl your pages.

Tier 1: Crawlability (Fix First)

- [ ] Audit robots.txt to block /app/, /dashboard/, /account/, /api/, and parameter URLs

- [ ] Add noindex tags or X-Robots-Tag headers to all application pages

- [ ] Flatten redirect chains to single hops (audit quarterly)

- [ ] Verify server response times are under 500ms in Search Console Crawl Stats

- [ ] Check that no critical marketing pages are blocked by robots.txt

Tier 2: Indexation (Fix Second)

- [ ] Review "Crawled - currently not indexed" URLs in Search Console and categorise by page type

- [ ] Audit canonical tags across all feature, solution, and integration pages

- [ ] Identify and consolidate or improve thin integration pages

- [ ] Ensure login-gated content that should drive organic traffic is publicly accessible

- [ ] Remove noindex tags from any pages you want ranking

Tier 3: Site Architecture (Fix Third)

- [ ] Migrate blog and docs from subdomains to subfolders if applicable

- [ ] Flatten URL depth to three clicks maximum from homepage

- [ ] Implement hub-and-spoke internal linking between blog content and feature pages

- [ ] Add breadcrumb navigation with BreadcrumbList schema

- [ ] Ensure every new page receives at least five internal links within one week of publication

Tier 4: Performance and Rendering (Fix Fourth)

- [ ] Test INP scores on all key landing pages using Chrome UX Report data

- [ ] Implement SSR or SSG for all marketing pages using JavaScript frameworks

- [ ] Verify rendered HTML in Search Console URL Inspection Tool for critical pages

- [ ] Optimise LCP by moving hero images to standard <img> tags with fetchpriority="high"

- [ ] Audit and defer non-critical third-party scripts

Tier 5: Sitemaps and Structured Data (Maintain Ongoing)

- [ ] Create segmented XML sitemaps by page type

- [ ] Exclude noindexed, redirected, and parameter URLs from sitemaps

- [ ] Implement SoftwareApplication, FAQPage, Article, and BreadcrumbList schema

- [ ] Validate all structured data with Google's Rich Results Test

Most SaaS SEO checklist guides skip the part that hurts: technical SEO acts as the multiplier for every other organic investment. A page with clean on-page optimisation and ten high-quality backlinks generates zero traffic if it can't be crawled or indexed.

That crawl and index layer shows up in link building work, too. We see it with the B2B SaaS companies we support through curated link placements. Teams that fix technical foundations first get more out of every link we build. Teams that skip technical SEO and go straight to link acquisition build on sand.

Fix the foundation. Then amplify with links. That's the sequence that compounds.

Frequently Asked Questions

What is SaaS technical SEO and how does it differ from standard technical SEO?

SaaS technical SEO focuses on crawlability, indexation, and performance issues that show up on software-as-a-service sites. The problem set looks different in SaaS: JavaScript-rendered marketing pages, auto-generated integration and feature pages that create duplicate content at scale, login-gated app areas bleeding into crawl paths, and the subdomain sprawl you get when blogs, docs, and the app sit on separate subdomains.

Standard technical SEO usually assumes a site that doesn't change much. SaaS technical SEO assumes the opposite: a site that ships pages fast, changes often, and has a web app attached to it.

How do I fix crawlability issues on a SaaS website with a gated app section?

Block the entire application directory in your robots.txt file with Disallow: /app/ (or whatever your application path is). But don't rely on robots.txt alone.

Add noindex meta tags or X-Robots-Tag: noindex HTTP headers across all application pages as a second layer. External links that point to app URLs can still lead to indexing even when robots.txt prevents crawling. That happens.

Keep Search Console on a short leash. Audit it regularly for any /app/ URLs showing up in the index, and file removal requests for anything that slips through.

Why are my SaaS feature pages not being indexed by Google?

This usually comes down to thin content, duplicate content across similar feature pages, or JavaScript rendering issues. If a feature page has fewer than 300 words of unique text plus a screenshot and a CTA button, Google often won't see enough value to index it.

Start in Search Console. Check the "Crawled - currently not indexed" report, then run the URL through the URL Inspection Tool to confirm Googlebot can render the full page. If the rendered HTML comes back empty or missing key sections, that's a JavaScript rendering issue and you need server-side rendering.

What is the best URL structure for a SaaS website?

Use a flat, subfolder-based structure that matches your buyer journey: /solutions/ for awareness-stage persona pages, /features/ for consideration-stage feature pages, /vs/ for decision-stage comparison pages, /integrations/ for tool-specific pages, /blog/ for informational content, and /docs/ for documentation.

Keep everything on the primary domain instead of splitting key sections across subdomains. Page depth matters too. Every page should be reachable within three clicks from the homepage.

How does JavaScript rendering affect SaaS SEO and how can I fix it?

Google processes JavaScript in two phases. First it crawls the raw HTML, then it queues the page for rendering. If your marketing pages depend on client-side JavaScript to show core content, Googlebot's first pass sees a blank page.

That second rendering step can take hours or days, and it sometimes fails. The clean fix is server-side rendering (SSR) or static site generation (SSG) across all marketing pages. Frameworks like Next.js and Nuxt.js make this straightforward.

Confirm the output, not the theory. Use Google's URL Inspection Tool and make sure the rendered HTML matches what users see in their browsers.
