Crawl budget is one of those technical SEO concepts that gets mentioned in audits, dropped into slide decks, and then left unexplained. Most SEO guides treat it as a checklist item: block your faceted navigation, fix your redirects, submit a sitemap. Done. But that surface-level treatment misses the mechanism entirely, and for teams managing sites with tens of thousands of URLs, missing the mechanism costs real indexing performance.

The bottom line: Crawl budget is the number of URLs Googlebot will crawl on your site within a given timeframe, determined by two interacting factors - your server's capacity to handle search engine requests and Google's assessment of how valuable your pages are. Sites with wasted budget see slower indexing, delayed ranking updates, and pages that simply don't get recrawled often enough. Fixing it means understanding both sides of that equation, and one of the most underused levers for increasing crawl demand is the same one RhinoRank clients use for rankings: building high-quality external links to priority pages.

This guide goes deeper than the standard overview. We'll cover how Google's internal crawl demand and capacity calculations work, identify the patterns that waste budget most, and give you a framework for diagnosing and fixing crawl inefficiency on complex sites. We'll also spell out the connection between link building and crawl frequency that Google's own documentation confirms but almost no SEO guide explains clearly.

What Is Crawl Budget? The Definition Google Actually Uses

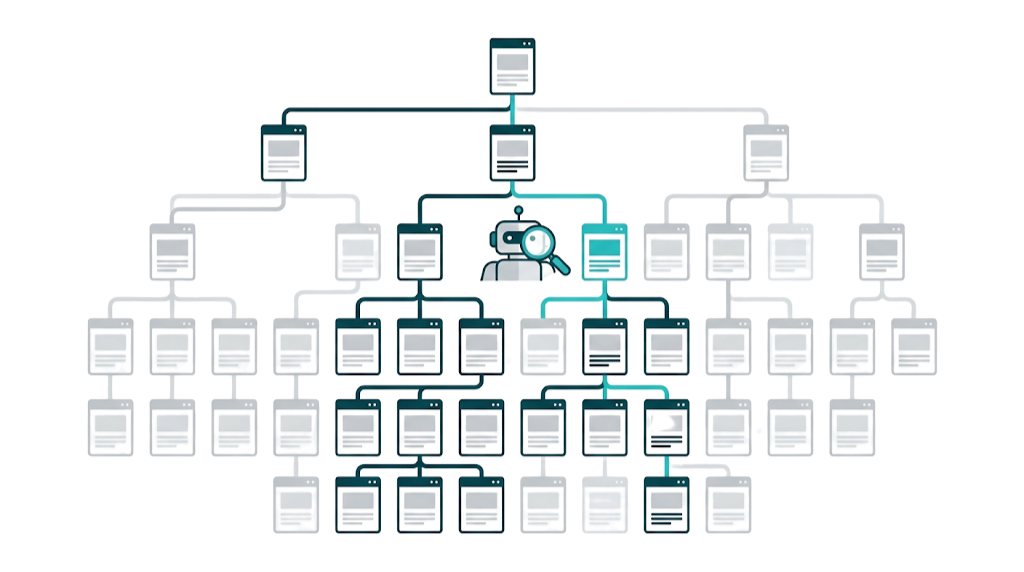

The term "crawl budget" doesn't appear in Google's early documentation as a formal concept. It emerged as shorthand, and Google Search Central eventually formalized it. According to Google's official crawl budget documentation, crawl budget is defined as "the set of URLs that Googlebot can and wants to crawl" on a given site.

That phrasing matters. "Can" and "wants to" do different jobs. They map to two internal systems: crawl capacity limit and crawl demand. Most SEO guides mash these into one fuzzy idea, which is why their advice ends up half-finished. You can tighten server response times and still see weak crawl coverage if your pages have low crawl demand. And you can have high-demand pages that Googlebot still hits less often because your server can't take the load without hurting user experience.

Crawl budget in 2025 also needs to account for how crowded server resources have become. Cloudflare's crawler traffic data shows that Googlebot traffic alone rose 96% from May 2023 to May 2024. AI crawlers, search engine bots, and monitoring tools compete for the same capacity. A site that handled bot traffic comfortably two years ago may now be throttling Googlebot without realising it, because Googlebot's own request volume has nearly doubled while other crawlers keep adding load on top. This isn't a legacy concern. It's an infrastructure problem affecting crawl budget right now.

Google's documentation also spells out where crawl budget matters most: sites with large page counts, sites that publish often, or sites with lots of URLs that read as low value. For a 500-page brochure website, crawl budget rarely limits growth. For a 200,000-page e-commerce catalogue or a news site publishing dozens of articles daily, it becomes an operational constraint.

Those two subsystems deserve separate attention.

Crawl Capacity Limit: How Your Server Speed Shapes What Google Crawls

Crawl capacity limit is Google's self-imposed ceiling on how aggressively Googlebot will crawl your site. Google doesn't want to overload your servers, so it watches how your infrastructure responds and adjusts crawl rate to match.

Two signals drive this: server response time and error rates. If your server returns pages quickly and consistently, Googlebot crawls harder. If responses slow down, time out, or throw 5xx errors, Googlebot backs off. Seobility's crawl budget optimization benchmarks put the target at 500ms or faster for server response times. Push past that threshold and you're giving up crawl capacity.

Google lets you influence this only a little. Search Console used to offer a crawl rate setting, but Google retired that tool in early 2024; if you urgently need Googlebot to slow down, Google's guidance is to temporarily return 500, 503, or 429 responses. Reducing crawl rate is almost always the wrong call anyway. The bigger win comes from fixing performance at the source: stronger hosting, CDN usage, and removing server-side bottlenecks that drag response times up.

Crawl capacity limit also isn't static. It moves with real-time server health. If your site slows down during peak hours, Googlebot often pulls back at the exact moment you're publishing new content. Running a technical SEO audit before tackling crawl budget gives you a clean picture of where server-side issues are hiding.

Crawl Demand: Why Google Decides Your Pages Are Worth Revisiting

Crawl demand is the other half of the equation, and it's where most practitioners underinvest. A fast server helps, but Googlebot still won't spend time on pages it doesn't see as worth revisiting. Google sets crawl demand using a handful of signals, and Google Search Central's crawl budget guidance spells them out.

Two signals matter most: URL popularity and staleness.

Staleness is simple. If a page changes often, Google recrawls it more often so the index doesn't drift.

URL popularity is the commercial bit. Google uses the number and quality of links pointing to a URL - internal and external - as a direct signal of importance. Popular URLs get crawled more.

Links sit right at the center of crawl budget. This isn't a theory. Google documents the behavior: pages with more high-quality backlinks receive higher crawl demand. A page buried in the architecture with zero external links might get a monthly crawl. Put ten strong referring domains behind that same URL and weekly recrawls become realistic, sometimes more. We'll come back to this later, because it's the most actionable (and most ignored) point in this whole topic.

Does Crawl Budget Actually Matter for SEO? (And for Which Sites)

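The answer depends on scale and complexity, and the threshold is easier to reach than most teams think.

Google's guidance frames crawl budget as a real concern for three groups: large sites (roughly 1 million or more unique pages) whose content changes moderately often, medium or larger sites (roughly 10,000 or more unique pages) whose content changes very rapidly, and sites with a large share of URLs sitting in Search Console's "Discovered - currently not indexed" status. Google describes those page counts as rough numbers. Below them, Googlebot often gets through the site in a reasonable window even without much tuning. But those URL counts show up fast in the real world. An e-commerce site with 50 product categories, 10 filter options each, and session-based URL parameters can generate hundreds of thousands of unique URLs without adding a single product. A media site with tag pages, author archives, date-based archives, and paginated series hits the same wall quickly.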

For a mid-market SaaS team running a 15,000-page documentation site, crawl budget isn't abstract. If Googlebot burns 40% of its crawl allocation on paginated archive pages and parameter variants, that's 40% less crawl capacity for new feature docs, updated pricing pages, and fresh blog content. Indexing slows down. Ranking changes land later. And in competitive SERPs where freshness matters, that delay costs money.

Sites that consistently need to worry about crawl budget:

- E-commerce sites with faceted navigation and filter parameters

- News and media sites publishing high volumes of content daily

- Large documentation or knowledge base sites with deep hierarchies

- Enterprise sites with multiple subdomains, international variants, and legacy URL structures

- Any site that has undergone multiple migrations without proper cleanup

Smaller websites aren't off the hook. A 2,000-page site still runs into crawl budget trouble if a big chunk of its URLs are thin, duplicated, or throwing errors. Googlebot doesn't just count pages - it reacts to quality signals as it crawls. If 60% of crawled URLs resolve to low-quality or duplicate content, crawl demand drops over time.

How Crawl Budget Affects Indexing Speed and Rankings

Crawl budget and indexing are tied together. A URL can't enter the index until Google crawls it. And if Google hasn't recrawled a page in a while, the index can lag behind reality - outdated content, missing new structured data, or even a version of the page that no longer exists.

This shows up in day-to-day operations. New pages on large sites sit unindexed for days or weeks when crawl allocation gets soaked up by low-value URLs. Updates - price changes, new schema markup, revised title tags - don't hit the SERP until the next crawl. And deleted pages that still get crawled keep draining budget that should go to live pages.

Rankings take the hit indirectly, but the impact is real. Google can only rank what it has indexed, and it can only index what it has crawled. If your priority commercial pages get recrawled monthly while a competitor's pages (with stronger link profiles) get recrawled daily, their updates reach Google's systems faster. In verticals where freshness drives rankings - news, product availability, local business information - that crawl frequency gap turns into a rankings gap. Understanding how link building helps SEO makes it easier to see why crawl frequency and ranking authority are two sides of the same coin.

Crawl frequency also compounds over time. Pages that get crawled often tend to build stronger quality signals inside Google's systems because Google keeps re-evaluating them. Ranking changes, good or bad, propagate faster. Pages that rarely get crawled sit outside that feedback loop for long stretches.

The connection to link building is direct here. A strong backlink to a priority page doesn't just pass PageRank - it tells Google the URL deserves more attention. Higher crawl demand means faster indexing of updates, quicker propagation of ranking signals, and steadier visibility in search results. We break down the mechanics in a dedicated section below.

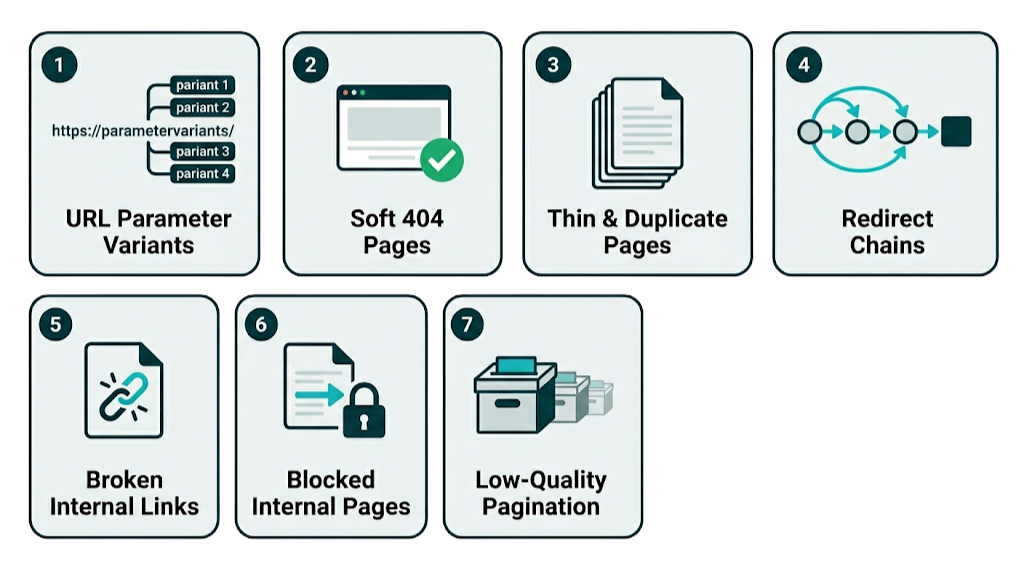

The 7 Biggest Crawl Budget Killers (And How to Diagnose Each One)

Most crawl budget waste comes from predictable, fixable patterns. On technical SEO audits, we keep seeing the same issues show up and soak up crawl activity. Below are the seven biggest crawl budget killers, ranked by severity based on what we run into most often.

1. URL parameters generating duplicate content

This is the biggest budget killer on e-commerce and CMS-driven sites. Sorting parameters (?sort=price-asc), session IDs (?sessionid=abc123), tracking parameters (?utm_source=email), and filter combinations all create unique URLs that serve near-identical or identical content. Googlebot treats each variant as a separate URL and will crawl it that way.

That adds up fast. On a site with 10,000 products and 8 filter options, the combinations can create millions of crawlable URLs.
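A quick way to see how much of this is duplication is to normalize the URLs Googlebot actually requested and count how many variants collapse onto each page. The sketch below is a minimal illustration using only Python's standard library; the `NON_CONTENT_PARAMS` set and the `shop.example` URLs are hypothetical, and on a real site that list should come from your own parameter audit.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters assumed NOT to change page content on this site.
NON_CONTENT_PARAMS = {"sessionid", "sort", "utm_source", "utm_medium", "utm_campaign"}


def normalize(url: str) -> str:
    """Drop non-content parameters and sort the rest so equivalent URLs match."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in NON_CONTENT_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def variants_per_page(urls: list[str]) -> Counter:
    """Count how many crawled URL variants map to each normalized URL."""
    return Counter(normalize(u) for u in urls)


crawled = [
    "https://shop.example/shoes",
    "https://shop.example/shoes?sort=price-asc",
    "https://shop.example/shoes?sessionid=abc123",
    "https://shop.example/shoes?utm_source=email&sort=price-desc",
    "https://shop.example/shoes?colour=red",
]
for page, count in variants_per_page(crawled).most_common():
    print(count, page)
```

Here four of the five requests collapse onto the plain `/shoes` URL, and only the colour filter produces a distinct listing. Sorting the surviving parameters matters too: `?a=1&b=2` and `?b=2&a=1` are the same page to a user but two URLs to a crawler.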

2. Soft 404 pages

A soft 404 is a page that returns a 200 OK HTTP status code but shows content that amounts to "page not found" - empty search results, "no products found" states, or placeholder pages. Googlebot still crawls them, hits low-value content, and burns budget on pages that shouldn't exist in the first place.

They're also harder to spot than hard 404s because the server reports success. The result: lots of crawl activity with nothing to show for it.
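Because the status code can't be trusted here, detection has to look at what the page actually says. A rough heuristic, sketched below, flags 200 responses whose visible text matches an empty-state phrase or is very short. The phrase list and the 50-word threshold are assumptions to tune against your own templates, and anything it flags is a candidate for review, not a confirmed soft 404.

```python
import re

# Hypothetical phrases that signal an empty or "not found" state on this site's templates.
EMPTY_STATE_PATTERNS = [
    r"\bno products found\b",
    r"\bno results\b",
    r"\bpage not found\b",
    r"\b0 items\b",
]
EMPTY_STATE_RE = re.compile("|".join(EMPTY_STATE_PATTERNS), re.IGNORECASE)


def looks_like_soft_404(status: int, body_text: str, min_words: int = 50) -> bool:
    """Flag 200 responses whose visible text reads like an error or empty page.

    Heuristic only: a match means "review this URL", not "confirmed soft 404".
    """
    if status != 200:
        return False  # real 4xx/5xx responses are already reported as errors
    if EMPTY_STATE_RE.search(body_text):
        return True
    return len(body_text.split()) < min_words  # very thin pages are also suspects


print(looks_like_soft_404(200, "Sorry, no products found for 'red sandals'."))  # True
print(looks_like_soft_404(404, "Page not found"))  # False
```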

3. Thin and duplicate content pages

Tag pages with one post. Author archive pages for authors who've published twice. Paginated pages beyond page 3 or 4 with almost no unique content. Boilerplate landing page variants. These pages dilute crawl budget because Google still spends time crawling and evaluating them.

Google doesn't just skip these - it crawls them, assesses them, and rolls that quality signal into its view of the site overall. Thin and duplicate pages also make it harder for the pages that matter to get recrawled at a steady pace.

4. Redirect chains

A URL that redirects to another URL that redirects to a third URL forces Googlebot to follow multiple hops before it reaches the final destination. Each hop uses crawl budget, and it also slows down discovery across the rest of the site.

Chains of three or more redirects do the most damage. They're also common on sites that went through a migration and never got a full redirect audit afterward.

5. Broken internal links (4xx errors)

Internal links pointing to 404 pages waste crawl budget on dead ends. They also signal sloppy site maintenance, which doesn't help a technical quality profile. A link audit will surface these broken internal links alongside any external backlink issues, making it a useful starting point before deeper crawl budget work.

A site with hundreds of broken internal links spends budget on URLs that return nothing useful. Fixing these tends to be unglamorous, but it pays back immediately.
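Once a crawler export gives you source/target link pairs and a status code per URL, grouping broken targets by the pages that link to them shows which fixes clear the most dead ends. A minimal sketch, assuming those two inputs; the data shapes and paths here are hypothetical, not any specific tool's export format.

```python
from collections import defaultdict


def broken_internal_links(
    links: list[tuple[str, str]], status: dict[str, int]
) -> dict[str, list[str]]:
    """Map each internal link target that returned a 4xx to the pages linking to it."""
    broken = defaultdict(list)
    for source, target in links:
        code = status.get(target)
        if code is not None and 400 <= code < 500:
            broken[target].append(source)
    return dict(broken)


links = [
    ("/", "/shoes"),
    ("/", "/old-sale"),
    ("/blog/post-1", "/old-sale"),
    ("/shoes", "/shoes/discontinued"),
]
status = {"/shoes": 200, "/old-sale": 404, "/shoes/discontinued": 410}

# Fix the targets with the most linking pages first.
for target, sources in sorted(
    broken_internal_links(links, status).items(), key=lambda kv: -len(kv[1])
):
    print(f"{target}: linked from {len(sources)} page(s): {sources}")
```

Ordering by the number of linking pages is a reasonable way to sequence the cleanup: one fixed template link can clear hundreds of dead ends at once.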

6. Blocked resources in robots.txt that are still linked internally

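If your robots.txt blocks a URL but your internal links still point to it, Googlebot will still discover it through those links. It won't fetch the blocked URL, but discovering and tracking URLs it isn't allowed to crawl still consumes resources, and every one of those links points Googlebot at a dead end instead of a crawlable page.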

That internal linking is the root problem here. And if external links point to the blocked URL, it can still show up in the index, which adds another layer of mess to clean up.
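To find those links before Googlebot does, you can check every internal link target against your robots.txt. The sketch below uses Python's standard `urllib.robotparser` with hypothetical rules and URLs. One caveat: that parser does plain prefix matching and applies the first rule that matches, so it won't reproduce Google's wildcard (`*`, `$`) handling or longest-match precedence. Keep the rules you test this way to simple path prefixes.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; Python's parser treats each path as a plain prefix.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
"""


def blocked_link_targets(
    links: list[tuple[str, str]], robots_txt: str, agent: str = "Googlebot"
) -> list[tuple[str, str]]:
    """Return (source, target) pairs where an internal link points at a disallowed URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [(src, dst) for src, dst in links if not parser.can_fetch(agent, dst)]


links = [
    ("https://shop.example/", "https://shop.example/search?q=boots"),
    ("https://shop.example/", "https://shop.example/shoes"),
    ("https://shop.example/shoes", "https://shop.example/cart/add?id=7"),
]
for source, target in blocked_link_targets(links, ROBOTS_TXT):
    print(f"{source} links to blocked {target}")
```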

7. Low-quality paginated series

Infinite pagination. Deep pagination on thin category pages. Paginated archives with no unique value beyond the first two pages. All of it pulls Googlebot into low-return crawl territory.

A blog with 800 posts paginated at 10 per page generates 80 archive pages, most of which offer minimal crawl value. Pagination like this doesn't look broken, but it quietly drains crawl attention away from pages that move traffic and revenue.

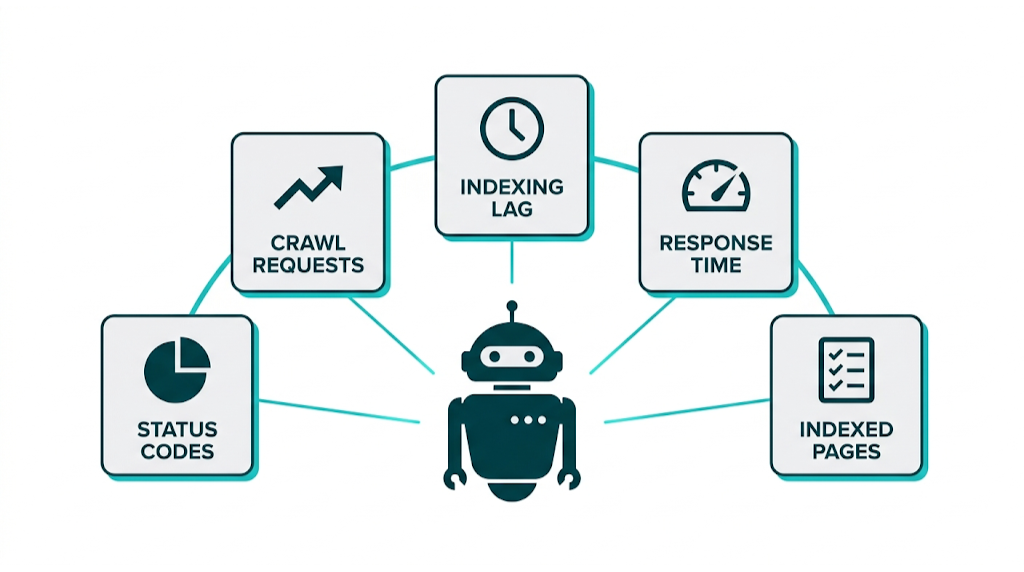

How to Use Google Search Console's Crawl Stats Report to Find Waste

Google Search Console's Crawl Stats report is the main diagnostic tool for crawl budget analysis. To access it: navigate to your GSC property, click Settings in the left sidebar, then select Crawl Stats. You'll see a dashboard showing Googlebot's crawl activity over the past 90 days, which gives you a clean baseline before you start chasing problems.

The key metrics to analyse:

- Total crawl requests: The total number of URLs Googlebot is trying to crawl

- By response: The split of 200s, 301s, 404s, and other status codes. A high share of non-200 responses points to budget waste

- By file type: If Googlebot spends a lot of budget on CSS, JS, or image files, confirm those resources need to be crawled

- Crawl response time: Average time in milliseconds. If it stays above 500ms, dig into server-side causes

- By Googlebot type: Separates Googlebot Smartphone, Googlebot Desktop, and other Google crawlers

The response code breakdown is where the waste shows up. If 20% of crawl requests return 404s, then 20% of the budget goes to dead pages. If 15% return 301 redirects, Googlebot is spending time on hops that you can usually collapse. That breakdown also sets up the next step: cross-reference it against sitemap submissions to spot gaps between what you're asking Google to crawl and what it's spending time crawling in reality. Pairing this with Google Search Console tips helps you get more out of the data GSC surfaces.

Using Server Log Analysis to See Exactly What Googlebot Is Crawling

GSC Crawl Stats gives us the summary. Server logs give us the receipts.

Server logs capture every Googlebot request, down to the exact URL, timestamp, status code, and (if your server is configured to log it) response time. That detail matters because it lets us pin crawl waste on specific URL patterns instead of guessing from aggregates.

Screaming Frog Log File Analyser and Semrush Log File Analyser are the two main tools for working with logs at scale. Both let us filter by user agent to isolate Googlebot, segment by URL pattern, and see which parts of the site burn the most crawl budget.

What to look for in the logs:

- URL patterns showing up hundreds or thousands of times - parameter variants, pagination loops, and other duplicates

- URLs returning 404 or 500 errors that Googlebot keeps hitting anyway

- Site sections pulling a lot of crawl attention without pulling their weight

- New or updated pages still not crawled even though they've been live for weeks

That gap - between "pages Googlebot crawls most" and "pages we want crawled" - is the crawl budget problem statement. Everything in our optimization strategy should point back to closing it.
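For a first pass without a dedicated tool, a short script can bucket Googlebot requests by site section and status class. This is a sketch that assumes the common Apache/Nginx "combined" log format; the sample lines are made up. It also trusts the user-agent string, which anyone can spoof, so for real analysis verify Googlebot requests with a reverse DNS lookup (genuine requests resolve to Google-owned hostnames such as googlebot.com) before counting them.

```python
import re
from collections import Counter, defaultdict
from urllib.parse import urlsplit

# Apache/Nginx "combined" log format; adjust the pattern if your format differs.
LOG_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<target>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def section_of(target: str) -> str:
    """Bucket a request target by first path segment, flagging parameterised URLs."""
    parts = urlsplit(target)
    first = parts.path.strip("/").split("/")[0]
    section = "/" + first if first else "/"
    return section + (" (params)" if parts.query else "")


def googlebot_summary(lines):
    """Count Googlebot hits per section, split by status class (2xx, 3xx, ...)."""
    summary = defaultdict(Counter)
    for line in lines:
        m = LOG_RE.match(line)
        if not m or "Googlebot" not in m["agent"]:
            continue  # skip malformed lines and other user agents
        summary[section_of(m["target"])][m["status"][0] + "xx"] += 1
    return summary


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Mar/2025:10:00:00 +0000] "GET /shoes?sort=price-asc HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2025:10:00:05 +0000] "GET /old-sale HTTP/1.1" 404 512 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [10/Mar/2025:10:00:07 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
for section, codes in sorted(googlebot_summary(sample).items()):
    print(section, dict(codes))
```

Sections tagged "(params)" with heavy crawl counts, or sections dominated by 3xx and 4xx responses, are the first candidates for the optimization work that follows.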

How Internal Linking and Link Building Directly Influence Crawl Budget

Most crawl budget guides skip this, and it's the part that ties to revenue.

Google's crawl budget documentation spells it out: crawl demand is influenced by URL popularity, and Google gauges that popularity using the same signals it uses for PageRank - mainly the number and quality of links pointing to a URL. That includes internal links and external backlinks. Strong link signals push Google to crawl a URL more often. Weak signals do the opposite.

Those link signals start inside the site. Pages buried deep in the architecture, with only a couple of internal links pointing in, end up with low crawl demand. We fix that by pushing priority pages closer to the surface with contextual links in body content, cleaner navigation, and hub-and-spoke content structures. The power of internal link building is often underestimated here - a well-structured internal linking strategy does as much for crawl demand as it does for PageRank distribution. Do that well and Googlebot revisits those pages more often.

That same URL popularity signal also comes from off-site links, and this is where most writeups stay vague. When a high-authority site links to a specific page, Google treats that URL as more popular. Popularity feeds crawl demand. Crawl demand drives recrawls. Recrawls mean changes hit the index faster. Price updates, schema tweaks, and refreshed copy show up sooner because Google comes back sooner.

For RhinoRank's clients, that makes link building to priority pages a dual-purpose investment. Yes, it builds PageRank and ranking authority. It also lifts crawl frequency on the pages that matter commercially. If a product category page picks up three strong referring domains from relevant industry sites, it won't just rank better - it gets crawled more often, so price changes, new product additions, and schema updates appear in search results faster.

That brings us back to planning. A link building campaign should prioritize pages that are commercially important and currently under-crawled. We pull Crawl Stats to spot high-value pages with low crawl frequency, then aim outreach and content placements at those exact URLs. It's tighter, easier to justify, and it beats generic, domain-only link building. Our Managed Service is built around exactly this kind of targeted, priority-page approach rather than scattershot domain-level campaigns.

That same prioritisation has to hold internally too. A strong internal linking structure keeps PageRank and crawl demand flowing to the pages we actually want indexed and refreshed. Orphaned pages - with zero internal links pointing to them - don't build crawl demand at all. After discovery, Google may not come back.

Crawl Budget Optimization: 8 Proven Techniques to Maximize Googlebot Efficiency

Crawl budget optimization isn't about reducing what Google crawls. It's about steering Googlebot toward the pages that drive outcomes. These eight techniques target the usual sources of crawl waste and the levers that lift crawl efficiency.

1. Audit and consolidate your URL parameter structure

Server logs tell the truth. Start there and pull a list of parameter-based URL variants that keep getting hit.

If parameters create duplicate pages, we consolidate with canonical tags back to the clean URL. If parameters have zero indexing value - session IDs and tracking parameters are the usual offenders - block them in robots.txt. Google deprecated the URL Parameter tool in Google Search Console, so canonicals and robots.txt do the work now.

2. Fix redirect chains and eliminate unnecessary redirects

Those parameter variants often sit alongside messy redirects. Run a redirect audit in Screaming Frog or any comparable crawler and flag chains immediately.

Any chain with three or more hops should collapse into a single, direct redirect. Then clean up internal links so they point straight to the final URL, not a redirect in the middle. That cuts crawl cost per visit and tightens PageRank flow.
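Collapsing chains is mechanical once you have the redirect map as source/destination pairs (exported from your server config or a crawler). A minimal sketch, assuming a simple dict of hypothetical paths: `resolve` follows a chain and counts hops, `long_chains` reports chains of three or more hops, `flatten` rewrites every rule to point at its final destination, and `rewrite_links` does the same for internal link targets. The `max_hops` limit is an arbitrary safety guard against loops.

```python
def resolve(redirects: dict[str, str], url: str, max_hops: int = 10) -> tuple[str, int]:
    """Follow redirects from `url`; return (final_url, hop_count).

    Raises ValueError on a loop or a chain longer than `max_hops`.
    """
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or runaway chain at {url}")
        seen.add(url)
    return url, hops


def long_chains(redirects: dict[str, str], min_hops: int = 3) -> list[tuple[str, str, int]]:
    """Report (source, final_url, hops) for chains with at least `min_hops` hops."""
    report = []
    for source in redirects:
        final, hops = resolve(redirects, source)
        if hops >= min_hops:
            report.append((source, final, hops))
    return report


def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Rewrite every rule to point straight at its final destination (one hop)."""
    return {source: resolve(redirects, source)[0] for source in redirects}


def rewrite_links(links: list[tuple[str, str]], redirects: dict[str, str]) -> list[tuple[str, str]]:
    """Point internal links at final URLs instead of redirecting ones."""
    return [(src, resolve(redirects, dst)[0]) for src, dst in links]


redirects = {
    "/old-shoes": "/shoes-2019",
    "/shoes-2019": "/shoes-2021",
    "/shoes-2021": "/shoes",
}
print(long_chains(redirects))
print(flatten(redirects))
```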

3. Resolve soft 404s systematically

Redirect cleanup usually surfaces thin URLs, and soft 404s are the worst of them. Pull soft 404s from GSC's Page indexing report (formerly the Coverage report), then confirm behavior in server logs.

A page that returns a 200 but shows "no results" needs a real decision. Either return a proper 404 or 410, redirect it to the closest relevant parent, or rebuild the page with content that earns indexing. The only thing we don't do is leave it as a 200 placeholder.

4. Implement a crawl-efficient XML sitemap

Once soft 404s are under control, the sitemap has to stop feeding Googlebot junk. Keep your XML sitemap tight: include only URLs you want indexed, and only those that return 200 status codes.

Drop paginated pages beyond page 1 unless they have clear, separate indexing value. Also exclude noindexed pages, redirected URLs, and parameter variants. A sitemap packed with 404s, redirects, or noindex pages sends Googlebot in the wrong direction and burns crawl budget. Keep validating against GSC's Sitemap report on a schedule, not once a quarter when something breaks.
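If your CMS can't filter the sitemap this way, it can be generated from crawl data instead. The sketch below assumes each crawled page is a dict with hypothetical `url`, `status`, `noindex`, and `canonical` fields. It keeps only 200-status, indexable, self-canonical URLs without query strings, which also drops parameter variants and `?page=N` pagination, and writes a sitemaps.org `<urlset>`.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def sitemap_eligible(page: dict) -> bool:
    """Keep only indexable, self-canonical, 200-status URLs without query parameters."""
    return (
        page["status"] == 200
        and not page["noindex"]
        and page["canonical"] == page["url"]
        and not urlsplit(page["url"]).query
    )


def build_sitemap(pages: list[dict]) -> str:
    """Render eligible pages as a sitemaps.org <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if sitemap_eligible(page):
            url_el = ET.SubElement(urlset, "url")
            ET.SubElement(url_el, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


pages = [
    {"url": "https://shop.example/shoes", "status": 200, "noindex": False,
     "canonical": "https://shop.example/shoes"},
    {"url": "https://shop.example/shoes?sort=price-asc", "status": 200, "noindex": False,
     "canonical": "https://shop.example/shoes"},
    {"url": "https://shop.example/old-sale", "status": 404, "noindex": False,
     "canonical": "https://shop.example/old-sale"},
    {"url": "https://shop.example/account", "status": 200, "noindex": True,
     "canonical": "https://shop.example/account"},
]
print(build_sitemap(pages))
```

`ET.tostring` returns the document without an XML declaration; when writing the file to disk, `ET.ElementTree(root).write(path, encoding="utf-8", xml_declaration=True)` adds one.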

5. Optimize server response times to stay below 500ms

A clean sitemap won't help if the server drags. Seobility's benchmarks put 500ms as the threshold for healthy crawl capacity; once you're over that, Googlebot throttles crawl rate to avoid stressing your infrastructure.

Fix what moves the needle:

- Serve static assets from a CDN.

- Add server-side caching.

- Tune slow database queries on dynamic templates.

- If baseline response time stays high, change hosting or architecture instead of patching around it.

Those changes need follow-through. Track crawl response time in GSC Crawl Stats after each release so you can attribute improvements to specific work, not guess.
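Crawl Stats reports an average, which can hide a slow tail. If your access logs record response time (many servers can be configured to), a short summary shows the median, the 95th percentile, and the share of Googlebot requests over the 500ms target. This sketch assumes you've already extracted Googlebot response times in milliseconds; the sample values are made up.

```python
from statistics import median, quantiles

TARGET_MS = 500  # the 500ms threshold cited above


def response_time_report(samples_ms: list[float]) -> dict[str, float]:
    """Median, 95th percentile, and share of requests slower than TARGET_MS."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    # method="inclusive" keeps estimates within the observed range for small samples.
    p95 = quantiles(samples_ms, n=20, method="inclusive")[-1]
    slow = sum(1 for t in samples_ms if t > TARGET_MS)
    return {
        "median_ms": median(samples_ms),
        "p95_ms": p95,
        "share_over_target": slow / len(samples_ms),
    }


googlebot_ms = [180, 220, 240, 260, 310, 350, 420, 480, 650, 900]
print(response_time_report(googlebot_ms))
```

Running it on the same log window before and after each release makes it easier to tie response-time changes to specific work.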

6. Restructure internal linking to surface priority pages

Server speed sets the ceiling, but internal linking controls demand. Map your commercial priority pages, then count internal links pointing to each one.

If a page has fewer than three internal links pointing to it, treat that as a warning sign: it's likely to read as low priority from a crawl demand standpoint. Add contextual links from high-traffic, high-authority pages. Adjust nav so priority pages sit at a shallower depth. Keep important URLs within three clicks of the homepage. Three clicks. No more.
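Both checks, inbound link counts and click depth, can be run over a crawl export of your internal link graph. The sketch below does a breadth-first search from the homepage to get each page's minimum click depth and counts distinct linking pages. The graph and priority list are hypothetical, and the three-link and three-click thresholds are this guide's rules of thumb, not limits Google publishes.

```python
from collections import Counter, deque


def click_depth(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Minimum clicks from the homepage to each reachable page (breadth-first search)."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def audit_priority_pages(links, priority_pages, max_depth=3, min_inlinks=3, home="/"):
    """Flag priority pages that are unreachable, too deep, or thinly linked."""
    depth = click_depth(links, home)
    # Count distinct linking pages, so repeated links from one page count once.
    inlinks = Counter(t for targets in links.values() for t in set(targets))
    issues = {}
    for page in priority_pages:
        problems = []
        if page not in depth:
            problems.append("not reachable from the homepage")
        elif depth[page] > max_depth:
            problems.append(f"depth {depth[page]} > {max_depth}")
        if inlinks[page] < min_inlinks:
            problems.append(f"{inlinks[page]} internal links < {min_inlinks}")
        if problems:
            issues[page] = problems
    return issues


links = {
    "/": ["/shoes", "/blog"],
    "/shoes": ["/shoes/running", "/blog"],
    "/blog": ["/blog/post-1", "/shoes"],
    "/blog/post-1": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/trail"],
    "/shoes/running/trail": ["/shoes/running/trail/waterproof"],
}
priority = ["/shoes", "/shoes/running/trail/waterproof", "/pricing"]
for page, problems in audit_priority_pages(links, priority).items():
    print(page, "->", "; ".join(problems))
```

A page that is both unreachable and has zero internal links, like `/pricing` here, is the orphan case: Googlebot can only find it through the sitemap or external links.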

7. Use robots.txt to block genuinely low-value URL patterns

Internal linking pushes priority URLs up. Robots.txt keeps the low-value patterns out of the crawl path.

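Admin pages, internal search results, login pages, and staging paths belong behind robots.txt disallows. Write specific rules for directories and patterns, not broad disallows that can trap real content. Before you ship it, test the new rules against URLs you know must stay crawlable, then check Search Console's robots.txt report (which replaced the old robots.txt Tester) to confirm Google fetched and parsed the file without errors, so you don't learn the hard way.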
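A cheap safeguard is a small regression test: list URLs that must stay crawlable and URLs that must be blocked, and check the proposed file against both before it ships. This sketch uses Python's `urllib.robotparser` with hypothetical rules and URLs; the deliberately broad `Disallow: /s` shows the kind of mistake it catches. Python's parser only does prefix matching with first-match precedence, so for Google's exact matching behaviour, Google's open-source C++ robots.txt parser is the closer reference.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical proposed robots.txt. "Disallow: /s" is deliberately too broad.
PROPOSED = """\
User-agent: *
Disallow: /admin/
Disallow: /login
Disallow: /s
"""

MUST_CRAWL = ["https://shop.example/shoes", "https://shop.example/sale/boots"]
MUST_BLOCK = ["https://shop.example/admin/orders", "https://shop.example/search?q=boots"]


def check_rules(robots_txt: str, agent: str = "Googlebot") -> list[str]:
    """Return a list of problems; an empty list means the rules pass."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    problems = [f"blocked but must stay crawlable: {u}"
                for u in MUST_CRAWL if not parser.can_fetch(agent, u)]
    problems += [f"crawlable but should be blocked: {u}"
                 for u in MUST_BLOCK if parser.can_fetch(agent, u)]
    return problems


for problem in check_rules(PROPOSED):
    print(problem)
```

Replacing `Disallow: /s` with `Disallow: /search` makes `check_rules` return an empty list: internal search stays blocked and the product and sale URLs become crawlable again.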

8. Build external links to under-crawled priority pages

Robots.txt reduces waste. External links increase crawl demand where you want it.

Backlinks don't just help rankings. They pull Googlebot toward specific URLs. Use server logs and GSC Crawl Stats to find priority pages that Googlebot under-crawls, then put those URLs at the front of your link building outreach. Curated Links placed on relevant, high-authority pages are particularly effective here because they deliver both crawl demand signals and lasting link equity to the exact URLs that need it most. Most crawl budget advice skips this because it's harder than a technical checklist, but the payoff lasts because link equity compounds over time.
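One way to build that shortlist is to join crawl counts from verified Googlebot log lines with a list of priority URLs and sort by how rarely each is crawled. A minimal sketch; the `priority_value` scores are a hypothetical stand-in for whatever commercial weighting your team uses (revenue share, conversion rate), and the sample data is made up.

```python
from collections import Counter


def under_crawled(
    googlebot_hits: list[str], priority_value: dict[str, float], days: int
) -> list[tuple[str, float, float]]:
    """Rank priority URLs by crawl rate (ascending), breaking ties by value (descending).

    Returns (url, crawls_per_week, value) tuples.
    """
    counts = Counter(googlebot_hits)
    rows = [(url, counts[url] * 7 / days, value) for url, value in priority_value.items()]
    return sorted(rows, key=lambda row: (row[1], -row[2]))


# 28 days of verified Googlebot hits (illustrative)
hits = ["/category/running"] * 28 + ["/category/trail"] * 2
priority_value = {"/category/running": 0.5, "/category/trail": 0.3, "/category/hiking": 0.2}

for url, per_week, value in under_crawled(hits, priority_value, days=28):
    print(f"{url}: {per_week:.1f} crawls/week, value {value}")
```

The URLs at the top of that list are the outreach targets: commercially important, but rarely revisited.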

Robots.txt vs. Canonical Tags vs. Noindex: Choosing the Right Crawl Control Tool

This is where most guides get it wrong. These three tools are not interchangeable. They have different effects on crawling and indexing, and the wrong choice creates bigger problems than the one you're trying to fix.

Here's the decision framework:

| Tool | Blocks Crawling? | Blocks Indexing? | Passes Link Equity? | Best Used For |
|---|---|---|---|---|
| Robots.txt Disallow | Yes | No | No | Preventing Googlebot from crawling low-value URL patterns entirely |
| Noindex meta tag | No | Yes | No | Allowing crawling but preventing indexing of pages with some internal value |
| Canonical tag | No | No (consolidates signals) | Yes (to canonical) | Consolidating duplicate or near-duplicate content signals to a preferred URL |

The critical misunderstanding: robots.txt does not prevent indexing. A URL blocked in robots.txt can still appear in Google's index if external links point to it. Google will index the URL but won't crawl the content, which leaves you with a thin, low-information listing. If a URL needs to come out of the index, noindex is the right tool - and Google has to crawl the page to see the noindex directive.

Canonical tags don't block anything either. They consolidate signals. Use them when multiple URLs serve the same or near-same content and you want one URL to receive credit. Canonicals don't save crawl budget in a direct way - Googlebot may still crawl variants - but they prevent index fragmentation and keep link equity flowing to the preferred URL.

The decision tree:

- Want to stop Googlebot crawling a URL entirely (and don't care if it appears in index)? Use robots.txt

- Want to allow crawling but prevent indexing? Use noindex (and allow crawling)

- Want to consolidate duplicate content signals without blocking access? Use canonical tags

- Want to remove a URL from the index completely? Use noindex and submit a URL removal request

Crawl Budget for E-Commerce Sites: Managing Faceted Navigation and Parameter URLs

E-commerce sites run into crawl budget limits faster than almost any other site type. Faceted navigation - the filter and sort systems that let users narrow product listings by colour, size, price, brand, and dozens of other attributes - produces huge volumes of URLs from a fairly small product catalogue.

It adds up fast.

Take a clothing retailer with 5,000 products across 20 categories. Add 8 filter dimensions with an average of 10 options each, and the combinatorial possibilities jump into the millions. Googlebot can't tell which combinations map to distinct, useful pages and which are parameter-built duplicates. If we don't spell it out, it crawls broadly and burns budget on filter combinations no one searches for, many of which return pages that look almost identical to the base category. The same principles that apply to ecommerce link building apply here: prioritize the pages that drive commercial outcomes and make sure Googlebot can find and recrawl them efficiently.
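The arithmetic is worth seeing once. Assuming each filter is either unset or set to exactly one option, every filter with k options contributes k + 1 states, and the states multiply across filters; if users can tick several options within one filter, each filter contributes 2^k instead. A short sketch of both cases:

```python
from math import prod


def facet_states_per_category(options_per_filter: list[int], multi_select: bool = False) -> int:
    """Count distinct filter states for one category listing.

    Single-select: each filter is unset or set to one option -> (k + 1) states.
    Multi-select: any subset of a filter's k options -> 2 ** k states.
    """
    if multi_select:
        return prod(2 ** k for k in options_per_filter)
    return prod(k + 1 for k in options_per_filter)


filters = [10] * 8  # 8 filter dimensions, 10 options each (the example above)
categories = 20
per_category = facet_states_per_category(filters)
print(f"{per_category:,} filter states per category")
print(f"{per_category * categories:,} across {categories} categories")
```

Even under the single-select assumption, that's 214,358,881 filter states per category before sort orders or pagination are added. These are upper bounds: Googlebot only reaches the combinations your templates actually expose as links.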

Most teams handle this with a mix of tactics:

- Canonical tags on faceted URLs pointing to the base category page (or to a specific faceted page if it has real search demand - "men's red running shoes" may have search volume worth targeting)

- Robots.txt blocks on parameter patterns that add no unique value (session IDs, sort parameters, pagination beyond a defined depth)

- Noindex directives on faceted pages that help users navigate but shouldn't show up in search results

That mix only works when we apply it with intent. Not all faceted URLs are junk. A well-trafficked e-commerce site often finds that specific filter combinations - brand + category, for example - pull real search demand and deserve indexing. The goal isn't to block all faceted navigation. It's to choose which combinations earn crawl budget and index placement, then block everything else.
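That selection is easier to keep consistent when it's written down as an explicit policy. The sketch below assigns a faceted URL exactly one treatment based on its parameters; the parameter names and the `INDEXABLE_COMBOS` allowlist are hypothetical placeholders for what your own search-demand research supports.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical policy: which parameters get which treatment.
BLOCK_PARAMS = {"sessionid", "sort"}           # disallowed in robots.txt
INDEXABLE_COMBOS = {frozenset({"brand"})}      # facet sets with proven search demand


def facet_action(url: str) -> str:
    """Classify a faceted URL as 'index', 'noindex', or 'block' under the policy above."""
    params = set(parse_qs(urlsplit(url).query))
    if not params:
        return "index"  # clean category URL
    if params & BLOCK_PARAMS:
        return "block"
    if frozenset(params) in INDEXABLE_COMBOS:
        return "index"
    return "noindex"  # useful for navigation, not for search results


for url in [
    "https://shop.example/running-shoes",
    "https://shop.example/running-shoes?brand=acme",
    "https://shop.example/running-shoes?brand=acme&sort=price-asc",
    "https://shop.example/running-shoes?colour=red&size=9",
]:
    print(facet_action(url), url)
```

One treatment per pattern matters: Google can't read a noindex or canonical tag on a URL that robots.txt stops it from crawling, so stacking directives on blocked URLs does nothing.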

Pagination creates the same kind of waste. Category pages paginated beyond page 2 or 3 usually deliver low crawl value. Many teams add rel="next" and rel="prev" markup (Google dropped them as indexing signals, but they still add structural context). Some pair that with canonical tags on paginated pages pointing to page 1, but Google's pagination guidance recommends giving each page in a series its own canonical URL instead, because later pages list different products and aren't duplicates of page 1. Some sites go further. They block paginated pages beyond a defined threshold in robots.txt.

Those decisions need proof. The key diagnostic step for e-commerce sites is to run server logs through a log analyser, then segment crawl activity by URL pattern. If parameter-based URLs account for more than 30% of Googlebot's crawl activity on the site, that's a clear budget leak and it cuts crawl coverage for core product pages.
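Once Googlebot requests are isolated, the parameter share is a simple ratio. A minimal sketch, assuming a list of request targets from verified Googlebot hits (the sample is made up) and the 30% rule of thumb above:

```python
from urllib.parse import urlsplit

LEAK_THRESHOLD = 0.30  # the 30% rule of thumb above


def parameter_share(googlebot_targets: list[str]) -> float:
    """Fraction of Googlebot requests whose URL carries a query string."""
    if not googlebot_targets:
        return 0.0
    with_params = sum(1 for target in googlebot_targets if urlsplit(target).query)
    return with_params / len(googlebot_targets)


hits = ["/shoes", "/shoes?colour=red", "/shoes?sort=price-asc", "/boots", "/boots?sessionid=x"]
share = parameter_share(hits)
verdict = "likely budget leak" if share > LEAK_THRESHOLD else "within threshold"
print(f"{share:.0%} of Googlebot requests hit parameter URLs: {verdict}")
```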

How to Measure Crawl Budget Optimization Progress Over Time

Crawl budget optimization isn't a one-time fix. It needs ongoing monitoring because architecture shifts, new content ships, and Googlebot's behavior changes. The measurement framework has to stay consistent, or we can't trust the trendline.

Primary metrics to track monthly:

- Total crawl requests (GSC Crawl Stats) - should stabilise or rise after we remove low-value URLs

- Crawl response time (GSC Crawl Stats) - keep it under 500ms consistently

- Crawl response code distribution - track the share of 200s vs. 3xx, 4xx, 5xx over time

- Indexed pages vs. submitted pages (GSC Page indexing report, formerly the Coverage report) - the gap between submitted and indexed URLs flags crawl or quality issues

- New page indexing lag - manually track how long newly published priority pages take to appear in Google's index
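Indexing lag is simple to compute once publish times and first-indexed times are recorded somewhere consistent. This sketch assumes both are logged by hand as ISO 8601 strings (for example, noting the time a URL Inspection check first shows the page as indexed); the URLs and timestamps are illustrative. Pages not yet indexed are listed separately rather than silently dropped.

```python
from datetime import datetime
from statistics import median


def indexing_lag_hours(published: dict[str, str], first_indexed: dict[str, str]) -> dict[str, float]:
    """Hours from publication to first confirmed indexing, for pages indexed so far."""
    lags = {}
    for url, pub in published.items():
        if url in first_indexed:
            delta = datetime.fromisoformat(first_indexed[url]) - datetime.fromisoformat(pub)
            lags[url] = delta.total_seconds() / 3600
    return lags


published = {
    "/docs/new-feature": "2025-03-01T09:00",
    "/pricing": "2025-03-02T12:00",
    "/blog/launch": "2025-03-03T08:00",
}
first_indexed = {
    "/docs/new-feature": "2025-03-03T09:00",
    "/pricing": "2025-03-02T20:00",
}

lags = indexing_lag_hours(published, first_indexed)
not_indexed = sorted(set(published) - set(first_indexed))
print("median lag (hours):", median(lags.values()))
print("still waiting:", not_indexed)
```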

Secondary signals worth monitoring:

- Crawl frequency of specific priority URLs (from server logs filtered by URL)

- Changes in crawl depth distribution (confirm priority pages get crawled at a shallower depth after internal linking work)

- Correlation between new external links acquired and crawl frequency changes for those specific URLs
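For the link-to-crawl correlation, compare equal windows before and after a link went live. The sketch takes the dates Googlebot requested one URL (from your logs) and the link's go-live date; the dates are made up. Treat the result as a signal, not proof: content updates, sitemap changes, or internal linking work in the same window can move crawl rates too.

```python
from datetime import date, timedelta


def crawl_rate_change(
    crawl_dates: list[date], link_live: date, window_days: int = 28
) -> tuple[float, float]:
    """Googlebot crawls per week in equal windows before and after a link went live."""
    start = link_live - timedelta(days=window_days)
    end = link_live + timedelta(days=window_days)
    before = sum(1 for d in crawl_dates if start <= d < link_live)
    after = sum(1 for d in crawl_dates if link_live <= d < end)
    weeks = window_days / 7
    return before / weeks, after / weeks


link_live = date(2025, 4, 1)
crawls = [date(2025, 3, 10), date(2025, 3, 24)] + [
    link_live + timedelta(days=3 * i) for i in range(8)
]
before, after = crawl_rate_change(crawls, link_live)
print(f"{before:.1f} crawls/week before, {after:.1f} after")
```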

Start with a baseline before any changes go live. Capture the current state of each metric, roll out optimization changes in batches (not all at once, so attribution stays clean), then measure again at 30 and 90 days. Crawl pattern shifts don't happen overnight - Googlebot adjusts over weeks.

Faster indexing is the clearest win. If the publishing team sees new content show up in search results within hours instead of days, and ranking updates for optimized pages roll out faster, the crawl budget work is doing its job. Folding crawl budget metrics into your broader SEO reporting process keeps the data visible to stakeholders and makes it easier to justify ongoing investment in technical optimization.

Frequently Asked Questions About Crawl Budget

What is crawl budget and how does Google calculate it?

Crawl budget is the number of URLs Googlebot will crawl on your site within a given timeframe.

Google calculates it using two interacting systems: crawl capacity limit (how many requests your server can handle without degrading user experience) and crawl demand (how valuable Google considers your pages to be, based on signals like URL popularity and content freshness). Put those together and you get how much of your site Googlebot crawls and how often it revisits individual pages. Google's official definition and documentation are published at developers.google.com/crawling/docs/crawl-budget.

What is the difference between crawl rate limit and crawl demand?

Crawl rate limit (also called crawl capacity limit) is the ceiling on how aggressively Googlebot will crawl your site, based on your server's speed and reliability. Crawl demand is Google's assessment of how worth crawling your pages are, driven by their popularity (link signals) and how recently their content has changed.

Those two levers don't always move together. A site can have a high crawl rate limit but low crawl demand (fast server, low-value content), or high crawl demand but a constrained crawl rate limit (popular content, slow server). Solid crawl budget work deals with both.

How do I check how much crawl budget my site is using?

Start with Google Search Console's Crawl Stats report. Navigate to your GSC property, click Settings in the left sidebar, and select Crawl Stats. You'll see total crawl requests, response code distribution, crawl response times, and crawl activity by file type and Googlebot variant over the past 90 days.

That 90-day view is useful, but it won't tell you everything. For deeper analysis, server log files processed through tools like Screaming Frog Log File Analyser or Semrush Log File Analyser give you URL-level detail on what Googlebot is crawling, when, and with what response.

Does getting more backlinks increase crawl budget?

Yes. Google's crawl budget documentation confirms that URL popularity - measured using link signals, including external backlinks - is a primary driver of crawl demand. Pages with more high-quality backlinks get higher crawl demand, so Googlebot comes back more often.

That makes link building a two-for-one play: it builds ranking authority and increases crawl frequency for those specific URLs. Pointing strong referring domains at under-crawled but commercially important pages is one of the most effective and durable crawl budget optimization techniques available.

Should I use robots.txt or noindex to manage crawl budget?

It depends on your goal. Robots.txt blocks Googlebot from crawling a URL but does not prevent it from being indexed - a blocked URL can still appear in search results if external links point to it. Noindex allows crawling but prevents indexing - Googlebot must be able to access the page to read the noindex directive.

Use robots.txt to block URL patterns that have no indexing value and that you don't want crawled at all, like admin pages and parameter variants. Use noindex for pages with internal value that shouldn't appear in search results. Use canonical tags to consolidate duplicate content signals without blocking access.

These tools aren't interchangeable. Pick the wrong one and you create problems that are often harder to diagnose than the original issue.

How long does it take to see improvements after crawl budget optimization?

Crawl behavior changes aren't immediate. Googlebot adapts its crawl patterns over weeks, not days.

After implementing significant changes - blocking large volumes of low-value URLs, resolving redirect chains, improving server response times - expect to see changes in your GSC Crawl Stats data within 30 days, with more pronounced shifts visible at the 60-90 day mark. The clearest signal is faster indexing of new and updated priority pages.

Track new page indexing lag as your primary success metric. If content that previously took two weeks to index now appears within 48 hours, your optimization is working.
